Microsoft just validated Anthropic's entire go-to-market strategy

$13 billion into OpenAI, and the biggest product launch of 2026 runs on Claude.

On March 9, Microsoft announced Copilot Cowork — the centerpiece of its new $99/user/month M365 E7 Frontier Worker Suite, generally available May 1. Built, in Microsoft’s own words, “in close collaboration with Anthropic,” it brings the technology powering Claude Cowork directly into Microsoft 365.

Microsoft has invested over $13 billion in OpenAI since 2019. It holds a ~27% stake valued at $135 billion after the October restructuring. Yet for the flagship launch it’s betting on to convert 450 million M365 commercial users into paying AI customers, it chose someone else’s model.

Two weeks ago, I wrote that the real divide between Microsoft and Anthropic isn’t scheduling versus continuous context — it’s governance philosophy. Top-down control versus bottom-up extensibility. Today, Microsoft made that divide literal: Anthropic’s capability, wrapped in Microsoft’s governance layer. The question is what that architecture tells us about where AI value actually lives.

The $13 billion hedge

The official framing is tidy.

“Microsoft 365 Copilot is model diverse by design,” the announcement reads. Jared Spataro, Microsoft’s CMO for AI at Work, told Fortune that Claude Cowork is “a fantastic tool” with “real limitations in corporate environments.” Translation: great brain, needs adult supervision.

Or is “model diverse by design” revisionist branding dressed up as a strategic pivot?

Microsoft’s $5 billion investment in Anthropic last November, alongside a $30 billion Azure compute commitment from Anthropic, wasn’t a diversification play from day one. It was a response to watching a disturbing pattern unfold.

ELI5: What’s happening here? Imagine you’ve spent years and billions building a restaurant around one chef. Then you quietly hire a second chef — from a rival kitchen — to cook the dishes you’re putting on the tasting menu. You can call it “menu diversity.” Your first chef might call it something else.

The numbers tell the story Microsoft’s press releases don’t.

Out of roughly 450 million M365 commercial users, only 15 million pay for Copilot — 3.3% penetration. Seat growth looks healthy at 160% year-over-year.

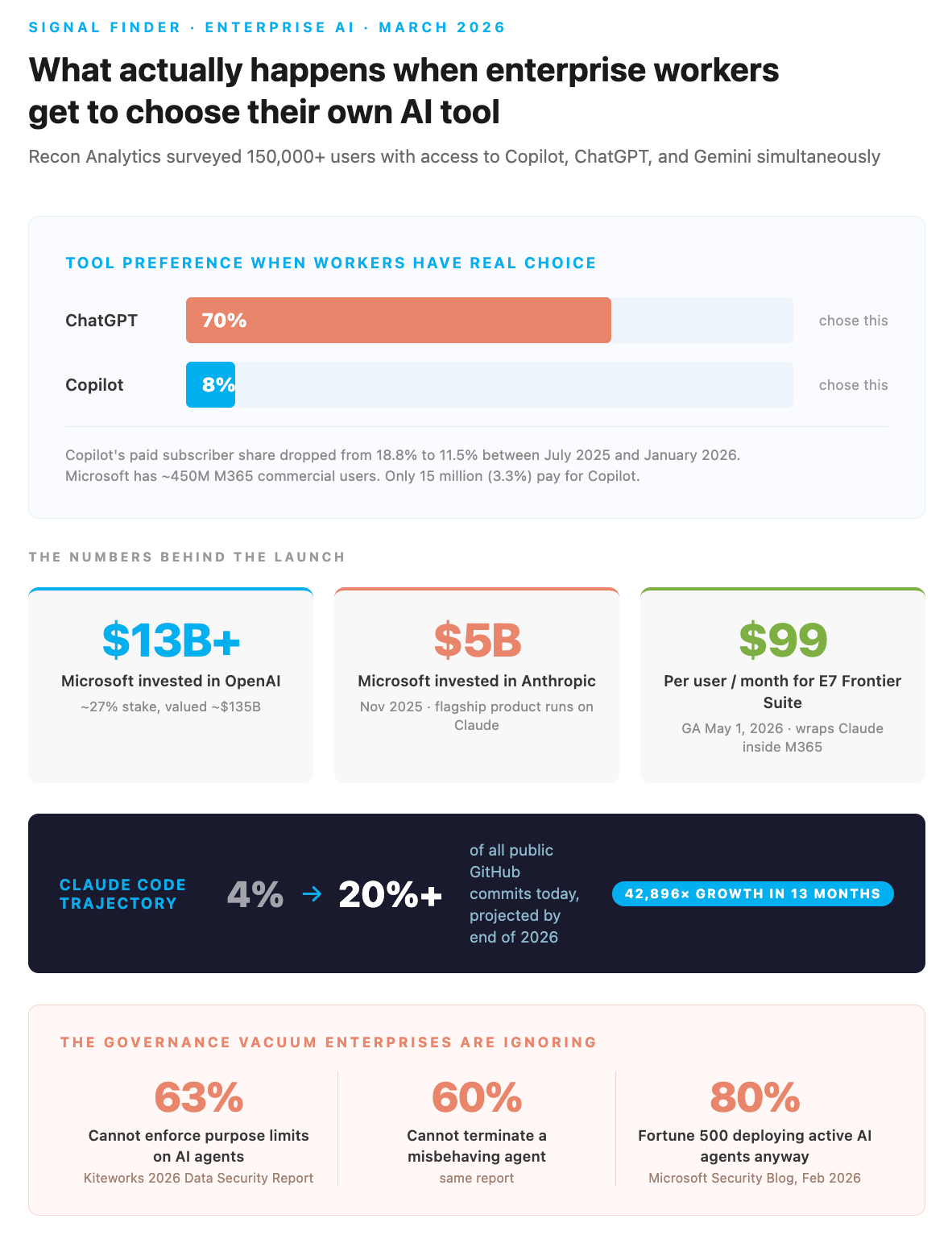

Here’s the data point that should keep Redmond awake: when Recon Analytics surveyed 150,000+ enterprise users who had access to Copilot, ChatGPT, and Gemini, only 8% chose Copilot. Seventy percent chose ChatGPT. Copilot’s paid subscriber share dropped from 18.8% to 11.5% between July 2025 and January 2026.

Pre-installed doesn’t mean preferred. Microsoft can put Copilot on every M365 seat in the world, and users still reach for something else.

If this sounds familiar, it should. Microsoft bundled Internet Explorer with every copy of Windows for a decade. Chrome won anyway because the product was better.

The pattern that actually matters

Something shifted in the last six months. It happened across every major AI tool, all at once. Claude Code runs locally in your terminal, working directly with your project files. Claude Cowork does the same on your desktop (Mac or Windows) with folder-level permissions. And now Copilot Cowork brings that same “work with your actual files” pattern into M365.

ELI5: What does “agentic” mean? Most AI tools today work like a texting buddy — you ask a question, it answers. Agentic AI is different. It’s more like a colleague who sits at a desk next to you, opens the same files you’re working on, and does actual tasks: edits a spreadsheet, drafts an email from your inbox, reviews a contract in your folder. It doesn’t just talk about work. It does work.

Claude Code now accounts for 4% of all public GitHub commits — roughly 135,000 per day — with 42,896x growth in 13 months. SemiAnalysis projects it could hit 20%+ by the end of 2026. In a UC San Diego/Cornell survey of 99 professional developers, Claude Code ranked first in usage, ahead of GitHub Copilot and Cursor.

ELI5: What is Claude Code? It’s an AI tool that lives in your terminal (the command-line interface developers use). Instead of chatting in a browser, it works directly inside your code projects — reading files, writing code, running tests, committing changes. Think of it as the difference between texting a contractor photos of your kitchen versus handing them the keys.

In practice: A developer uses Claude Code to refactor a 2,000-line authentication module. It reads the existing code, proposes changes across 14 files, runs the test suite, and commits. A legal ops team uses Claude Cowork to review vendor contracts against a standard playbook stored in a local folder. A financial analyst connects Copilot Cowork to their SharePoint models and asks it to stress-test three scenarios without ever copying data into a chat window.

The same pattern is now reaching workers who’ve never touched a terminal — and this is where the Microsoft comparison gets uncomfortable.

Anthropic recently pushed skills directly into the PowerPoint and Excel add-ins.

Skills are not prompts.

A prompt is a one-time instruction: “make this slide cleaner.”

A skill is an encoded workflow, a defined sequence of steps built once, executed identically every time, and shareable across your entire organization via a team account.

Your CFO writes a variance analysis skill that reads every formula in a spreadsheet, calculates actual-vs-target gaps, and adds plain-English explanations as cell comments. Done once. Now every analyst on the team types /variance and gets that same audit in under a minute, without writing a single prompt or knowing anything about how the skill works underneath.
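To make the "encoded workflow" idea concrete, here is a minimal Python sketch of what a variance skill could boil down to. It is not how Anthropic's skills are actually packaged; the names `LineItem`, `explain_variance`, and `run_variance_skill`, and the 5% review threshold, are all assumptions made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class LineItem:
    name: str
    actual: float
    target: float


def explain_variance(item: LineItem, threshold_pct: float = 5.0) -> str:
    """Return a plain-English comment for one actual-vs-target gap."""
    gap = item.actual - item.target
    # Guard against a zero target so the percentage is always defined.
    pct = (gap / item.target * 100) if item.target else 0.0
    direction = "above" if gap > 0 else "below" if gap < 0 else "on"
    # The 5% default threshold is a hypothetical house rule, not a standard.
    flag = "REVIEW" if abs(pct) >= threshold_pct else "OK"
    return f"[{flag}] {item.name}: {abs(pct):.1f}% {direction} target ({gap:+,.0f})"


def run_variance_skill(items: list[LineItem]) -> list[str]:
    """Apply the same fixed steps to every line item, whoever invokes it."""
    return [explain_variance(item) for item in items]


report = run_variance_skill([
    LineItem("Travel", actual=118_000, target=100_000),
    LineItem("Software", actual=49_000, target=50_000),
])
for comment in report:
    print(comment)
```

The point of the sketch is the shape, not the math: the logic is written once by someone who knows the work, and every caller gets identical steps rather than whatever they happened to prompt.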

Copilot in Office is generative. “Help me write this.” “Summarize that.”

Claude Cowork is running a live feed of your entire session — not a snapshot when you open the document, a continuous feed. Ask it mid-execution what changed three slides ago and it knows, because it’s been watching the whole time.

That’s a different product, not just a different model.

Microsoft also hasn’t shipped org-deployable skill workflows for the Office add-ins: no slash commands, and no way for a CIO to push a skills library to every employee’s instance. Still, Spataro wasn’t wrong when he called Claude Cowork “a fantastic tool” with “real limitations in corporate environments.”

Most enterprise IT teams haven’t built the governance infrastructure yet, and that gap is exactly what makes the live-session capability uncomfortable to deploy at scale.

That matters more than any single feature.

Today, effective AI assistance in Office requires every worker to engage with AI consistently. McKinsey’s Superagency data shows only 13% of employees use it for 30% or more of their daily work. Skills flip that around: institutional knowledge gets encoded once by someone who knows the work deeply, then anyone on the team invokes it with a slash command. The 87% who never built that habit get the same output as the 13% who did.

The Gartner contradiction

Two numbers. Hold both.

Gartner predicts 40% of enterprise applications will feature task-specific AI agents by the end of 2026, up from less than 5% in 2025. In essentially the same breath, they predict over 40% of agentic AI projects will be canceled by the end of 2027.

Both predictions are probably right.

The rush to deploy agents is real. So is the organizational failure rate. McKinsey’s “Superagency” data reveals why:

C-suite leaders estimate 4% of their employees use GenAI for 30%+ of daily work. The actual number is 13%.

And 47% of employees say they’ll reach that threshold within a year, versus only 20% of executives who believe it.

The people doing the work are ahead of the people buying the tools.

This is exactly the shadow AI pattern from post #4 — playing out now at the agent layer.

The governance gap is staggering.

Kiteworks’ 2026 Data Security Report found that:

63% of organizations cannot enforce purpose limitations on AI agents.

60% cannot terminate a misbehaving agent.

Meanwhile, per Microsoft’s security blog, 80% of the Fortune 500 are deploying active AI agents anyway.

Spataro’s framing of Claude Cowork having “real limitations in corporate environments” is both accurate and self-serving.

The governance wrapper is valuable.

The question is whether it’s $99/user/month valuable when the underlying capability comes from someone else’s model.

The IBM inversion

In 1985, IBM and Microsoft co-developed OS/2. Microsoft simultaneously built Windows on the side. When Windows 3.0 took off, Microsoft pivoted and IBM was left holding the bag. The partner who controlled distribution won. The partner who contributed the engineering lost.

Now the roles are inverted. Microsoft is the enterprise distribution giant relying on a partner’s technology. Anthropic is the capability provider who could, at any point, decide the wrapper is less valuable than the brain.

The 13 enterprise plugins Anthropic launched for Claude Cowork in February (including Google Drive, Gmail, Calendar, DocuSign, FactSet, and Slack) suggest they’re already building their own wrapper. The PowerPoint and Excel skills update sharpens the point: Anthropic pushed org-shareable skills directly into the Office add-ins weeks before Microsoft’s E7 Frontier Suite ships in May.

Enterprises that want Claude’s agentic capability inside Office don’t need to wait for the $99/user/month wrapper. They can have it today.

There’s also MCP, the Model Context Protocol, which Anthropic created and then donated to the Linux Foundation’s Agentic AI Foundation, co-founded with Block and OpenAI and supported by Google, Microsoft, and AWS. It has over 10,000 active public servers and has been adopted by ChatGPT, Cursor, Gemini, Copilot, and VS Code.

ELI5: What is MCP? It’s a universal adapter standard for AI tools. Like how USB-C lets you plug any charger into any laptop, MCP lets any AI model connect to any data source — your email, your files, your databases. Before MCP, every AI tool needed custom integrations. Now there’s one standard plug. The fact that Microsoft, Google, and OpenAI all adopted Anthropic’s standard tells you something about who’s setting the technical agenda.
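For the curious, the "one standard plug" is less mysterious than it sounds. As I understand the published spec, MCP messages are JSON-RPC 2.0, and a client asks a server to run a tool with a `tools/call` request. The Python sketch below only builds that message; the helper name `mcp_tool_call` and the `search_files` tool with its arguments are hypothetical, and a real client would also handle the initialization handshake and transport.

```python
import json
from itertools import count

# Simple incrementing ids so each request can be matched to its response.
_request_ids = count(1)


def mcp_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 request asking an MCP server to run a tool."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# "search_files" is a made-up tool; a real server advertises its own tool list.
request = mcp_tool_call("search_files", {"query": "vendor contract", "limit": 5})
print(request)
```

Because every server speaks this same envelope, swapping the model on one side or the data source on the other does not require a new integration.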

When the ingredients become branded, when “Powered by Claude” is the 2026 version of “Intel Inside,” the platform provider has a problem. If customers start asking “which model runs this?” instead of “which platform is it on?”, value migrates from the wrapper to the brain.

What this means for your stack decision

In post #7, I argued that model choice is the least consequential decision in your AI strategy. At the chatbot layer, that’s still true. At the agent layer — where the AI touches your actual files, makes decisions, and takes actions — model capability starts to matter a lot more.

Three things to do now:

Test the “work with your actual files” pattern before buying the wrapper. Claude Cowork for Teams is economical to try. Claude Code has a generous free tier. See whether agentic AI delivers value with your actual workflows before committing to a $99/user/month bundle. The M365 E7 suite doesn’t reach GA until May, so you have time. Just make sure you set Claude up in a configuration that does not share enterprise data with Anthropic.

Measure preference, not deployment. Copilot’s 3.3% penetration and 8% preference rate should be a warning. Buying seats is procurement. Usage is signal. If you’re already paying for Copilot, run the Recon Analytics test yourself: give a cohort access to multiple tools and see what they actually choose.

Solve the governance gap before scaling agents. The Kiteworks data (63% can’t enforce purpose limitations, 60% can’t terminate misbehaving agents) is an organizational emergency, not a vendor feature request. No amount of wrapper sophistication fixes a governance vacuum. Microsoft’s Agent 365, included in the E7 license, is pitched as one way to close that gap.
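On the second item, measuring preference rather than deployment, the analysis itself is not hard once you have session logs. Here is a rough Python sketch, assuming you can export (user, tool) pairs from whatever telemetry you have. The function name `preference_share` and the sample log are invented for illustration, and this is not Recon Analytics’ methodology.

```python
from collections import Counter


def preference_share(sessions: list[tuple[str, str]]) -> dict[str, float]:
    """Share of users whose most-used tool is each tool.

    `sessions` holds one (user_id, tool_name) pair per session.
    """
    per_user: dict[str, Counter] = {}
    for user, tool in sessions:
        per_user.setdefault(user, Counter())[tool] += 1
    # A user's "preferred" tool is the one they opened most often.
    # Ties go to whichever tool that user opened first.
    favorites = Counter(c.most_common(1)[0][0] for c in per_user.values())
    total = sum(favorites.values())
    return {tool: n / total for tool, n in favorites.items()}


# Made-up sample log: four users, all with access to every tool.
log = [
    ("ana", "ChatGPT"), ("ana", "ChatGPT"), ("ana", "Copilot"),
    ("ben", "Claude"), ("ben", "Claude"),
    ("cy", "Copilot"), ("cy", "Copilot"), ("cy", "ChatGPT"),
    ("di", "ChatGPT"),
]
shares = preference_share(log)
print(shares)
```

Counting users rather than raw sessions keeps one heavy user from skewing the picture, which is the distinction between seats bought and tools chosen.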

To be fair

The strongest read of this announcement isn’t that Microsoft is hedging against OpenAI — it’s that Microsoft is doing exactly what good platform companies do.

Azure already hosts Llama, Mistral, and Grok alongside OpenAI’s models. Adding Claude to Copilot could be the same playbook applied one layer up: pick the best model for each job, wrap it in your security and compliance layer, and charge for the wrapper. If you’re a CIO who’s already committed to M365, that’s arguably good news — you get Claude’s agentic capability without managing another vendor relationship.

The uncomfortable question is whether the “confession” framing is too neat. Microsoft’s $5 billion Anthropic investment is about 3.7% of its $135 billion OpenAI stake.

That’s a rounding error, not a pivot.

Call it what it is: Microsoft is becoming a model-agnostic distribution layer — which is either a position of strength (they own the customer relationship) or weakness (they’ve admitted they can’t win on the tech itself).

There’s a second concern worth naming: Anthropic is burning cash at an estimated $8-10 billion per year in compute.

Claude’s computer-use scores (72.5% on OSWorld versus GPT-5.2 at 38.2%) explain why Microsoft chose it for agentic tasks. But those scores don’t answer what happens to every enterprise that built on Claude if the next funding round doesn’t close.

The “Intel Inside” analogy flatters Anthropic — Intel’s position eventually commoditized when AMD and ARM caught up. Whether “Powered by Claude” becomes a genuine buying signal or stays a footnote in the release notes is still an open question.

Claude Code is at 4% of GitHub commits today and accelerating. If it hits 20% by year-end, we’ll look back at the Copilot Cowork announcement not as the moment Microsoft embraced model diversity — but as the moment the distribution layer started losing the argument.

What are you seeing on the ground: are your teams reaching for what IT approved, or what actually works?

References:

Microsoft: Introducing the First Frontier Suite Built on Intelligence + Trust

Microsoft 365 Blog: Copilot Cowork — A New Way of Getting Work Done

Fortune: Microsoft Copilot Cowork, AI Agents, Anthropic, E7, M365 SaaS

Microsoft Blog: Microsoft, Nvidia, and Anthropic Strategic Partnerships

Microsoft Blog: The Next Chapter of the Microsoft-OpenAI Partnership

Recon Analytics: AI Choice 2026 — Why Licenses Don’t Equal Adoption

Gartner: 40% of Enterprise Apps Will Feature Task-Specific AI Agents by 2026

Gartner: Over 40% of Agentic AI Projects Will Be Canceled by End of 2027

Microsoft Security Blog: 80% of Fortune 500 Use Active AI Agents

Anthropic: Donating the Model Context Protocol to the Linux Foundation