When your AI prediction ages badly, the interesting part is why

I said Anthropic wouldn't do scheduling. They just did.

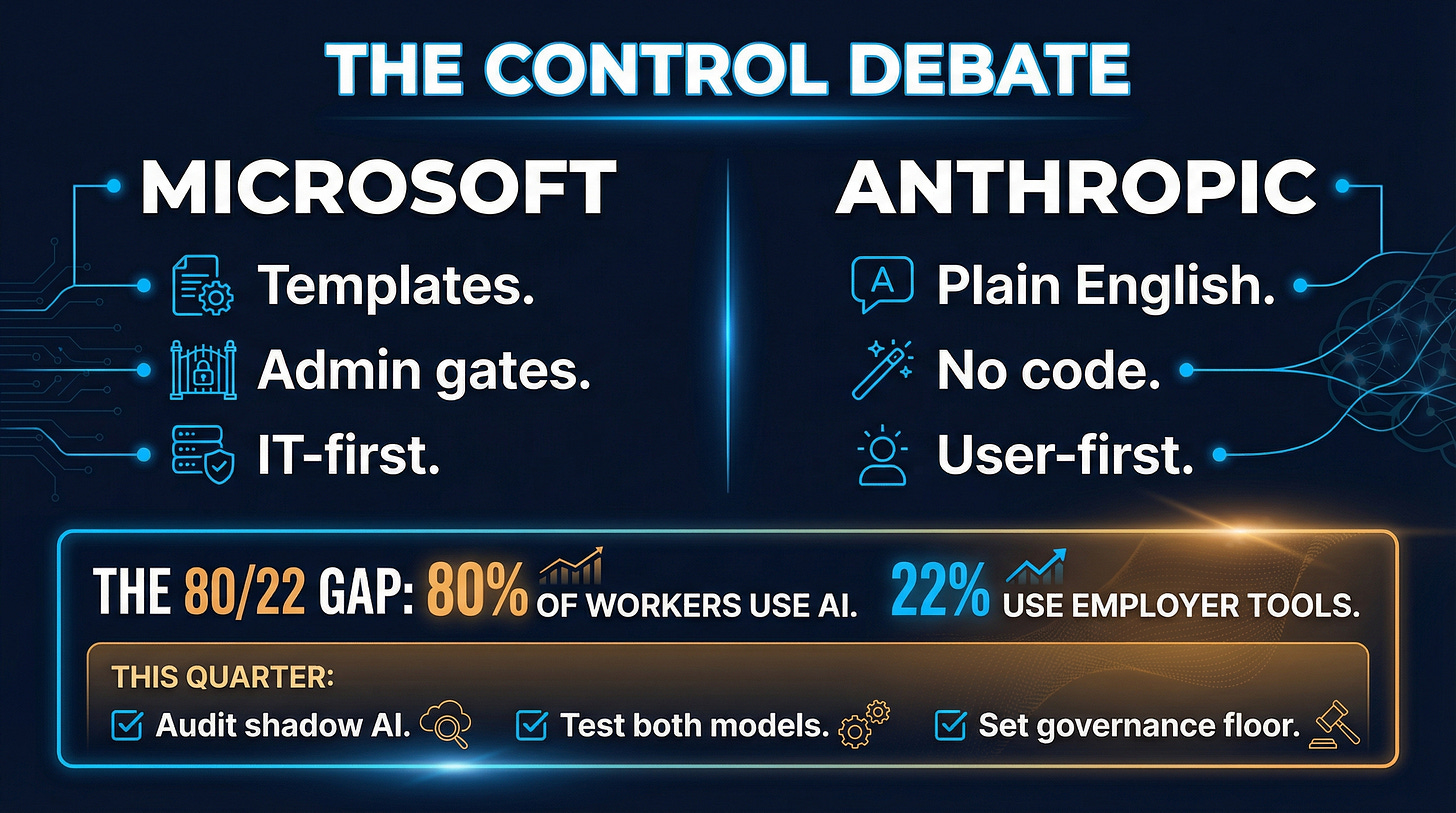

Three weeks ago, I wrote that Microsoft and Anthropic represented two fundamentally different philosophies of AI automation. Microsoft was betting on scheduled workflows. Anthropic was betting on continuous context. I framed it as a genuine fork in the road.

Then, on February 25th, Anthropic launched Scheduled Tasks in Claude Cowork. Daily, weekly, hourly, on-demand. The very capability I said they were deliberately avoiding.

And one I am thoroughly enjoying!

So I was wrong. But I was wrong in a way that reveals something more important than the original argument.

The prediction that missed

Let me be specific about what I got wrong. I argued that Anthropic’s approach to work automation was philosophically opposed to scheduling. That their persistent-context model meant they didn’t need to carve work into discrete, time-triggered chunks. That scheduling was Microsoft’s game, and Anthropic was playing a different one entirely.

The original piece drew a clean line between two visions.

Clean lines make for good writing. They don’t always survive contact with product roadmaps. Especially in the age of exponential AI!

What actually happened: Anthropic looked at the same data everyone else is looking at and reached the same conclusion. Recurring knowledge work needs automation.

Claude Cowork’s scheduled tasks let users define recurring jobs in natural language, pick a cadence, and let Claude handle the rest. No code required. No IT ticket. No template library.

That last part is where the original thesis wasn’t entirely wrong. It was just aiming at the wrong variable.

Scheduling was never the divide

Both launches reveal the same thing: the interesting difference between Microsoft and Anthropic was never whether to schedule AI work.

Microsoft Teams AI Workflows, which completed global rollout in mid-February, operates through a specific governance model.

Admin-controlled scheduling.

Predefined templates.

IT enablement required.

The feature ships turned OFF by default and requires explicit admin activation through Power Platform security policies.

Anthropic’s version starts from the opposite end. A knowledge worker opens Claude, describes a recurring task in plain English, sets a schedule, and it runs. The plugin architecture, launched January 30th, adds MCP integrations, sub-agents, and custom slash commands. An enterprise plugin catalog is planned but not yet shipped.

Same capability. Radically different theory of adoption.

Microsoft is building top-down governance: IT defines what’s possible, employees operate within those boundaries. This is the logical extension of the “Frontier Firm” vision from Ignite 2025.

Anthropic is building bottom-up extensibility: individuals define what they need, governance layers get added as organizations mature.

This is a control debate, not a scheduling debate.

The shadow AI problem neither has solved

The control question matters more than the feature question.

According to Cybersecurity Dive, 80% of office workers already use AI tools, but only 22% use employer-provided ones. I wrote about this gap in Secret Cyborgs Are Already Running Your Company. That gap is a design problem. Shadow AI exists because approved systems solve the wrong problem at the wrong altitude.

Microsoft’s approach addresses the IT leader’s fear: ungoverned AI doing ungoverned things.

Fair.

But if the governance model creates enough friction that workers route around it, you’ve built a compliance artifact, not a productivity tool.

Anthropic’s approach addresses the knowledge worker’s frustration: I know exactly what I need automated, just let me describe it.

Also fair.

But without governance scaffolding, you get 200 employees running 200 custom automations with no visibility, no audit trail, and no consistency.

McKinsey’s latest data sharpens the point: 88% of organizations now use AI in some capacity, but only 39% report any impact on EBIT.

The gap between adoption and value isn’t about which AI you pick. It’s about whether the automation model matches how work actually flows through your organization.

What this means for leaders making platform bets

Most organizations will need both philosophies, and the ones that pick a side too early will pay for it.

This pattern repeats in enterprise environments.

A top-down platform gets mandated, adoption stays shallow, and the real work happens in unsanctioned tools. Or a bottom-up tool spreads virally, creates genuine value, then gets killed by security review because nobody planned for governance.

The organizations that extract actual value from AI automation in 2026 are the ones that treat this as a sequencing problem, not a platform choice.

This quarter:

Audit your shadow AI. Not to shut it down, but to learn what problems your people are actually solving. The 80/22 gap is a map of unmet needs.

Test both models on the same workflow. Pick one recurring knowledge task. Run it through Microsoft’s template-driven approach and through a natural language definition in Claude. Compare not just output quality but adoption friction.

Define your governance floor, not your governance ceiling. The non-negotiable controls are data boundaries, audit logging, and access controls. Everything above that floor should be flexible enough to accommodate both directions.
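To make the "floor" idea concrete, here is a minimal sketch in Python. It is an illustration only: the control names, the `ScheduledAutomation` record, and the `platform` labels are all hypothetical, and nothing here reflects an actual Microsoft or Anthropic API. The point is that the floor is a short, fixed checklist applied the same way to every automation, whichever direction it came from.

```python
from dataclasses import dataclass

# Hypothetical names for the three floor controls described in the text.
FLOOR_CONTROLS = ("data_boundary", "audit_logging", "access_control")


@dataclass(frozen=True)
class ScheduledAutomation:
    """An illustrative inventory record, not a real platform object."""
    name: str
    owner: str
    platform: str        # assumed labels: "template" or "natural_language"
    controls: frozenset  # floor controls this automation already satisfies


def missing_floor_controls(automation):
    """Return the floor controls the automation lacks, in checklist order."""
    return [c for c in FLOOR_CONTROLS if c not in automation.controls]


def review(automations):
    """Map each non-compliant automation name to its missing controls."""
    report = {}
    for automation in automations:
        missing = missing_floor_controls(automation)
        if missing:
            report[automation.name] = missing
    return report
```

A check like this deliberately ignores how the automation was defined. A template-driven workflow and a plain-English scheduled task pass or fail on the same three questions, which is what keeps the floor from quietly becoming a ceiling.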

This year:

The market for AI workflow automation is projected to reach $14.3B, with efficiency gains of up to 40% in knowledge work. That value won’t go to organizations that standardize on a single automation philosophy. It’ll go to organizations that build a governance layer thin enough to enable speed and thick enough to maintain control.

Microsoft’s six core capabilities for scaling agent adoption and Anthropic’s plugin catalog are both heading toward the same destination.

The question is whether your organization can move from both directions simultaneously, or whether you’ll pick a lane and wonder why the other half of your workforce isn’t in it.

My original article drew a clean line between two AI philosophies. Reality blurred it in three weeks. What is your organization actually optimizing for when it picks an AI automation platform: control or capability?

References:

Microsoft — Scheduled Prompts in M365 Copilot Chat (Tech Community)

Microsoft — 6 core capabilities to scale agent adoption in 2026

Prior articles in this series: