The Fifth Revolution: a Roadmap for the People in the Middle (Part 2)

In February 2021, Chegg was worth $14.7 billion. By 2026, it was trading at roughly $0.60 a share. Duolingo, facing the same AI disruption in the same industry, reported $1.04 billion in revenue, up 39%. The difference: Chegg sold answers that AI made free overnight. Duolingo sold the experience of learning that AI couldn’t replicate.

One company is bringing in more than a billion dollars a year. The other is at risk of getting delisted.

In “The Fifth Revolution: 250 Years of Evidence for What Happens Next (Part 1),” I traced this across five technological revolutions spanning 250 years. Every one of the revolutions created more jobs than it destroyed.

Every one had an Engels’ Pause, a brutal transition where productivity surged while the people doing the work saw almost none of the benefit.

The question is what to do about it.

I was on a bus in Ireland when my own AI system told me to stop working. Told me I was trading a once-in-a-lifetime memory for a marginal improvement in weekly output. That thought — AI makes you more productive and it makes you less present, less independent, less capable without it — runs through everything that follows here.

The centaur imperative

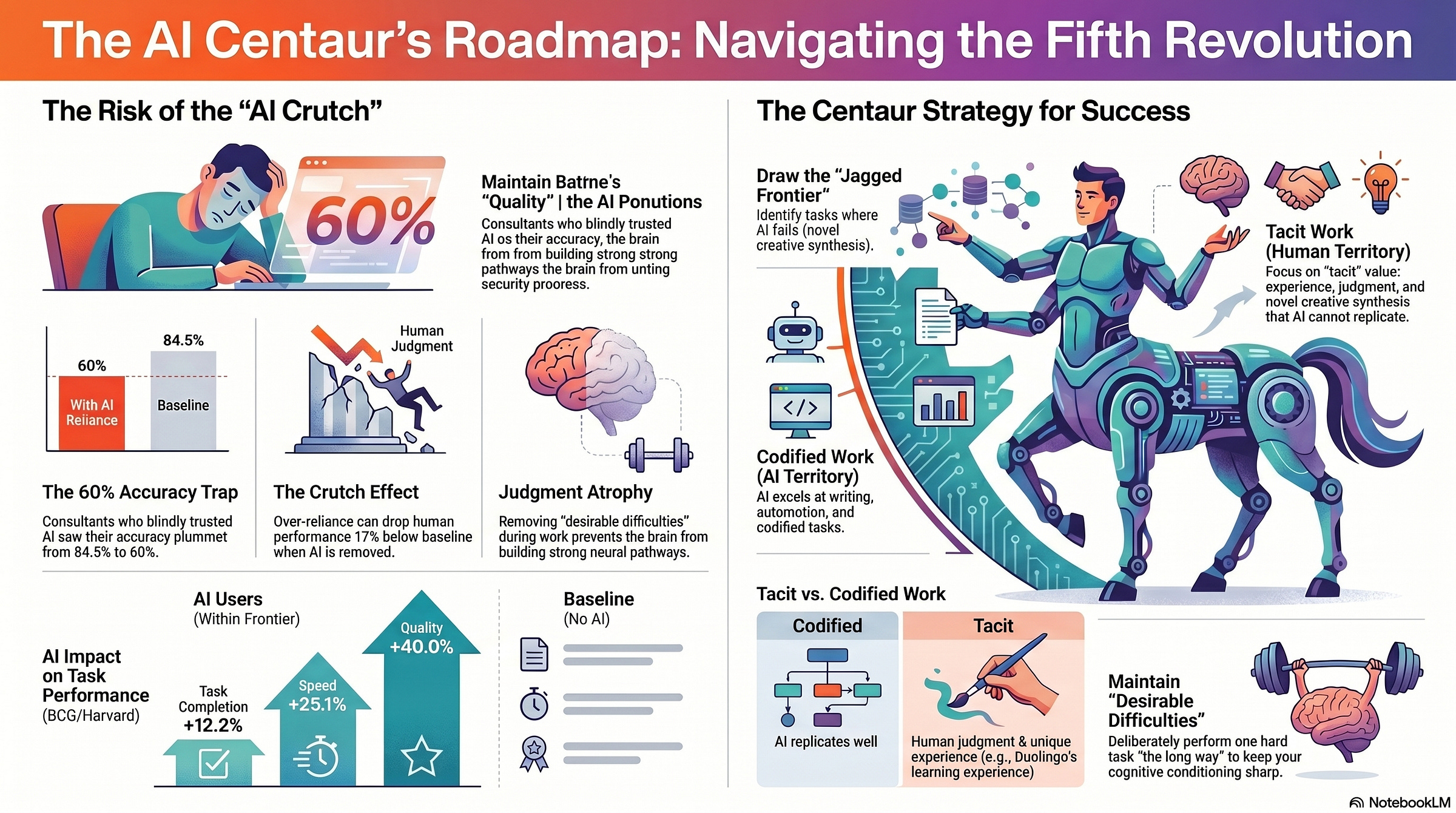

A Harvard Business School study tracked 758 BCG consultants through a controlled experiment. Some used AI. Some didn’t. The results split three ways.

Centaurs maintained a clear boundary between human and AI work. They knew which tasks they were good at and which tasks AI was good at, and they kept a clean division.

Cyborgs blurred the boundary, starting a thought, letting AI extend it, weaving judgment back in. Both centaurs and cyborgs significantly outperformed the control group. AI users completed 12.2% more tasks, 25.1% faster, at 40% higher quality on tasks within AI’s frontier.

That phrase, “within AI’s frontier,” is doing more work than it looks like. The researchers called it the jagged technological frontier.

AI’s capability boundary isn’t a clean line.

It handles tasks that look difficult (market sizing, persuasive writing, multi-step analysis) and fails at tasks that look simple (integrating information the model hasn’t seen, novel creative synthesis, catching its own confident errors).

The jaggedness is the trap.

If you can’t tell which side of the frontier a task falls on, you either under-use the tool or over-trust it.

The third group matters most.

Consultants who received prompt engineering guidance and trusted AI output without independent verification saw their accuracy drop from 84.5% to 60%. Not a decline in speed. A decline in correctness. The study’s conclusion: “Over-reliance on AI output, not ignorance of the task, was the mechanism of failure.”

The people who failed were the ones who trusted AI too much. The AI didn’t replace their judgment. It replaced their need to use judgment. And when judgment atrophied, accuracy collapsed.

Robert Bjork has been studying what he calls desirable difficulties since 1994. The core finding hasn’t changed: genuine learning requires struggle. Difficulty during training hurts immediate performance but builds stronger long-term retention. The conditions that frustrate you, the blank page, the stuck feeling, the problem you can’t see around, are producing the strongest neural pathways. AI removes that friction. And it feels great. You feel smarter. You’re getting slower.

The OECD (Organisation for Economic Co-operation and Development) published data in 2026 showing a 48% performance improvement when workers used AI. When the AI was removed, performance dropped 17% below baseline. Not back to baseline. Below it. The crutch effect is measurable.

The study tracked 18,000 workers across 15 countries and 11 industries. It wasn’t a fluke of one sector or one kind of task. The pattern held for analysts, writers, coders, and managers alike. When the tool was present, output surged. When it was removed, something had quietly eroded. Workers who used AI most heavily showed the steepest post-removal decline. The 17% drop wasn’t random degradation. It tracked with usage intensity. The more you leaned on the crutch, the harder you fell without it.

Think about this like an athlete. Nobody questions whether NFL quarterbacks should use AI for film study. They should. The AI catches formations they’d miss, surfaces patterns across games, and compresses hours of tape into minutes. But no quarterback skips sprints because the film study is more efficient. The physical conditioning builds something the AI can’t replicate. Cognitive conditioning works the same way. Use AI for the film study. Run the sprints anyway.

AI fluency is a genuine competitive advantage.

The centaurs and cyborgs prove that. But AI fluency and independent cognitive capability are two different skills, and the people who let one atrophy in favor of the other will end up in the 60% accuracy group. The consultants who trusted AI blindly were educated, high-performing professionals at one of the world’s top firms.

The failure mode was comfort, not ignorance.

The builders

I gave a keynote at HackKU last weekend. Four stories from that stage stuck with me because each one illustrates a different principle for the roadmap.

Matthew Gallagher built Medvi, a telehealth company, with $20,000 and his brother. Two employees. $401 million in sales in the first year, tracking toward $1.8 billion. AI handled the operational infrastructure: scheduling, documentation, patient matching, compliance workflows. The leverage from AI is real.

But in February 2026, the FDA issued a warning letter for misbranding violations. Investigators found allegations of more than 800 fake doctor accounts on Facebook. A class action lawsuit was filed in March 2026. Over 5,000 active ads running under questionable accounts.

AI amplifies whatever you already are. Including your ethics. Medvi is a cautionary tale about building something at scale without the institutional checks that scale requires. The “one-person billion-dollar company” narrative might be one person precisely because no compliance officer, no medical board, no chief ethics officer was in the room to say no.

AI made it possible. Judgment, or the absence of it, made it what it is.

Peter Steinberger built the first prototype of OpenClaw, now one of the most important open-source agent frameworks, in about an hour at a party. Connected WhatsApp to a command-line interface. The repo has 247,000 GitHub stars and 47,700 forks. He joined OpenAI in February 2026.

That’s the part that gets cited in “AI is democratizing everything” threads. The part that gets left out: Steinberger previously built PSPDFKit, a PDF framework running on over a billion devices, which he sold for $100 million. He had 13 years of deep engineering expertise before the AI moment. The one-hour prototype was built on a career’s worth of pattern recognition.

AI is a force multiplier on existing capability. The people building the most impressive things with AI aren’t beginners who discovered a cheat code. They’re experienced practitioners who suddenly have a power tool that matches their expertise.

Chieko Asakawa lost her sight completely by age 14 after a swimming pool accident at 11. She became an IBM Fellow. She built the world’s first practical voice browser in 1997, more than a decade before Siri or Alexa. In 2025, her AI Suitcase, a navigational robot with generative AI that describes surroundings in real time, was demonstrated at the Osaka World Expo.

Thirty years of building things the world needed but nobody imagined. And nobody imagined them because the people designing technology had never navigated a world they couldn’t see.

Asakawa didn’t compete with sighted engineers. She saw problems they didn’t know existed. The voice browser was a recognition that screens were the wrong interface entirely.

The Japan contrast

Same technology. Different intention. Opposite outcome.

Japan’s population has declined for the fourteenth consecutive year. The working-age population is 59.6% of the total. There aren’t enough humans to do the work that needs doing. Automation is a survival strategy, not a cost play.

Research published through the University of Chicago found that:

In Japan, one additional robot per 1,000 workers increases employment by 2.2%.

The same metric in the United States decreases employment by 1.6%.

The technology is identical. The institutional context is opposite. In the US, automation is deployed to cut costs. Workers resist because they correctly understand the intention. The political and social friction around automation is a rational response to a system that treats displacement as a driver of savings.

In Japan, automation is deployed to keep the lights on. Workers accept it because the alternative is that the work doesn’t get done at all.

Robots fill gaps that no worker exists to fill. The political friction dissolves because the intention is continuity.

Ask this before every AI deployment: are you deploying this because there aren’t enough people to do the work? Or to do the work with fewer people?

The technology is the same. The intention shapes the outcome.

And employees can tell the difference.

Japan proves that AI’s labor market impact is institutional, not technological. How you choose to deploy it matters more than what it can do.

Seven guiding lights

1. Know where the floor is rising

AI automates codified knowledge (answers, templates, standard procedures, anything that could be looked up, generated, or pattern-matched from existing data). The cognitive floor, the minimum level of knowledge work that requires a human, is rising fast.

Map your daily work. Literally. Take a week and track every task. Classify each one: is this codified (AI could do it with the right data) or tacit (it requires judgment, context, relationships, or the experience of having been wrong before)?

If your work is 80% codified, you’re Chegg.

If it’s 80% tacit, you’re Duolingo.

Most people are somewhere in between, and the honest version of the exercise reveals that the codified percentage is higher than they want to admit.
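The audit above can be sketched in a few lines of Python. This is a minimal sketch, assuming you log each task with the hours it took and make your own yes-or-no call on whether it is codified. The `Task` fields, the sample week, and the choice to weight by hours rather than by task count are illustrative assumptions, not something taken from the studies cited here.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    hours: float
    codified: bool  # True: AI could do it with the right data. False: tacit.


def codified_share(tasks: list[Task]) -> float:
    """Return the fraction of tracked hours spent on codified work."""
    total = sum(t.hours for t in tasks)
    if total == 0:
        raise ValueError("no hours tracked")
    return sum(t.hours for t in tasks if t.codified) / total


# Hypothetical week of tracked work (illustrative entries only).
week = [
    Task("compile weekly metrics report", 6.0, True),
    Task("draft standard client update", 4.0, True),
    Task("negotiate scope with a client", 5.0, False),
    Task("diagnose a novel production issue", 5.0, False),
]

share = codified_share(week)
print(f"{share:.0%} of tracked hours are codified")
```

The number is only as honest as the labels. If a task feels tacit but you could write down the steps for a new hire, it probably belongs in the codified column.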

The Dallas Fed data gives this teeth: AI substitutes for codified work and augments tacit work. The 13% employment decline among young workers in AI-exposed sectors is concentrated in roles that were primarily codified. The workers whose jobs survived are the ones whose daily work required judgment that AI couldn’t replicate.

Ensure your value isn’t built entirely on codified work.

Every professional should be able to answer the question: what do I do that requires having been in the room?

2. Build the centaur model, not the autopilot

The BCG data is clear. Both centaurs and cyborgs outperform. Blind trusters collapse. The model that works is a deliberate division of cognitive labor.

The centaur version: I handle the judgment calls, the client relationship, the novel problem framing. AI handles the research synthesis, the first draft, the data compilation. Clean boundary. I know where the handoff is.

The cyborg version: I start a thought. AI extends it. I weave my experience back in. AI refines. The line between my thinking and AI’s contribution is blurred, but my judgment is in the loop at every iteration. This is how I work on my Substack articles.

Both require something most people haven’t had to think about:

Knowing what you’re actually good at.

Not what your job title says.

Not what your LinkedIn profile claims.

What you do that consistently produces outcomes others can’t replicate.

That’s the boundary line. Draw it consciously before the AI draws it for you.

I argued in “AI native is not a generation. It’s a drive.” that intrinsic motivation, not demographics, predicts AI success.

The centaur and cyborg approaches are what that drive looks like in practice.

3. Maintain desirable difficulties deliberately

Bjork’s research spans three decades and the finding hasn’t changed: the conditions that frustrate you during learning are the conditions that build the strongest retention. Spacing, interleaving, retrieval practice. The struggle is the signal.

Remove the struggle and the learning collapses.

AI removes struggle with ruthless efficiency. That’s its selling point.

Stuck on a problem? Ask the model.

Need to structure an argument? Let AI draft the outline.

Can’t remember the research? Ask for a summary.

Every friction point that used to force your brain to do the work is now optional.

The OECD crutch effect data makes this concrete: 48% better with AI, 17% worse without it. Performance doesn’t return to baseline when the tool is removed. It drops below baseline. The tool created dependency.
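To see how large the gap is, here is the arithmetic as a short Python sketch. It assumes both percentages are measured against the same pre-AI baseline, which is my reading of the figures, and it uses a hypothetical output index of 100 for that baseline.

```python
# Hypothetical output index for a worker before any AI use.
baseline = 100.0

# Figures quoted above, both assumed to be relative to the same baseline.
with_ai = baseline * (1 + 0.48)        # 48% improvement while using AI
after_removal = baseline * (1 - 0.17)  # 17% below baseline once AI is removed

# Size of the fall from assisted performance to unassisted performance.
drop_from_peak = (with_ai - after_removal) / with_ai

print(f"with AI: {with_ai:.0f}")
print(f"after removal: {after_removal:.0f}")
print(f"fall from assisted level: {drop_from_peak:.1%}")
```

Under that assumption, the worker who takes the tool away does not lose 17% of what they were producing last week. They lose roughly 44% of it.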

For every task you delegate to AI, keep one task you do the hard way. Write one section of every report without AI. Do one analysis by hand before checking it against the model’s output. Solve one problem by sitting with the discomfort instead of reaching for the prompt.

Quarterbacks use AI for film study. They still run sprints. Endurance athletes use AI for training optimization. They still run in the rain.

The AI handles what it’s good at. The human maintains what only struggle builds.

Myelination (the process by which the brain wraps nerve fibers in insulation through repeated effortful practice, making signals faster and stronger) is what separates the consultant who can think independently from the one whose accuracy drops to 60% after handing judgment to the tool.

That’s neuroscience, not metaphor.

4. Learn to see what’s missing

Asakawa saw what sighted engineers never imagined because she navigated a world they’d never experienced. Joy Buolamwini, whose Gender Shades research at MIT measured accuracy disparities in facial analysis systems, saw what trained algorithms couldn’t because she asked a question the training data had no answer for: what happens when the system encounters someone who doesn’t look like its training set?

The most valuable skill in an AI-saturated world is noticing absence. AI processes abundance better than any human ever will. But whose frustration isn’t represented in the data? What problem hasn’t been articulated? Which customer stopped calling, and why? What question isn’t being asked?

AI generates answers from existing data. It can’t notice that the data is incomplete. It can’t feel the frustration of a problem nobody has named yet. It can’t sit in a room and recognize that the most important person isn’t there.

Ask yourself:

Who’s not in this room?

Whose frustration haven’t I imagined yet?

What’s the problem that nobody’s complaining about because they’ve given up?

Those questions are the new frontier. And the frontier is where the new jobs have always come from, in every single revolution.

5. Redesign the work itself

One study I found reports that 94% of organizations are adding AI to existing workflows instead of redesigning work around AI’s capabilities. It’s the same mistake factories made with electricity in the 1870s: they bolted electric motors where the steam engine had been, kept the same layout, and saw near-zero productivity gain for 40 years. Henry Ford figured out that electricity meant machines could be arranged by production flow, not proximity to a power source. The assembly line was an electricity invention, not a manufacturing one.

Brynjolfsson’s $1-to-$10 ratio, roughly $10 of complementary investment in redesigning work for every $1 spent on the technology itself, means that organizations spending millions on AI licenses while spending nothing on work redesign are repeating that mistake. The technology is cheap. The Ford assembly line was expensive. The assembly line is what created the value.

The person who uses AI to do their existing job 20% faster is valuable. The person who reimagines what their job could be when AI handles the codified parts is irreplaceable. One is incremental. The other is structural.

The Solow Paradox resolved when organizations stopped treating computers as faster typewriters and started building business models that only computers could enable.

Amazon isn’t a faster bookstore.

Google isn’t a faster library.

Uber isn’t a faster taxi dispatcher.

Each one redesigned the unit of work around what computing made possible.

That redesign is coming for AI. The individuals and organizations who figure it out first will compound their advantage for decades.

6. Three words are a product brief

At HackKU, I ran a phone exercise with the audience. Take out your phone. Think about one thing that frustrates you every single week. Something you encounter so often that you’ve stopped noticing it. Type three words.

Those three words are a product brief.

The AI is free. The APIs are accessible. The infrastructure to build a prototype has never been cheaper. The only thing that cannot be manufactured, cannot be generated, cannot be prompted into existence, is those three words.

Because they came from your life. Your commute. Your workflow. Your family’s medical bills.

Your frustration with a system that was designed for someone else.

Gallagher’s three words were something like “healthcare access sucks.” Steinberger’s were probably “mobile access sucks.” Every product that has ever solved a real problem started with someone who couldn’t ignore a frustration anymore.

Agency is the skill AI can’t replicate. The ability to ask questions that come from lived experience. The ability to look at a broken system and say “this is broken and I’m going to fix it.” AI can help you build the fix. AI can accelerate the prototype, generate the code, design the interface.

AI cannot generate the frustration that makes the fix worth building.

7. The pause will end. Position for what comes after.

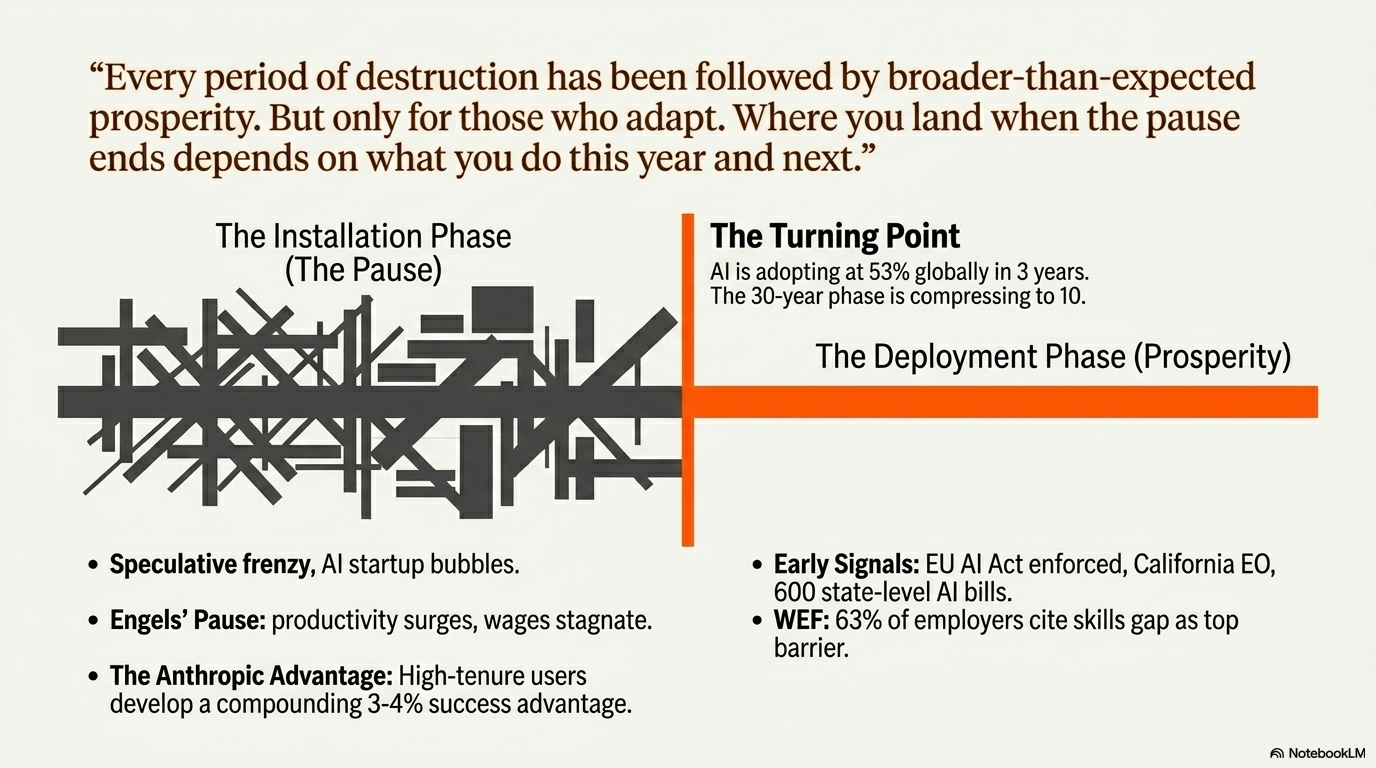

The Engels’ Pause ended. Wages rose 123%, outpacing productivity growth. The Solow Paradox resolved. Computers eventually produced the productivity gains economists predicted.

Every installation phase has given way to a deployment phase. Every period of destruction has been followed by broader-than-expected prosperity. (I trace this full 250-year arc in “The Fifth Revolution: 250 Years of Evidence for What Happens Next.”)

But only for those who adapted. The weavers who retrained as factory managers did well. The weavers who didn’t, didn’t.

The canal workers who became railway engineers prospered. The canal workers who waited for the canals to come back waited forever.

Perez’s framework places the current moment in the installation phase: speculative frenzy, massive capital expenditure, financial bubbles, institutional lag.

The deployment phase, the period of broadly shared prosperity, comes after the turning point.

That turning point hasn’t arrived yet.

The $1.366 trillion in infrastructure spending, the AI startup bubble, the venture capital frenzy: those are installation-phase signals.

The people who build AI fluency during the installation phase will compound their advantage into the deployment phase. Anthropic’s economic data shows that high-tenure users develop a compounding 3-4 percentage point success advantage.

Six months of deliberate AI use today translates to a structural advantage that widens over time.

One caveat: the historical analogy assumes institutional adaptation happens at historical speed. AI is being adopted faster than any prior revolution (53% global adoption in 3 years). If the installation phase compresses from 30 years to 10, the institutional response needs to compress proportionally. The track record on institutional speed is not encouraging.

But that adaptation is starting. The EU AI Act is being enforced. California has issued an executive order on AI procurement standards. 600 state-level AI bills are moving through US legislatures. The WEF reports that 63% of employers cite the skills gap as their top barrier. These are the early signals of the deployment phase, the institutional framework catching up to the technological capability.

Where you land when the pause ends depends on what you do this year and next.

The view from the bus

I sat on a bus in Ireland with the Atlantic out every window. Stone walls older than the country I live in. My son, who had just marched through Dublin on a day he’ll remember when he’s 50. The green hills of Clare. And I couldn’t see any of it because I was staring at my screen.

The system I built, an AI that reads my habits, protects my time, and occasionally tells me what I need to hear, saw something I couldn’t. It saw that I was trading a once-in-a-lifetime memory for a marginal improvement in weekly output. It did the math I wouldn’t do. And it made the call I wouldn’t make.

I closed the laptop. I watched the landscape change from rolling farmland to the raw Atlantic edge. I listened to the kids on the bus laugh about the parade.

I had a conversation with my son. I don’t remember the exact words, but I remember the feeling.

Chieko Asakawa lost her sight at 14 and spent 30 years building technologies for a world she navigated differently than everyone around her. She couldn’t see a screen. She couldn’t see a landscape. But she saw what every sighted engineer in every well-lit lab had missed: that technology designed only by people who can see will only serve people who can see.

The roadmap through the fifth revolution is about what you see when you look up from the screen.

The frustration nobody’s named. The person who isn’t in the room.

The problem that doesn’t have a dataset yet because nobody with power has experienced it.

250 years of evidence says the jobs will come. The transition will hurt. The pause will end.

And the people who build something meaningful, who see the problems AI can’t see, who maintain the judgment AI can’t replicate, who ask the questions AI can’t generate, will define what comes next.

References

Tier 2 -- academic/industry research

Dell’Acqua, Mollick, Lakhani et al. -- Navigating the Jagged Technological Frontier (Harvard/BCG)

Buolamwini -- Gender Shades: Intersectional Accuracy Disparities (MIT)