The Fifth Revolution: 250 years of evidence for what happens next (Part 1)

The Engels' Pause is back: what 40 years of stagnant wages in the 1800s tells us about AI in 2026

The intelligent system I built to make me more productive had to tell me to stop being productive.

My 15-year-old son was marching in the Dublin St. Patrick’s Day parade with his school band. The next day, we were on a tour bus driving toward the Cliffs of Moher. Beautiful Ireland out every window. Rolling green hills sectioned off by stone walls that have been standing for ages. The Atlantic getting closer.

And I was on my laptop. Writing Substack articles about AI. With AI.

The AI system I built to brainstorm, research, draft, and edit content flagged me:

Don’t let the I-should-be-productive voice ruin a once-in-a-lifetime day. You can’t manufacture this memory later.

The AI I spent months building to maximize my output looked at what I was doing and said: stop.

The thing it was protecting me from was itself.

I closed the laptop. It took me a minute. The pull of the screen felt like work, and work feels like identity, and identity feels like safety.

Especially right now, when the thing generating that pull is the same technology that has 48% of Gen Z saying AI’s risks outweigh its benefits in the workplace.

AI doesn’t feel like a threat when you’re using it. It feels like productivity. It feels like getting ahead. That’s what makes it so hard to see what’s actually being traded away.

And it’s what makes the harder question so difficult to think through:

Will AI take my ability to do my job?

The paradox nobody can resolve

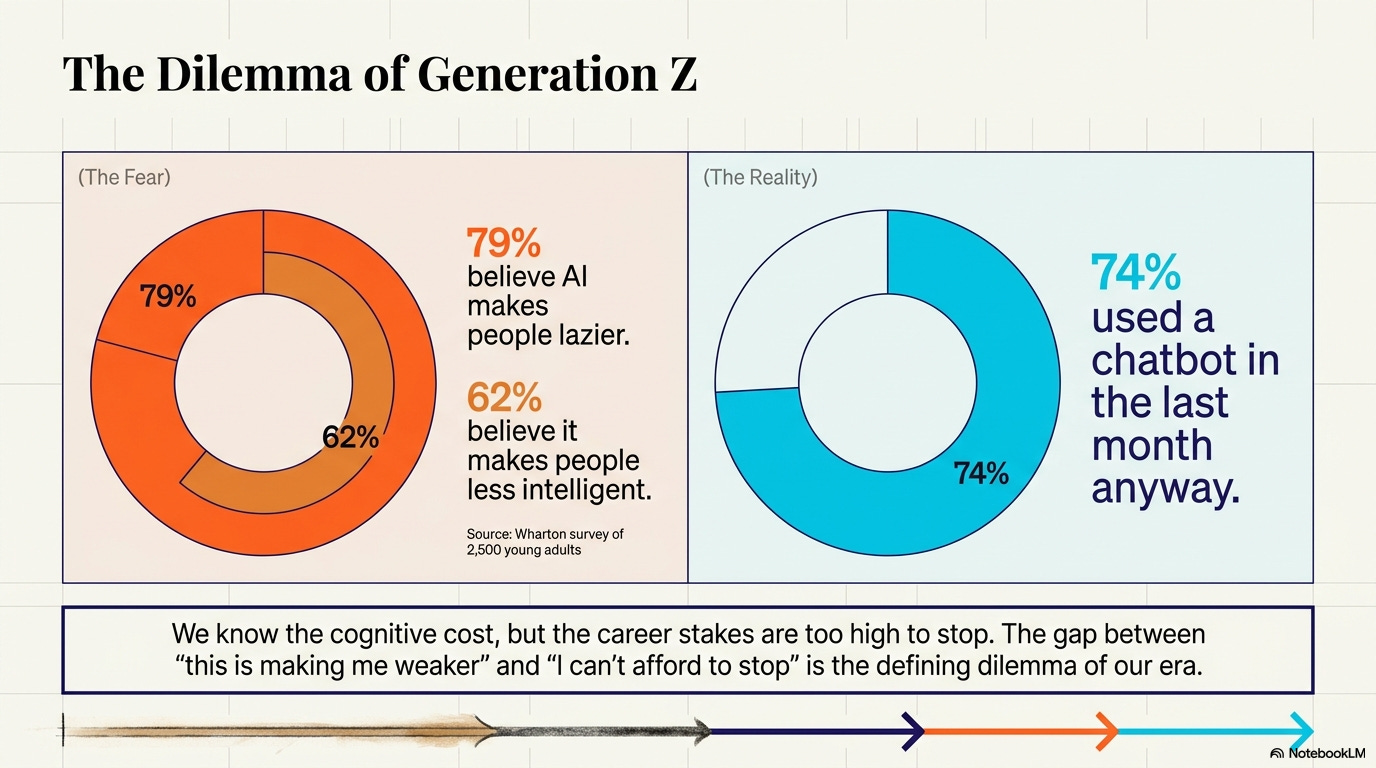

Gallup polled 1,572 Gen Z adults in April 2026.

Excitement about AI had dropped 14 points to 22%.

Anger had climbed 9 points to 31%.

A separate Wharton survey of 2,500 young adults found 79% believe AI makes people lazier and 62% believe it makes people less intelligent.

And 74% of them had used a chatbot in the previous month. Anyway.

That’s the clearest signal of what’s actually happening. An entire generation sees the trade-off with more clarity than anyone, names the cost out loud, and keeps going anyway. Because the career stakes outweigh the cognitive concerns. Because their classmates are using it.

Because the gap between “I know this is making me weaker” and “I can’t afford to stop” is exactly the kind of dilemma that previous technological revolutions created too.

Most conversations about AI and jobs treat the question as binary. Either AI will destroy all the jobs (doomer) or AI will create more than it destroys (optimist).

The actual evidence, across 250 years and five technological revolutions, says both are true.

The jobs come. The transition destroys lives. Those two facts uncomfortably coexist, and the evolving roadmap has to hold both of them.

Today, let’s zoom out across those 250 years to show that this split isn’t new. The pattern is worth a close look.

Five revolutions, one pattern

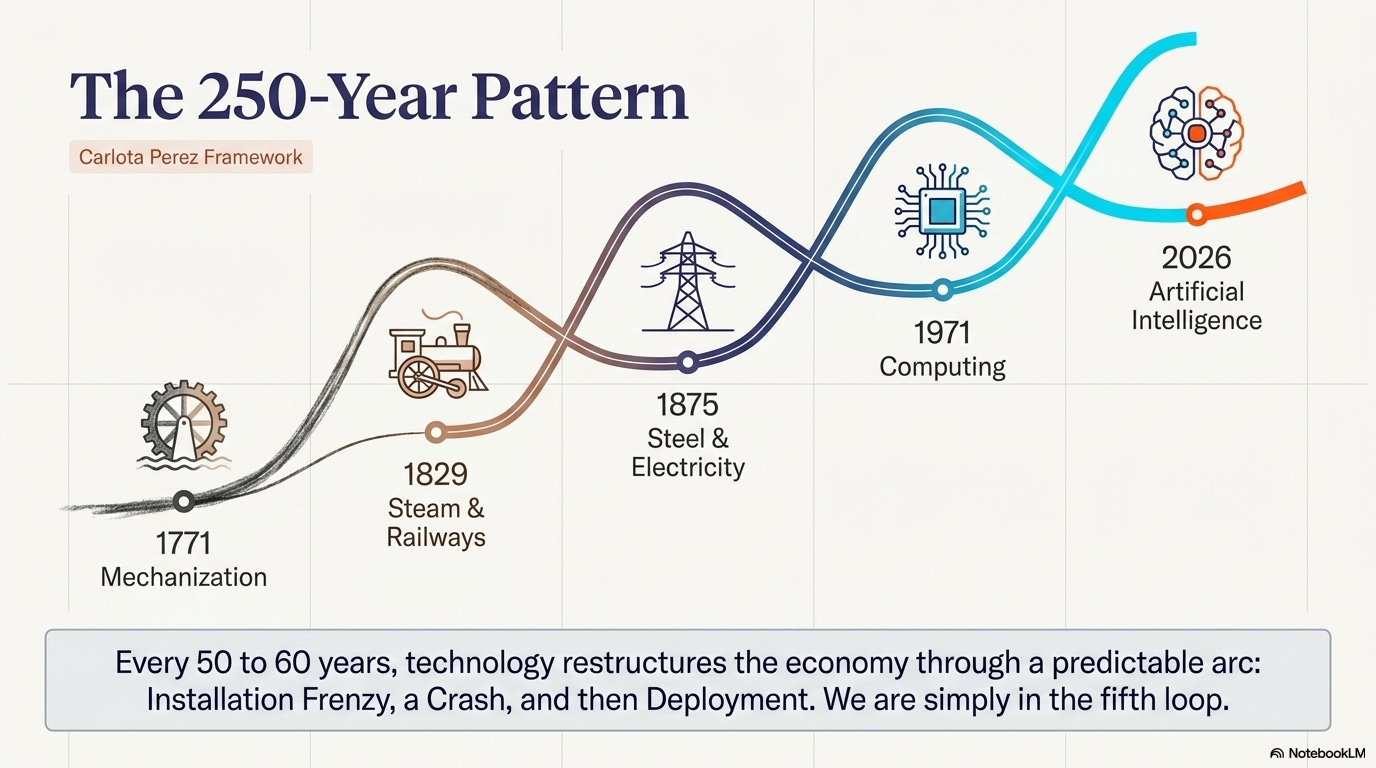

The economist Carlota Perez has spent her career documenting what she calls “great surges of development.” Her framework, published by Edward Elgar in 2002, identifies a recurring pattern:

Every 50 to 60 years, a new technology appears that restructures the entire economy.

Each revolution follows the same arc.

An installation phase of speculative frenzy, a turning point (usually a crash), and then a deployment phase where institutions catch up and broadly shared prosperity follows.

There have been five of these. AI is the fifth.

Understanding the first four is the only way to make sense of what might transpire with AI in the current moment.

Mechanization (1771)

Richard Arkwright’s water-powered cotton mill in Cromford, England, is the conventional starting point. Arkwright built a new way to organize labor. Skilled weavers and spinners who had controlled the pace and quality of textile production for generations watched their livelihoods collapse into factories where unskilled workers tended machines.

The response was the Luddites. Most people use “Luddite” to mean technophobe.

The actual Luddites were skilled textile workers in Nottingham, Yorkshire, and Lancashire between 1811 and 1816, and they protested machinery used “in a fraudulent and deceitful manner” to circumvent standard labor practices. Their objection was to factory owners using machines to de-skill the work, cut wages, and eliminate the apprenticeship system that had governed the trade for centuries. Ned Ludd, the movement’s supposed founder, was likely fictional: a name to rally around rather than a real person.

The British government’s response tells you how seriously they took the threat. Parliament deployed 12,000 troops to suppress the Luddites. As the historian Eric Hobsbawm documented, that was a larger force than the Duke of Wellington led into Portugal in 1808 during the Napoleonic Wars. Parliament made machine-breaking a capital crime with the Destruction of Stocking Frames Act of 1812.

Convicted Luddites faced execution or penal transportation to Australia.

The Luddites were organized skilled workers who understood exactly what was happening and fought it. And they lost. Not because they were wrong about the disruption, but because the economic forces behind mechanization were larger than any protest movement could contain.

What happened next is the part I rarely see written about, and the part I found most interesting.

ELI5: The Engels’ Pause

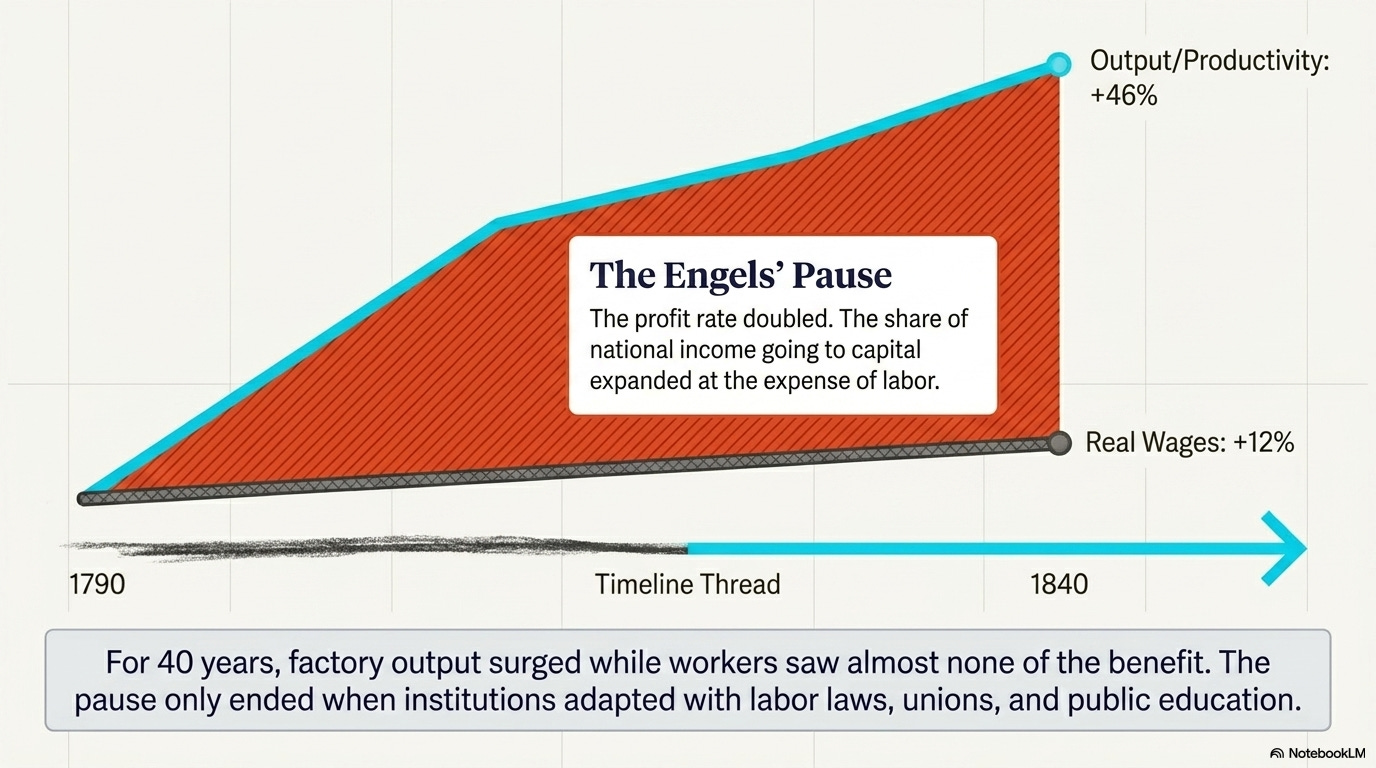

Imagine a factory that doubles its output. You’d expect the workers to get raises. For 40 years during the Industrial Revolution, they didn’t. Profits doubled while wages barely moved. Economist Robert Allen named this the “Engels’ Pause” after Friedrich Engels, who documented the misery firsthand. The pause eventually ended (wages grew even faster than productivity from 1840 to 1900) but only after major institutional changes: labor laws, unions, education reform.

Robert Allen’s research, published through Oxford Economic Papers, shows what happened in Britain between 1790 and 1840: output per worker rose 46%. Real wages rose 12%. For forty years, productivity surged while the people doing the work saw almost none of the benefit. The profit rate doubled. The share of national income going to capital expanded at the expense of labor and land.

Friedrich Engels documented what that looked like on the ground. Families in Manchester working 16-hour days. Children in mines. Life expectancy in industrial districts dropping below 20 years. This isn’t a thought experiment about what might happen. This is history as it happened for two generations.

Then the pause ended.

Between 1840 and 1900, output per worker increased 90%. Real wages increased 123%. Workers didn’t just catch up to productivity. They exceeded it. Wages grew faster than output, so labor’s share of national income rose, meaning the gains of the revolution were finally, belatedly, broadly shared.
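To put the two periods on the same footing, here is a minimal sketch in Python. The helper name `wage_vs_output` is my own invention for illustration; the only inputs are the four growth figures quoted above. It converts each pair of growth rates into an index and reports how far wages moved relative to output per worker.

```python
def wage_vs_output(output_growth_pct: float, wage_growth_pct: float) -> float:
    """Percent change in real wages relative to output per worker.

    Hypothetical helper for illustration: turns each growth rate into
    an index (1.46 for +46%) and compares the two indexes.
    """
    output_index = 1 + output_growth_pct / 100
    wage_index = 1 + wage_growth_pct / 100
    return (wage_index / output_index - 1) * 100


# Figures quoted in the text above.
pause = wage_vs_output(output_growth_pct=46, wage_growth_pct=12)      # the pause
catch_up = wage_vs_output(output_growth_pct=90, wage_growth_pct=123)  # 1840 to 1900

print(f"Pause: wages moved {pause:.1f}% relative to output per worker")
print(f"Catch-up: wages moved {catch_up:.1f}% relative to output per worker")
```

On these numbers, wages lost roughly 23% of ground against output during the pause and regained roughly 17% in the decades after 1840.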

What changed?

Institutions adapted, and the technology itself shifted:

The Factory Acts regulated working hours and conditions.

Trade unions won collective bargaining rights.

Public education expanded.

The economy restructured from labor-replacing technologies (the early spinning mills and power looms) to labor-augmenting ones (machine tools that made skilled workers more productive).

Carl Benedikt Frey’s research identifies this shift as the decisive factor:

The early Industrial Revolution was predominantly labor-replacing.

The later inventions were labor-augmenting.

The same choice faces AI today.

The mechanization revolution created entire new categories of work that didn’t exist before Arkwright’s mill:

factory management,

industrial engineering,

quality control,

logistics,

maintenance,

accounting at scale.

But those jobs didn’t arrive on day one. They arrived after the institutional framework caught up.

Steam and railways (1829)

In 1829, the directors of the Liverpool and Manchester Railway held a public competition to decide whether their new railway would be pulled by stationary steam engines with cables or by self-propelled locomotives. Five machines entered. George Stephenson’s Rocket was the only one that finished without breaking down, averaging 12 miles per hour over 70 miles.

The locomotive won. The railway opened the following year.

Within two decades, Britain’s canal system, coaching inns, and stagecoach network collapsed.

Canal workers, toll collectors, stagecoach drivers, hostlers, farriers, and the entire infrastructure of horse-powered transportation saw their industries evaporate.

The fear response was immediate and sometimes absurd.

Doctors diagnosed “railway spine,” a new medical condition allegedly caused by the vibration of train travel.

Newspapers warned that human lungs couldn’t process air at speeds exceeding 30 miles per hour.

Landowners worried that sparks from locomotives would set their fields on fire.

Some of those concerns were legitimate. Early rail travel did kill people. But the broader fear was existential:

a technology that compressed distance would destroy every business model built on the assumption that distance was expensive.

It did destroy those business models.

And it created

civil engineering as a profession,

urban planning as a discipline,

modern finance through the joint-stock company,

national labor markets that let workers move to where jobs existed, and

tourism as an industry.

None of those existed in anything like their modern form before the railway.

Every one of them employs more people today than the canal and coaching industries ever did.

Steel, electricity, and heavy industry (1875)

Before 1856, steel was a luxury material. A single ton cost roughly $10,000 in today’s money and took weeks to produce. Henry Bessemer figured out how to blast air through molten pig iron, burning off the carbon impurities in minutes instead of days. The cost of steel collapsed. By the 1870s, steel was cheap enough to build bridges, railways, and skyscrapers. Andrew Carnegie built an empire on the gap between the old price and the new one.

Then came electricity. In September 1882, Thomas Edison flipped a switch at the Pearl Street Station in lower Manhattan and lit up 400 lamps across 85 customers. It was the first commercial electrical power distribution system in the world. Within a few years, wires were strung across every major city, often haphazardly, often lethally.

The fear of electricity was vivid, specific, and grounded in real death.

“Everybody who ventures upon streets in a heavy storm runs a risk of making his next exit from home in a coffin.”

That’s the New York Tribune, January 23, 1889.

The New York World reported on January 19, 1890, that citizens “destroyed telegraphic devices and phones in terror.”

This wasn’t irrational. In October 1889, John Feeks, a Western Union linesman, died in public view on a Manhattan street after touching a high-voltage wire. His burning body remained tangled in the lines for over an hour while firemen tried to bring him down. This happened in broad daylight in a major city. The public reaction was a reasonable response to watching a man burn to death in an intersection.

When factories first adopted electric motors, they replaced the central steam engine with a central electric motor. Same factory layout. Same arrangement of machines around a central power shaft. Same workflow. The productivity gain was close to zero.

It took 40 years for manufacturers to realize that electricity changed the fundamental constraint. Steam engines required every machine to connect to a central shaft, so factories were arranged around power proximity. Electric motors could be distributed. Each machine could have its own motor. That meant machines could be arranged by production flow instead of by proximity to the power source.

Henry Ford figured this out.

The assembly line was an electricity invention, not a manufacturing invention. And it changed everything. But it took four decades for the organizational redesign to follow the technological capability.

*Every company bolting ChatGPT or Claude or Gemini onto its existing workflow is the factory owner who replaced the steam engine with an electric motor and wondered why nothing changed. Erik Brynjolfsson’s research through Brookings found that for every $1 invested in IT hardware, firms need to invest up to $10 in complementary organizational changes, training, and process redesign.*

*The technology is cheap. The reorganization is expensive. The organizations that redesign work around AI will capture the value. Everyone else will present the capital expense to the board as innovation.*

Computing (1971)

Intel’s 4004 microprocessor is the conventional marker. Within 30 years, typing pools disappeared. Switchboard operators vanished. Travel agents declined by 70%. Bookkeepers who reconciled ledgers by hand became redundant.

In 1987, Robert Solow won the Nobel Prize in Economics and made an observation that still hasn’t been fully explained: “You can see the computer age everywhere but in the productivity statistics.” Computers were on every desk. IT spending was surging. And aggregate productivity was flat. The Solow Paradox.

Organizations hadn’t reorganized around computers yet. When they did, in the late 1990s, productivity growth surged. And then it plateaued again, and economists are still arguing about why.

The fourth revolution created software engineering, data science, digital marketing, e-commerce, social media management, cybersecurity, UX design, cloud architecture, the gig economy, and every job title with “digital” in it. None of those categories existed in 1971. Together, they employ tens of millions of people. But the transition took decades, and the Solow Paradox was real for years before the gains materialized.

AI (now)

In February 2026, the Federal Reserve Bank of San Francisco published an economic letter concluding there was “limited evidence of a significant AI effect” on aggregate productivity. A general-purpose technology (one that reshapes entire sectors) typically takes “a generation or longer” to show up in the productivity data.

The Solow Paradox is repeating.

Stanford’s HAI reports that AI reached 53% global adoption in three years, faster than the PC or the internet. McKinsey estimates a $4.4 trillion productivity opportunity. Companies are spending at installation-phase rates. And the productivity statistics haven’t moved.

If you’ve read the first four revolutions carefully, you know what this means. The organizational redesign hasn’t happened yet. The electric motor phase. The central-shaft factory layout. The $10 in complementary change that organizations haven’t spent alongside their $1 in technology.

But AI has a feature that none of the previous four revolutions share.

Every previous revolution displaced physical labor and created cognitive labor as replacement. Steam displaced hand spinners and created factory managers. Electricity displaced artisanal manufacturers and created industrial engineers. Computing displaced clerical workers and created knowledge workers. Each time, the displaced workers (or more accurately their children and grandchildren) moved up the cognitive ladder.

AI attacks the cognitive ladder itself.

For the first time in 250 years, the technology displacing jobs is attacking the same cognitive work that previous revolutions created as the escape route. That’s the inversion. And it’s why “learn to code” is no longer the answer.

ELI5: The cognitive inversion

For 250 years, every new technology killed manual jobs and created thinking jobs. AI flips the pattern: it’s coming for the thinking jobs first. The question everyone’s asking is: what’s above thinking? The answer, based on data from ARC-AGI-3 benchmarks, is that AI scores below 1% on tasks requiring genuine novel reasoning while humans score 100%. AI can process existing knowledge. It struggles with situations nobody has encountered before. That gap is the roadmap.

The Engels’ Pause is here again

The Dallas Fed published data in January 2026 showing that employment in the most AI-exposed occupations declined from 16.4% to 15.5% of total employment between November 2022 and September 2025. The headline effect is about 0.1 percentage points of aggregate unemployment. Invisible in the macro data.

But disaggregate by age and the picture changes. Young workers’ job-finding rate declined more than 3 percentage points in highly AI-exposed occupations. In computer systems design alone, employment dropped 5% while wages rose 16.7% in the same period. The NBER found that of 37.1 million workers with high AI exposure, 6.1 million have both high exposure and low adaptive capacity. These are the workers with nowhere to go. Not college-educated. Not in urban areas with thick labor markets. Not young enough to retrain cheaply.

Goldman Sachs published research in April 2026 using 40 years of displacement data. Technology-displaced workers’ real earnings remain 10 percentage points below non-displaced workers a full decade later. The scarring goes beyond income: delayed homeownership, delayed household formation, lower probability of marriage.

The Bureau of Labor Statistics projects 5.2 million net new jobs between 2024 and 2034. The World Economic Forum projects 170 million new roles created against 92 million displaced, for a net gain of 78 million globally. The aggregate numbers are positive.

AI makes cognitive work cheaper. Cheaper cognitive work doesn’t mean less of it. It means more of it, deployed in more places, handled by fewer people. William Stanley Jevons observed this in 1865 with coal: more efficient steam engines didn’t reduce coal consumption, they made coal useful in so many new applications that total consumption exploded. The same dynamic applies to thinking. The total volume of knowledge work will grow. The humans doing it will be fewer, higher-skilled, and more expensive per head. The rest gets absorbed by the machine.

Both things are true at the same time. The jobs are coming. And the people caught in the transition are getting permanently damaged. This is the Engels’ Pause. Output rising. Wages flat or declining for specific cohorts. The profit share expanding at the expense of labor. In the 1840s, Friedrich Engels watched this happen in Manchester and wrote about it. In the 2020s, the Dallas Fed is documenting the same pattern in different language.

The people who survived the cut are doing well. There are just fewer of them. The ladder is being pulled up.

A 10-year earnings gap means a 25-year-old displaced in 2026 is still earning less than peers in 2036. At 35. With a decade of career development behind them. The aggregate job creation numbers are likely correct. The individual cost of being in the wrong cohort at the wrong time is also real and permanent. The roadmap has to hold both of these, because any argument that only holds one is dishonest.

To be fair: the strongest case that this time is different

Wassily Leontief won the Nobel Prize in Economics in 1973 and spent the rest of his career warning about labor displacement. His most famous observation, from 1983: “The role of humans as the most important factor of production is bound to diminish in the same way that the role of horses was first diminished and then eliminated.”

The horse analogy is the one that lands hardest. The US equine population grew sixfold between 1840 and 1900 as horses were integrated into industry and transportation. Then the internal combustion engine arrived. The horse population collapsed. Horses never got new jobs. There was no “equine retraining program.” There was no category of work that horses could do that engines couldn’t. The horse economy simply ended.

Is that what’s happening to cognitive workers?

The optimistic response is that humans aren’t horses. Humans retrain. Humans have political agency. Humans create art, build relationships, exercise judgment. Humans vote.

But retrain into what? The evidence on retraining is sobering. The Trade Adjustment Assistance program, the primary US government retraining initiative, has been studied extensively. Workers who participated earned 10 percentage points less than workers who didn’t participate. The retraining program made outcomes worse. The NBER found that 40% of Workforce Innovation and Opportunity Act participants are trained into low-wage support roles under $25,000 a year. The 200-year agricultural transition, from 90% of the workforce to 2%, delivered abundant food and new industries, and it destroyed rural communities so thoroughly that the consequences are still shaping American politics today.

Daron Acemoglu, who won the Nobel in 2024, has been making a different argument. The real danger is what he calls “so-so automation,” meaning technologies that replace jobs without actually improving outcomes. A self-checkout that eliminates a cashier but makes the shopping experience worse. An AI chatbot that replaces a customer service agent but resolves fewer issues. The job is gone but the work isn’t being done better. That’s the worst possible outcome: displacement without productivity gain.

ELI5: So-so automation

Imagine a restaurant replaces its waiters with tablets for ordering. The waiters are gone, but the food takes longer, orders get messed up more, and nobody’s there to recommend the special. The restaurant saved on labor. The dining experience got worse. That’s so-so automation. Acemoglu’s worry is that AI could do this to cognitive work: eliminate the job without actually doing the job well.

In every previous technological revolution, the new jobs came. It took decades. Sometimes a century. Nobody starved in the aggregate. The agricultural workforce shrank from 90% to 2% and food production exploded. But the humans caught in the middle didn’t have decades. They had one life. And the institutional adaptations that eventually ended the Engels’ Pause (the Factory Acts, the unions, the public education system) arrived too late for the people who needed them most.

The optimist and the pessimist are both right, and they’re arguing about timescale. Brynjolfsson is right about the 20-year outcome. Acemoglu is right about the 5-year transition. The question for anyone reading this in 2026 is which timescale applies to their career.

What survives: Chegg vs. Duolingo

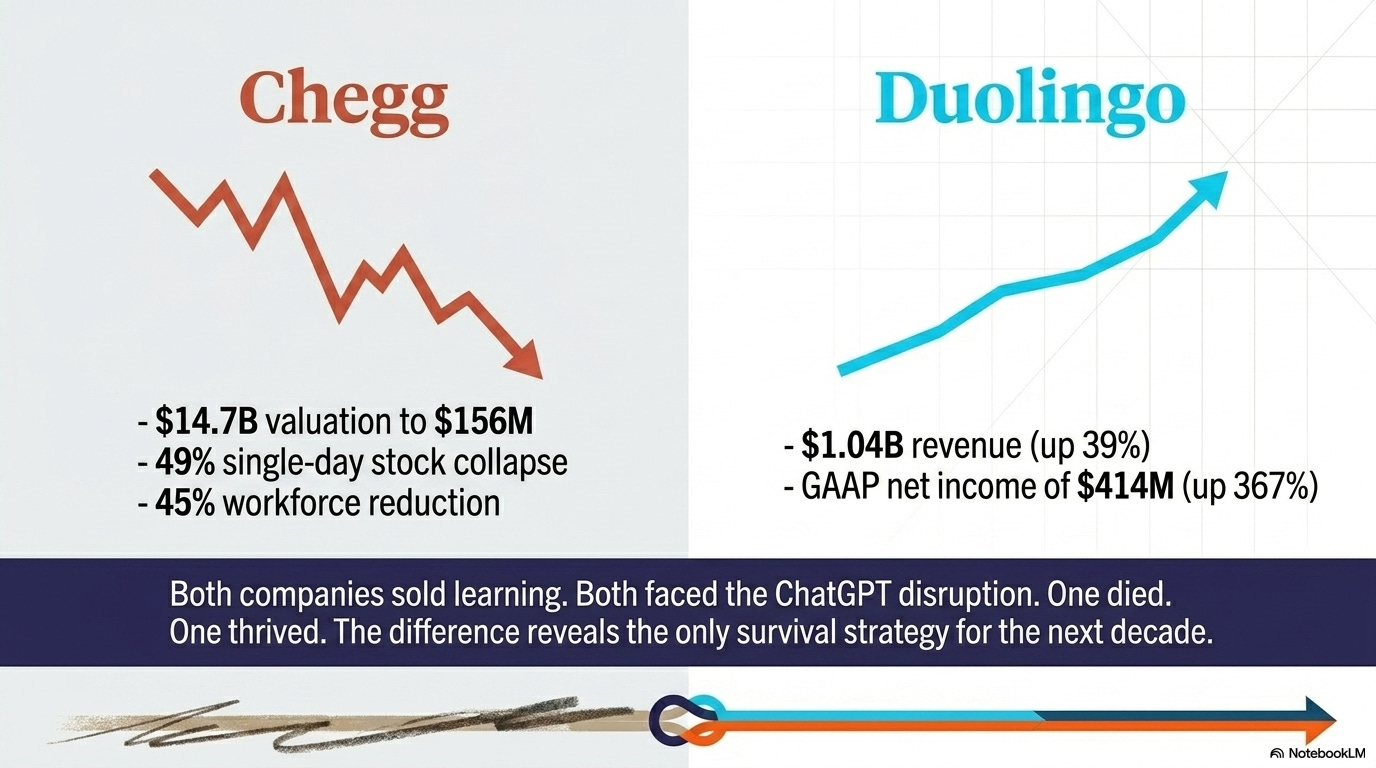

Same industry. Same technology. Same disruption. Opposite outcomes.

Chegg was worth $14.7 billion in February 2021. By 2026, it was trading at around $0.60 a share with a market cap of roughly $156 million and at risk of NYSE delisting. A 49% single-day stock collapse in May 2023 after the company acknowledged ChatGPT was affecting its business. A 45% workforce reduction in October 2025. Revenue down 49% year over year.

Duolingo reported $1.04 billion in revenue for 2025, up 39% year over year. 52.7 million daily active users. GAAP net income of $414 million, up 367%. CEO Luis von Ahn chose to invest $50 million in removing conversion friction, prioritizing growth over monetization. The company is targeting 100 million daily active users by 2028.

Both companies sold learning. Both faced the same AI disruption. One died. One thrived.

The difference is what they were actually selling. Chegg sold answers. When the cost of generating answers dropped to zero, Chegg had nothing left. Every dollar of Chegg’s revenue was built on information asymmetry. When ChatGPT eliminated that asymmetry, the entire business evaporated. There was no second thing.

Duolingo sold the experience of learning. The streak. The gamification. The social accountability. The identity of being someone who is learning a language. AI didn’t compete with that. AI made more of it possible. Duolingo used AI to generate more content, personalize more pathways, and create more engagement loops. The technology that killed Chegg fed Duolingo.

Chegg asked: what do we sell? Duolingo asked: why do people come to us?

Apply that to your own work. Map your daily tasks. What percentage is codified knowledge, meaning answers, templates, standard procedures, things that could be looked up or generated? That’s your Chegg percentage. And what percentage is tacit knowledge, meaning judgment calls, relationship context, creative problem-solving, things that require having been in the room? That’s your Duolingo percentage.
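If you want to run that mapping with actual numbers, here is a rough sketch in Python. Everything in it is an assumption for illustration: the `Task` structure, the `chegg_percentage` helper, and the sample week are invented, and the codified-or-tacit label on each task is a judgment call you make yourself.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    hours_per_week: float
    codified: bool  # True: could be looked up or generated. False: tacit.


def chegg_percentage(tasks: list[Task]) -> float:
    """Share of weekly hours spent on codified work, as a percentage."""
    total = sum(t.hours_per_week for t in tasks)
    if total == 0:
        raise ValueError("No hours logged")
    codified = sum(t.hours_per_week for t in tasks if t.codified)
    return 100 * codified / total


# A made-up 40-hour week, purely for illustration.
week = [
    Task("Status reports", 6, codified=True),
    Task("Data pulls and formatting", 10, codified=True),
    Task("Template-based proposals", 8, codified=True),
    Task("Client negotiation", 8, codified=False),
    Task("Diagnosing a novel failure", 8, codified=False),
]

chegg = chegg_percentage(week)
duolingo = 100 - chegg
print(f"Chegg percentage: {chegg:.0f}%, Duolingo percentage: {duolingo:.0f}%")
```

Weighting by hours rather than by task count is a deliberate choice here: one long codified task matters more than several short tacit ones.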

The Dallas Fed data makes this concrete. AI substitutes for entry-level workers doing codified work but augments experienced workers drawing on tacit knowledge. The workers being displaced aren’t losing their jobs because AI does everything better. They’re losing their jobs because AI does the codified part perfectly, and their jobs were mostly codified.

The codified-versus-tacit line is the clearest lens I’ve found for making sense of this moment. Chegg sold answers. When AI made answers free, Chegg evaporated. Duolingo sold the experience of becoming someone who learns. When AI made content generation cheap, Duolingo used it to build more of what AI couldn’t replicate. Same disruption. Opposite outcomes.

Map your own work against that divide and the picture gets uncomfortable fast. Most of us carry more codified weight than we’d like to admit.

The 250-year pattern says the jobs will come. The Engels’ Pause data says the transition will damage real people permanently. Both things are true. The question nobody’s answered yet is what the roadmap looks like for someone sitting in this transition right now, in 2026, trying to figure out which side of the line they’re on and what to do about it.

That’s what I’m tackling next.

In “The Fifth Revolution: A Roadmap for the People in the Middle,” I dig into the data on what actually works — starting with a Harvard/BCG experiment that tracked 758 consultants using AI, where a third of them got worse at their jobs, not better. Then four builders I met at HackKU this spring, each illustrating a different survival principle — including one who built a $1.8 billion company with two employees and one who changed an entire industry by putting on a mask.

And Japan, where the exact same robots that destroy American jobs create Japanese ones. Same technology. Opposite outcome. What explains the difference is a question worth asking.

The pattern has an ending. The roadmap is more specific than you’d expect.

References

Tier 0 -- academic/government data

Carlota Perez, “Technological Revolutions and Financial Capital” (Edward Elgar, 2002)

Oxford Economic Papers -- Slow Real Wage Growth in the Industrial Revolution

Dallas Fed -- Young Workers and AI-Exposed Occupations (Jan 2026)

NBER -- How Adaptable Are American Workers (Working Paper #34705)

Federal Reserve Bank of San Francisco -- AI Moment: Possibilities, Productivity, Policy (Feb 2026)

Bureau of Labor Statistics -- Employment Projections 2024-2034

Foreign Affairs -- Will Humans Go the Way of Horses? (Leontief/Brynjolfsson)

Tier 1 -- major research firms

McKinsey Global Institute -- What Can History Teach Us About Technology and Jobs

Brynjolfsson & Hitt -- IT and Organizational Change (Brookings, 2002)

Goldman Sachs -- Job Displacement, 40 Years of Data, and Scarring (Apr 2026)

Tier 3 -- industry/journalistic sources

Gallup -- Gen Z AI Adoption Steady, Skepticism Climbs (Apr 2026)

HBR -- How Gen Z Uses Gen AI and Why It Worries Them (Jan 2026)

Wharton/Fortune -- Gen Z Believes AI Making Colleagues Less Intelligent