Enterprise Architecture’s Billion-Dollar Paradox

The discipline has never been more funded. It has never mattered less.

Enterprise Architecture has a measurement problem that reveals something worse than failure. EA job titles are up, from 64% to 76% of organizations (Forrester). Named EA teams grew from 46% to 69%. The tools market crossed $1.35 billion. EA team abandonment hit an all-time low of 16%.

By every institutional metric, EA has never looked better.

Here’s the other dataset.

Only 19% of EA teams (per an EA tool vendor’s own survey of 500+ practitioners) say their mission is understood across the organization.

A significant share of digital and IT professionals don’t think architects add value (Forrester).

Gartner predicts 50% of EA teams will rebrand by 2028. Nobody rebrands something that’s working.

Growing investment and shrinking impact, simultaneously. That’s the paradox, and it’s unstable.

ELI5: Enterprise Architecture is supposed to be the discipline that makes sure all of a company’s technology systems work together and don’t create chaos. Think of it as the city planning department for corporate IT. The problem? The city is growing, the planning department is hiring, but nobody’s reading the plans.

The document trap

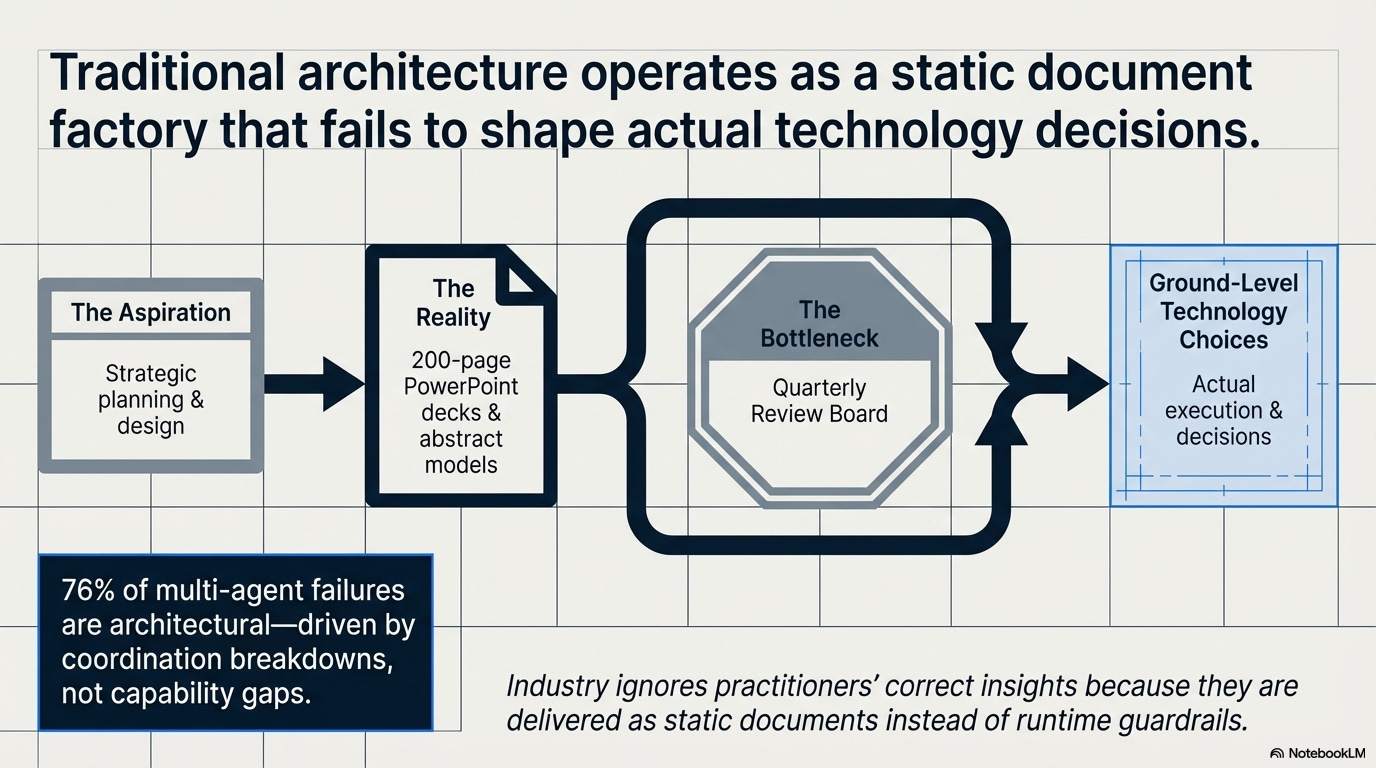

EA teams produce artifacts that don’t shape decisions. Eetu Niemi’s research identifies six structural failure modes where architecture content gets created but never consumed by the people who actually make technology choices. The function aspires to be strategic but operates at the “operational end of the spectrum,” maintaining models, updating diagrams, attending review boards that move slower than the systems they’re meant to govern.

This isn’t new. But the consequences are.

76% of multi-agent failures are architectural: coordination breakdowns, not capability gaps. Bounding what each agent can do is an EA principle. EA practitioners have preached dependency mapping for 20 years. The industry ignored them because EA delivered this insight as 200-page PowerPoint decks instead of runtime guardrails.

The governance functions EA claims to own? Other teams built them.

Security teams built Zero Trust. Compliance teams built policy engines. Platform teams built golden paths (pre-approved deployment configurations that encode architectural decisions so developers can’t make wrong choices accidentally). I traced this pattern in the Speakeasy Problem: governance emerging in unofficial channels because the official governance function was too slow. That was about shadow AI. It’s happening inside IT itself now.

McKinsey’s own paper on rethinking enterprise architecture for the agentic era is addressed entirely to CIOs and CTOs. Enterprise architects are never mentioned as actors.

In a paper about EA, the function doesn’t exist.

In practice: A platform engineering team at a mid-size insurer ships a “golden path” for deploying microservices: pre-approved configurations, automated compliance checks, infrastructure templates. Developers love it.

Nobody asks the EA team’s permission because nobody thought to. The EA team finds out three months later when they notice their reference architecture doesn’t match production. This isn’t a failure of coordination. It’s a failure of relevance.

What the money is actually buying

The EA tools market grew from roughly $200-250 million in 2018 to over $1.35 billion today, with projections to hit $1.86 billion by 2030. Organizations are spending more on architecture than ever. But tools don’t fix an organizational positioning problem.

McKinsey says agentic AI is “collapsing traditional IT planning horizons.” RAND data shows over 80% of AI projects fail to reach production, twice the rate of non-AI IT projects. Gartner predicts more than 40% of agentic AI projects will be canceled by 2027, and traditional AI governance misses 60-70% of the failure modes specific to autonomous agents.

The Stanford HAI 2026 AI Index puts a finer point on this: 362 documented AI incidents last year, up 56% from 2024. And the systems EA is supposed to govern are getting harder to see inside. The Foundation Model Transparency Index dropped from 58 to 40, with 80 of 95 major models released without training code. Document-based governance assumes you can read the documentation. Increasingly, there isn’t any.

This is the gap EA was designed to fill.

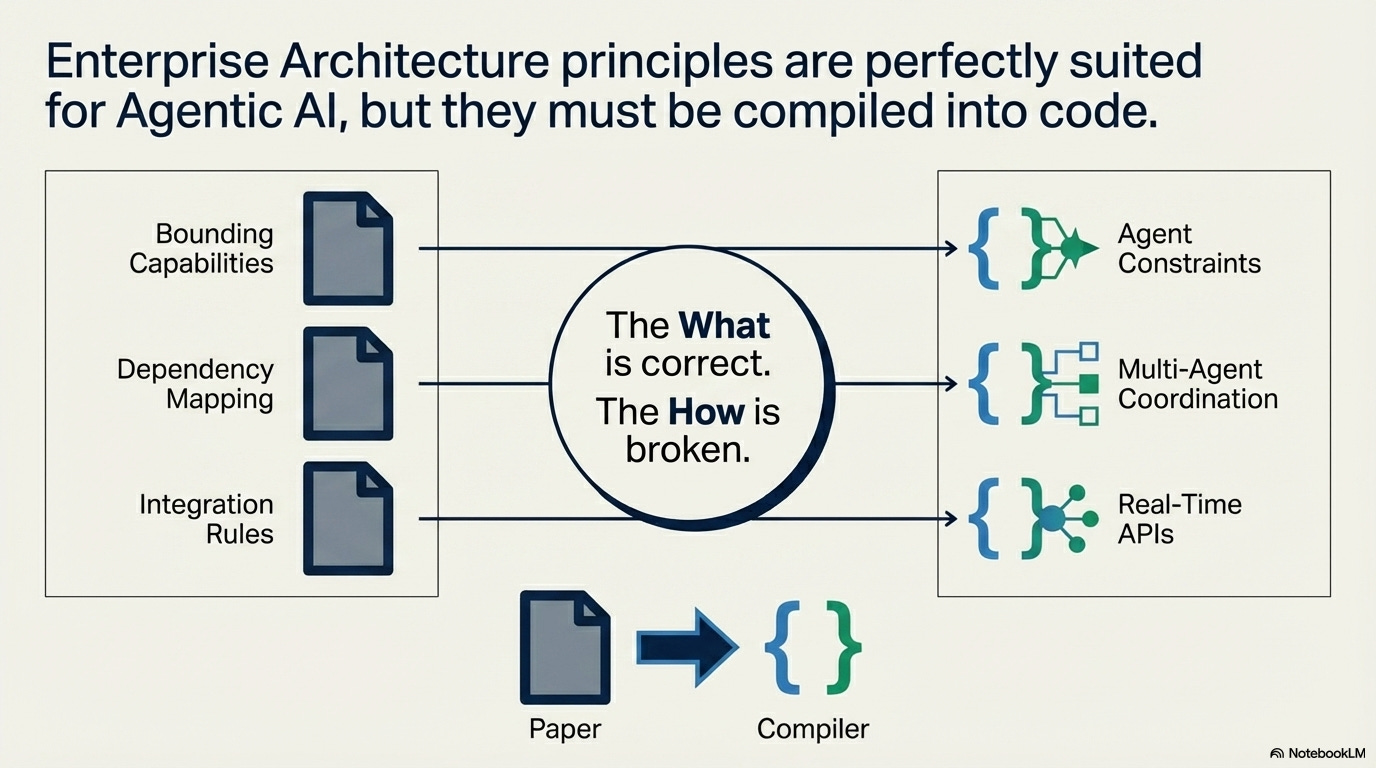

Dependency mapping.

Boundary definition.

Integration governance.

The principles are right. The delivery mechanism (quarterly review boards producing static documents) is simply wrong for systems that make autonomous decisions in milliseconds.

Platform providers are absorbing infrastructure layers every 12-18 months. EA’s architecture target moves faster than its review cycle. A quarterly architecture review board cannot govern a layer that gets absorbed mid-quarter.

ELI5: Imagine air traffic control reviewing flight paths once a quarter, on paper, while planes are landing every 90 seconds. The safety rules aren’t wrong. They’re just running at the wrong clock speed.

Somebody already solved this

Financial regulators faced this exact problem a decade ago. Algorithmic trading systems moved faster than human oversight could follow. The catastrophic failure case is well documented: in August 2012, Knight Capital lost $460 million in 45 minutes (per the SEC’s 2013 enforcement action) when dormant code activated and started executing trades with no human in the loop.

The regulatory response wasn’t to eliminate governance. MiFID II (the EU’s Markets in Financial Instruments Directive, which governs how trading firms operate) requires pre-trade validation controls, kill switches, circuit breakers, and real-time alerts within five seconds. The European Securities and Markets Authority’s February 2026 briefing extends this framework to AI-driven trading systems.
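To make those controls concrete, here is a minimal sketch of what a pre-trade gate with a kill switch and a simple circuit breaker might look like. It illustrates the pattern only; it is not how MiFID II specifies the controls or how any real trading venue implements them. The `TradingGate` class, its limits, and the alert strings are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Order:
    symbol: str
    quantity: int
    price: float


@dataclass
class TradingGate:
    """Hypothetical pre-trade gate: every order must pass through submit()."""
    max_order_value: float   # assumed pre-trade validation limit
    max_rejects: int         # circuit breaker trips after this many rejects
    killed: bool = False     # kill switch state
    rejects: int = 0
    alerts: list = field(default_factory=list)

    def kill(self, reason: str) -> None:
        """Kill switch: block all further orders until a human resets it."""
        self.killed = True
        self.alerts.append(f"KILL: {reason}")

    def submit(self, order: Order) -> bool:
        """Return True if the order may go to market, False if it is blocked."""
        if self.killed:
            return False
        if order.quantity * order.price > self.max_order_value:
            self.rejects += 1
            self.alerts.append(f"REJECT: {order.symbol} exceeds value limit")
            if self.rejects >= self.max_rejects:
                self.kill("too many rejected orders")
            return False
        return True


gate = TradingGate(max_order_value=100_000, max_rejects=2)
results = [
    gate.submit(Order("ABC", 100, 50.0)),     # 5,000: allowed
    gate.submit(Order("ABC", 10_000, 50.0)),  # 500,000: rejected
    gate.submit(Order("ABC", 10_000, 50.0)),  # rejected, trips the breaker
    gate.submit(Order("ABC", 100, 50.0)),     # blocked: the gate is killed
]
print(results)
```

The last order is perfectly valid and still gets blocked. The check runs on every order, in the order path, not in a meeting afterward.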

The governance function survived by becoming as fast as the thing it governs.

The same pattern is now showing up in AI regulation.

Stanford HAI counts 47 countries with active AI legislation but only 12 with enforcement mechanisms. 156 enforcement actions in 2025, up 263% from the prior year.

Governance on paper, enforcement catching up.

That’s eerily similar to EA’s problem at national scale: the policies exist, the runtime enforcement doesn’t.

EA faces the same challenge with AI agents. Governance can’t keep up if it stays manual.

EA needs to speed up by becoming machine-readable.

Platform engineering is already doing this, encoding architectural decisions as code and making “non-compliant deployments technologically impossible.” Zachman-FEAC (one of the original EA framework bodies, now applying that lens to AI) frames it directly:

“Governance is no longer a board meeting. It is telemetry.”

Researchers are building Computational Governance Agents that continuously monitor and enforce architectural policies in real time.

The declarative vs. programmable infrastructure decision I wrote about in the Terraform analysis is an EA-level call: it determines the flexibility-safety tradeoff of your entire infrastructure layer.

TOGAF and Zachman have no vocabulary for it. They can model data flows but can’t express “this layer should say what it wants, not how to build it.”

The absence of that vocabulary is why platform teams make these decisions without EA.

In practice: A financial services firm’s EA team defines a policy: “No agent may execute trades exceeding $50K without human approval.” In the document era, this lives in a governance PDF that traders may or may not have read. In the compiled-EA model, this becomes a constraint enforced at the coordination layer between agents, and the agent literally cannot bypass it. Same principle. The effectiveness is not comparable.
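A rough sketch of that compiled policy, assuming a single coordination function that every agent's trade has to pass through. The names (`TradeRequest`, `route_trade`, `ApprovalRequired`) are hypothetical, and a real system would need authenticated approvals and audit logging that this example leaves out.

```python
from dataclasses import dataclass
from typing import Optional

APPROVAL_THRESHOLD_USD = 50_000  # the $50K limit from the policy above


class ApprovalRequired(Exception):
    """Raised when a trade exceeds the threshold without human sign-off."""


@dataclass(frozen=True)
class TradeRequest:
    agent_id: str
    notional_usd: float
    approved_by: Optional[str] = None  # hypothetical ID of the human approver


def route_trade(request: TradeRequest) -> str:
    """Coordination-layer entry point (assumed): agents cannot trade around it."""
    needs_approval = request.notional_usd > APPROVAL_THRESHOLD_USD
    if needs_approval and not request.approved_by:
        raise ApprovalRequired(
            f"{request.agent_id}: {request.notional_usd:.0f} USD needs human approval"
        )
    return f"executed {request.notional_usd:.0f} USD for {request.agent_id}"


print(route_trade(TradeRequest("agent-7", 20_000.0)))
print(route_trade(TradeRequest("agent-7", 75_000.0, approved_by="j.doe")))
try:
    route_trade(TradeRequest("agent-7", 75_000.0))
except ApprovalRequired as err:
    print(f"blocked: {err}")
```

The policy text and the enforcement are now the same artifact: change the constant and the behavior changes with it.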

The uncomfortable part

Every enterprise architect who writes “EA is broken” is also offering to be the one who fixes it. Including me.

The management consulting market is $374.67 billion. Every “EA needs reinvention” article creates demand for the reinvented version, the one the author conveniently offers.

Forrester’s bullish EA narrative supports its advisory practice revenue. Gartner’s “50% will rebrand” prediction creates urgency for advisory engagements. EA tool vendors need EA to be essential, and they need it to require better tools.

What I can say: the governance vacuum is real.

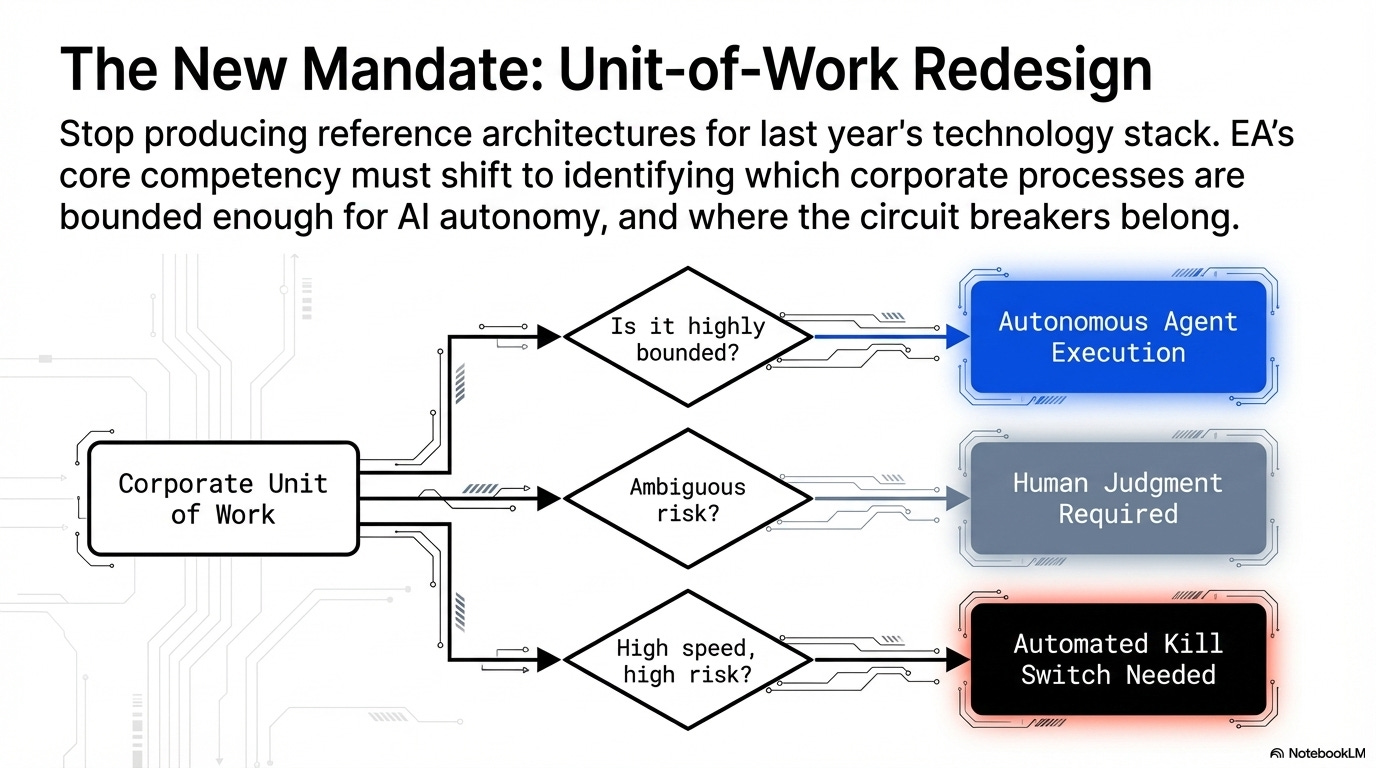

The 80% AI project failure rate and the predicted 40% agentic project cancellations aren’t fabricated. Somebody needs to own the work of mapping dependencies, defining boundaries, and enforcing integration rules for systems that make autonomous decisions. Whether that somebody is called an enterprise architect or a platform engineer matters less than whether the function operates at the speed of the thing it governs.

If EA can’t make that transition, somebody else will. In a lot of organizations, somebody else already has.

What this means for leaders

The principles EA has championed for decades are exactly what agentic AI systems need. But those principles must be compiled: turned from static documents into constraints that execute at the speed of the systems they govern.

Three things to do, in order:

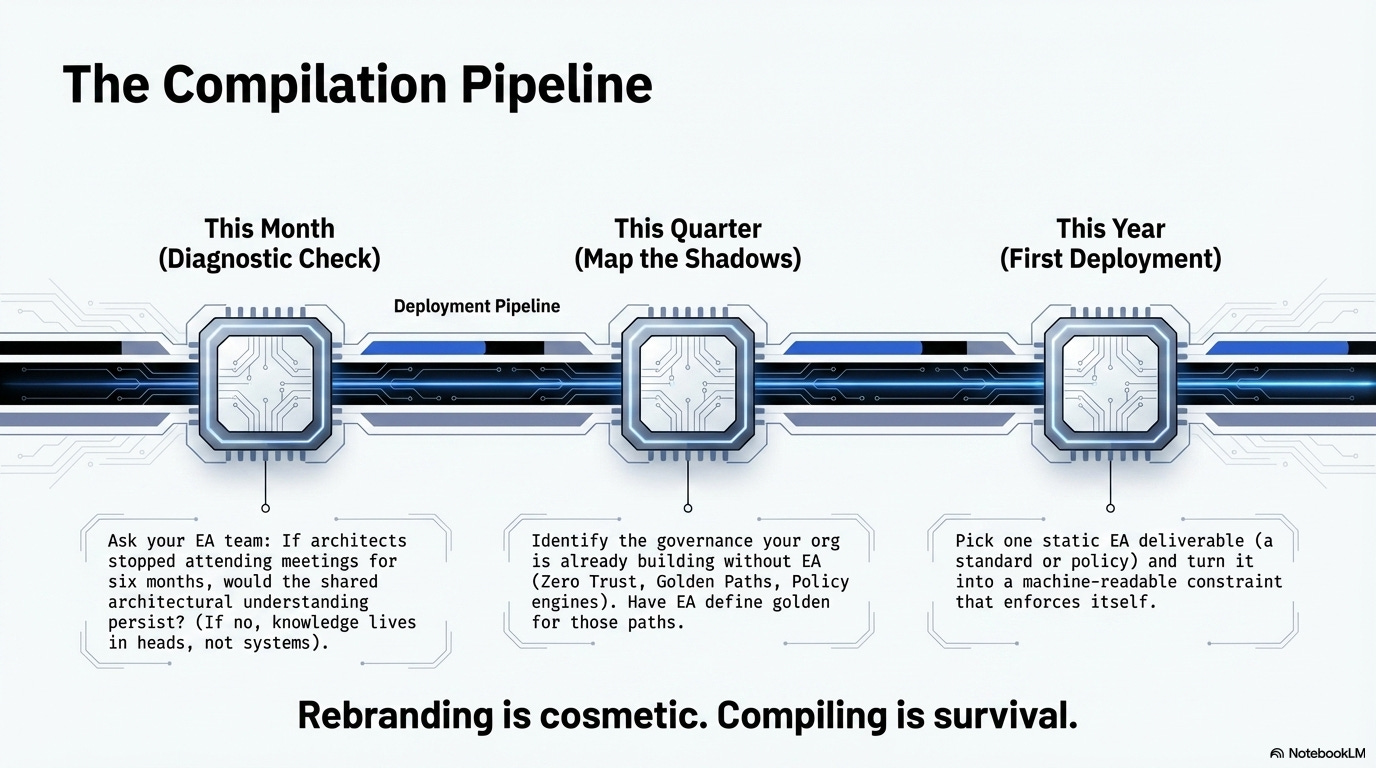

This month: Ask your EA team one question: “If architects stopped attending meetings for six months, would the shared architectural understanding persist?” If the answer is no, architectural knowledge lives in people’s heads, not in systems. That’s the gap.

This quarter: Find the governance your organization is already building without EA. Golden paths. Policy engines. Zero Trust architecture. Compliance-as-code. These are EA functions being performed by other teams. The choice is whether EA defines what “golden” means in those golden paths, or gets defined out of relevance.

This year: Pick one EA deliverable (a reference architecture, a standards document, a governance policy) and turn it into a machine-readable constraint that enforces itself. Not a diagram someone reviews. A rule that a system executes. InfoQ’s framing is right: “The conformant path should be the easiest path.”
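As a rough illustration of what that conversion could look like, here is a sketch that expresses a standards document as a list of named checks and runs them against a deployment manifest before anything ships. The three rules, the manifest fields, and the approved runtime list are invented for the example; your own standards would supply the real ones.

```python
# Hypothetical list of runtimes the standards document approves.
APPROVED_RUNTIMES = {"python3.12", "java21"}

# Each standard is a (name, check) pair; a check takes a manifest dict
# and returns True when the manifest conforms.
RULES = [
    ("has an owning team", lambda m: bool(m.get("owner"))),
    ("uses an approved runtime", lambda m: m.get("runtime") in APPROVED_RUNTIMES),
    ("encrypts data at rest", lambda m: m.get("encryption_at_rest") is True),
]


def violations(manifest: dict) -> list:
    """Return the names of every rule the manifest fails."""
    return [name for name, check in RULES if not check(manifest)]


def admit(manifest: dict) -> None:
    """Deployment gate (assumed): refuse any manifest that breaks a rule."""
    failed = violations(manifest)
    if failed:
        raise ValueError("deployment refused: " + "; ".join(failed))


conformant = {"owner": "claims-platform", "runtime": "java21", "encryption_at_rest": True}
drifted = {"owner": "", "runtime": "java21", "encryption_at_rest": False}

admit(conformant)           # passes silently
print(violations(drifted))  # lists the two rules this manifest breaks
```

A gate like this would typically run in the deployment pipeline, so the conformant path takes no extra effort and the non-conformant one simply doesn't deploy.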

In practice: EA should be identifying which units of work to redesign for AI agents: which processes are bounded enough for autonomous execution, which require human judgment, which need kill switches. Instead, most EA teams are producing reference architectures for last year’s technology stack while platform teams do the actual redesign.

Gartner predicts 50% of EA teams will rebrand by 2028.

Rebranding is cosmetic.

The real question is whether EA compiles its principles into the runtime governance layer, or watches while every other function builds that layer without them.

What’s the honest diagnostic for EA in your organization: winning, irrelevant, or somewhere in between? I’m curious whether the paradox I’m describing matches what you’re seeing.

References: