The Speakeasy Problem: Why Banning AI at Work Guarantees You Lose Control of It

900 million people use ChatGPT every week. Your employees are among them, with or without your permission.

Those 900 million weekly users amount to more than 10% of the global population, one of the fastest consumer technology adoptions on record. Yet only 38% of US employees say their employer has integrated AI into their workflows.

That gap isn’t a staffing problem or a budget problem. It’s a policy failure producing exactly the outcome it was designed to prevent.

I covered the what in “Secret Cyborgs Are Already Running Your Company.” This is the why, and the only exit that actually works.

Consumer adoption is an enterprise roadmap with a 12-month delay

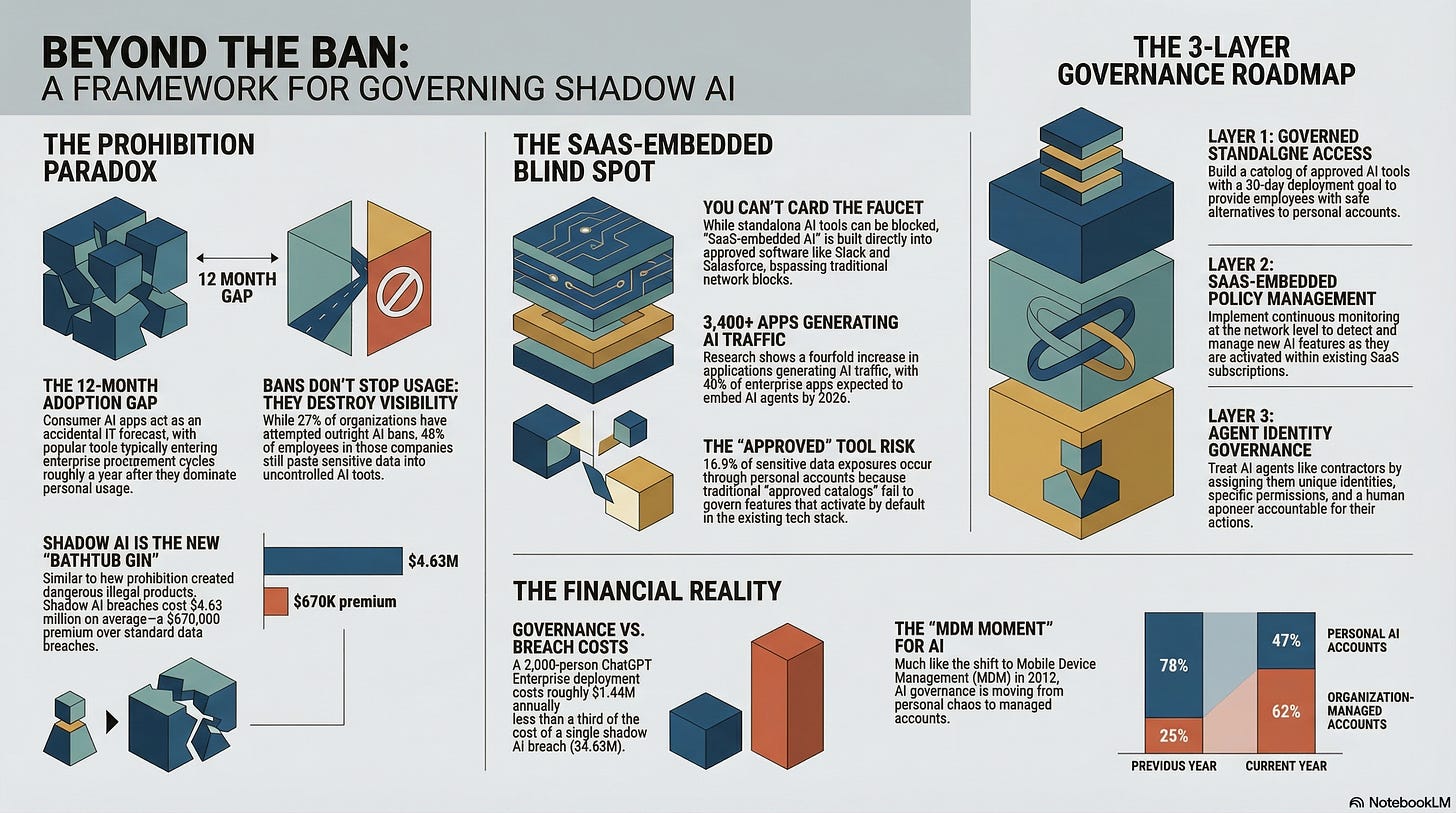

The a16z Top 100 consumer AI apps list has become an accidental enterprise IT forecast. Tools that dominate consumer usage (ChatGPT, Claude, Notion AI, coding assistants) tend to show up in enterprise procurement cycles roughly a year later. It’s not a guaranteed rule, but it’s consistent enough to plan around. Notion AI’s attach rate surged from 20% to over 50% in a single year, and Claude’s paid subscriptions grew 200% year-over-year.

Employees adopt consumer AI tools at home, bring them to work, IT discovers the usage, and the organization scrambles to respond. Harmonic Security tracked 665 distinct AI tools running inside enterprise environments. Not 6. Not 60. Six hundred and sixty-five.

ELI5: Think of the a16z consumer AI list like a restaurant that’s packed every night. Within a year, a franchise version opens in every office park. The consumer version proves demand; the enterprise version plays catch-up.

What matters is how organizations respond.

Prohibition created speakeasies. AI bans create shadow AI.

When the United States banned alcohol in 1920, speakeasies outnumbered the saloons they replaced by two to one. Enforcement didn’t reduce consumption. It pushed usage underground, created more dangerous products (bathtub gin killed people), enriched criminal networks, and corrupted the institutions meant to enforce the law. I am, however, a fan of modern speakeasies.

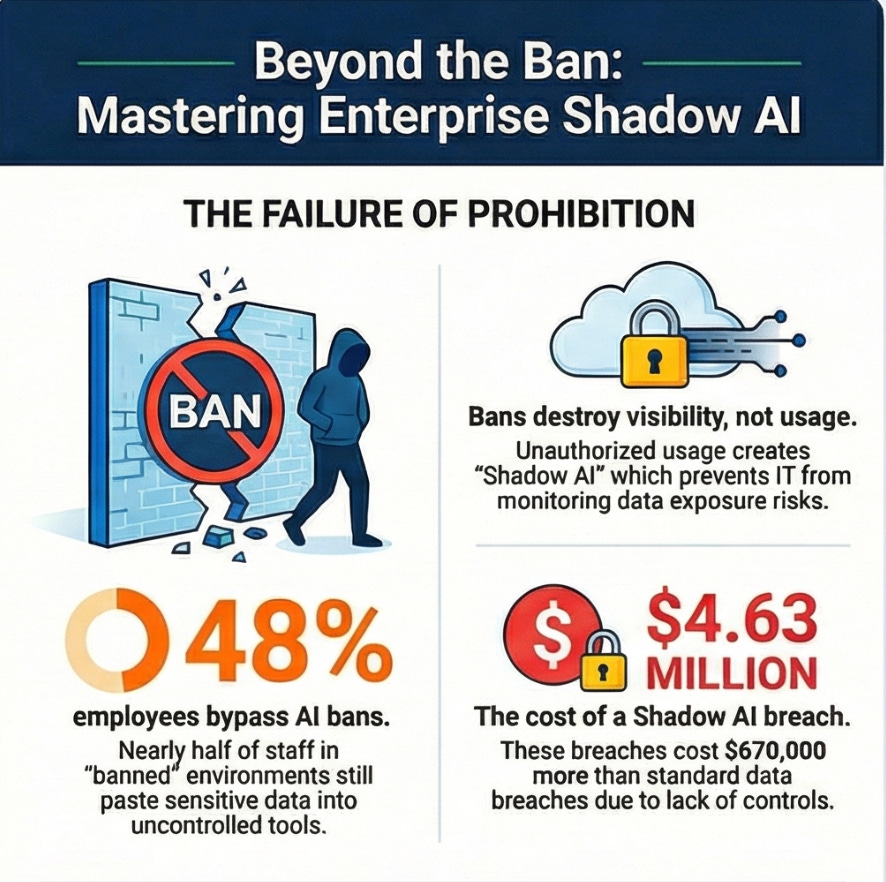

The parallel to enterprise AI bans is almost exact. Twenty-seven percent of organizations have tried outright AI bans. Among those organizations, 48% of employees still paste sensitive data into uncontrolled AI tools.

The ban didn’t stop usage. It destroyed visibility.

IBM’s 2025 Cost of a Data Breach Report quantified the damage: breaches involving shadow AI cost $4.63 million on average, a $670,000 premium over standard breaches. And 97% of organizations that experienced AI-related breaches lacked proper AI access controls. They weren’t breached because AI is inherently dangerous. They were breached because prohibition weakened the governance layer.

In practice: A lawyer pastes a client’s contract into free-tier ChatGPT to summarize key terms. Thirty seconds of work, zero IT visibility, potential privilege waiver. A hospital’s billing team uses an unapproved AI tool to appeal insurance denials. Fifty-seven percent of healthcare professionals report encountering unauthorized AI in their workflows. A retail chain’s merchandising analyst uploads proprietary sales data to a personal AI account to build forecasts. In every case, the employee is trying to do their job faster. The organization just made it impossible to do that safely.

Harmonic Security found that 16.9% of sensitive data exposures — roughly 98,000 instances — occurred through personal free-tier AI accounts. Not enterprise tools with audit logs.

Personal accounts with no data retention policies, no access controls, and no way for IT to even know it happened.

The “approved catalog” is already obsolete

The obvious response to shadow AI is a catalog of pre-approved tools. Give people a sanctioned option and they’ll stop using the unsanctioned ones.

That logic has worked. In healthcare, one health system provided approved AI alternatives and saw unauthorized usage drop significantly.

But catalogs only govern standalone AI tools. The problem has already moved past them.

Gartner projects that 40% of enterprise applications will embed task-specific AI agents by the end of 2026, up from less than 5% in 2025.

Zscaler found that 3,400+ applications now generate AI traffic, a fourfold increase.

Salesforce has Einstein. Microsoft has Copilot baked into Office. Slack, Workday, Notion, Jira, ServiceNow: nearly every SaaS tool in the stack is embedding AI features, many of which activate by default.

ELI5: Approving standalone AI tools is like carding people at the front door of a bar. SaaS-embedded AI (AI features built directly into software your company already approved) is alcohol showing up pre-mixed in the water supply. You can’t card the faucet.

Zscaler reports that enterprises blocked 39% of AI access attempts, but those blocks targeted standalone tools. The AI embedded inside their already-approved SaaS stack sailed right through. Front door locked, walls made of glass.

In practice: An HR team’s approved recruiting platform ships an AI screening feature in a Tuesday update. No procurement review, no security assessment, no policy check. A finance team’s forecasting tool adds an “AI insights” tab that sends anonymized (but reconstructible) data to a third-party model. A customer service platform embeds an AI agent that auto-responds to tickets using data from the company’s knowledge base. All three are “approved” tools. None of the AI features inside them were approved.

The MDM moment for AI

The transition from chaos to governance is already happening. Netskope reports that personal AI account usage dropped from 78% to 47% in one year, while organization-managed accounts climbed from 25% to 62%.

It’s the same shift that played out with BYOD (bring your own device). Intel popularized the term in 2009, when employees started bringing personal smartphones to work. IT banned them, mobile device management (MDM) solutions emerged by 2012, and by 2018 only 17% of enterprises still provided phones to all employees.

AI is following the same arc, faster.

ELI5: MDM let IT say “fine, bring your phone, but we manage the work apps on it.” We need the same deal for AI: use it, but through a governed channel where we can see what’s happening.

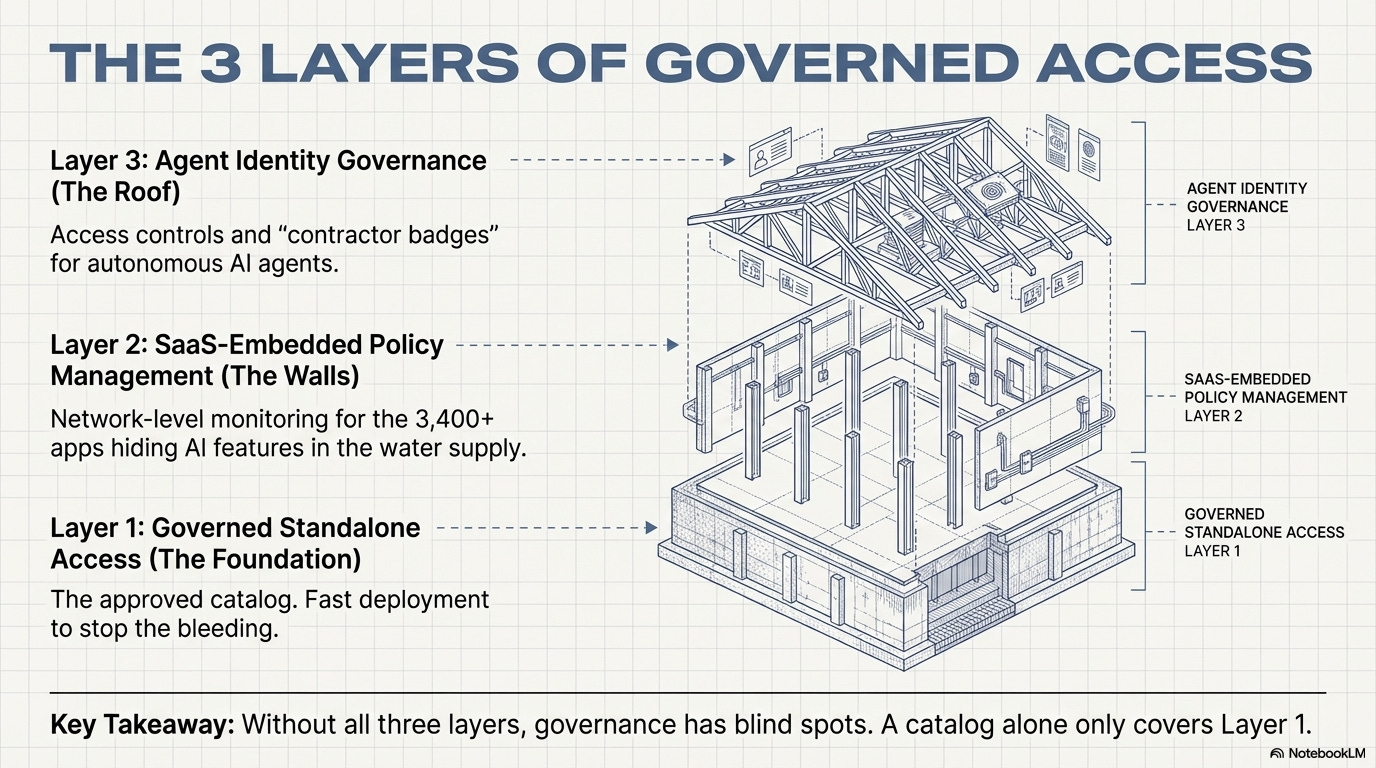

Governed access requires three layers, not one:

Layer 1: Governed standalone access. Build the catalog of approved AI tools, but deploy it in 30 days, not over a 12-month procurement cycle. Goldman Sachs made its multi-model AI assistant available to all 46,000+ employees in mid-2025 and now sees over a million prompts per month. At roughly $60 per user per month for ChatGPT Enterprise, a 2,000-person deployment runs about $1.44 million annually. That’s real money.

It’s also less than a third of what one shadow AI breach costs.
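The Layer 1 math is easy to check. The sketch below reproduces it using only the figures quoted above. The roughly $60 seat price and the 2,000-person headcount are the article’s illustrative assumptions, not a vendor quote, so substitute your own numbers.

```python
# Back-of-envelope comparison using the figures quoted in this article.
# SEAT_PRICE_PER_MONTH is a rough estimate for ChatGPT Enterprise, not an
# official list price; HEADCOUNT is an illustrative deployment size.
SEAT_PRICE_PER_MONTH = 60          # USD per user per month (approximate)
HEADCOUNT = 2_000                  # illustrative mid-size deployment
SHADOW_AI_BREACH_COST = 4_630_000  # IBM 2025 average for shadow AI breaches
SHADOW_AI_PREMIUM = 670_000        # premium over a standard breach


def annual_license_cost(seat_price: float, users: int) -> float:
    """Total yearly spend for a flat per-seat license."""
    return seat_price * users * 12


annual = annual_license_cost(SEAT_PRICE_PER_MONTH, HEADCOUNT)
ratio = annual / SHADOW_AI_BREACH_COST
standard_breach = SHADOW_AI_BREACH_COST - SHADOW_AI_PREMIUM

print(f"Annual licensing: ${annual:,.0f}")                       # $1,440,000
print(f"Share of one shadow AI breach: {ratio:.0%}")              # 31%
print(f"Implied standard breach cost: ${standard_breach:,.0f}")   # $3,960,000
```

At about 31% of a single shadow AI breach, the license bill clears the “less than a third” bar, though not by a wide margin. A larger headcount or a higher negotiated seat price would close that gap quickly.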

Layer 2: SaaS-embedded AI policy management. The catalog doesn’t cover the 3,400+ apps generating AI traffic inside the stack. This is the Zscaler/Netskope layer — continuous monitoring of AI features activating within approved SaaS tools, with policy enforcement at the network level.

If Salesforce ships a new AI feature, IT needs to know about it before employees start feeding it customer data.
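Here is what “knowing before employees feed it data” might look like. This is a minimal sketch, assuming a monitoring tool can report which AI features are in use per app and that security keeps a list of the features it has reviewed. The function, data shapes, and feature names are hypothetical illustrations, not a Zscaler or Netskope API.

```python
from __future__ import annotations

# Hypothetical data shapes for illustration only (not a vendor API).
# `reviewed`: AI features security has signed off on, per approved app.
# `observed`: AI features a monitoring tool reports seeing in live traffic.


def unreviewed_ai_features(
    reviewed: dict[str, set[str]],
    observed: dict[str, set[str]],
) -> dict[str, set[str]]:
    """Return, per app, the AI features seen in use that nobody has reviewed."""
    findings: dict[str, set[str]] = {}
    for app, features in observed.items():
        # An app missing from the review inventory counts as zero reviewed features.
        new = features - reviewed.get(app, set())
        if new:
            findings[app] = new
    return findings


reviewed = {
    "Salesforce": {"einstein_summaries"},
    "Workday": set(),
}
observed = {
    "Salesforce": {"einstein_summaries", "einstein_agent"},  # feature shipped in an update
    "Workday": {"ai_screening"},
    "Jira": set(),  # no AI traffic seen
}
print(unreviewed_ai_features(reviewed, observed))
# {'Salesforce': {'einstein_agent'}, 'Workday': {'ai_screening'}}
```

The check keys on features, not apps. An app being approved says nothing about the AI features it ships in next Tuesday’s update.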

Layer 3: Agent identity governance. AI agents are coming. They’ll book meetings, process invoices, triage support tickets, and access sensitive systems. Microsoft is extending enterprise identity governance to AI agents through Microsoft Agent 365, going GA on May 1, 2026. That covers conditional access policies, lifecycle management, and a human sponsor accountable for what each agent does.

ELI5: Think of agent identity like a contractor badge system. Every AI agent operating in the enterprise needs a badge (identity), a list of rooms it can access (permissions), and a full-time employee who signed for it (sponsor). No badge, no access.
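The badge analogy maps onto a small data model. This hypothetical sketch of the access rule assumes each agent carries an identity, a permission set, a named sponsor, and an expiry date that stands in for lifecycle management. It is not the Microsoft Agent 365 API, and every name in it is illustrative.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date

# Hypothetical model of the "contractor badge" analogy. This is NOT the
# Microsoft Agent 365 API; every name and field here is illustrative.


@dataclass(frozen=True)
class AgentBadge:
    agent_id: str                # identity: who the agent is
    permissions: frozenset[str]  # the "rooms" the agent may enter
    sponsor: str | None          # accountable full-time employee, if any
    expires: date                # lifecycle: badges lapse unless renewed


def can_access(badge: AgentBadge | None, resource: str, today: date) -> bool:
    """Deny by default: no badge, no sponsor, or an expired badge means no access."""
    if badge is None or badge.sponsor is None:
        return False
    if today > badge.expires:
        return False
    return resource in badge.permissions


invoice_bot = AgentBadge(
    agent_id="invoice-bot",
    permissions=frozenset({"erp:invoices"}),
    sponsor="j.doe",
    expires=date(2026, 12, 31),
)
print(can_access(invoice_bot, "erp:invoices", date(2026, 6, 1)))  # True
print(can_access(invoice_bot, "hr:payroll", date(2026, 6, 1)))    # False
print(can_access(None, "erp:invoices", date(2026, 6, 1)))         # False
```

Denying access whenever any element is missing mirrors “no badge, no access.” Real conditional access policies also weigh context such as device or location, which this sketch omits.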

Without all three layers, governance has blind spots. A catalog alone is a great start, but it covers only Layer 1.

Most enterprises haven’t started on Layers 2 and 3, and Layer 3’s governance infrastructure doesn’t exist yet for most organizations. The agents will arrive before the controls do, which is exactly how shadow AI started.

To be fair, there’s a scale problem baked into this prescription.

Goldman Sachs built a firewalled, multi-model AI assistant and made it available to 46,000 employees. But Goldman has a dedicated AI engineering team, an effectively unlimited technology budget, and its CIO, Marco Argenti, running the architecture.

The three-layer model assumes budget for enterprise AI licensing, continuous SaaS monitoring tooling, and agent identity management simultaneously.

Most mid-market enterprises — the ones where shadow AI risk is highest — don’t have the headcount, the vendor leverage, or the budget to stand up all three layers at once.

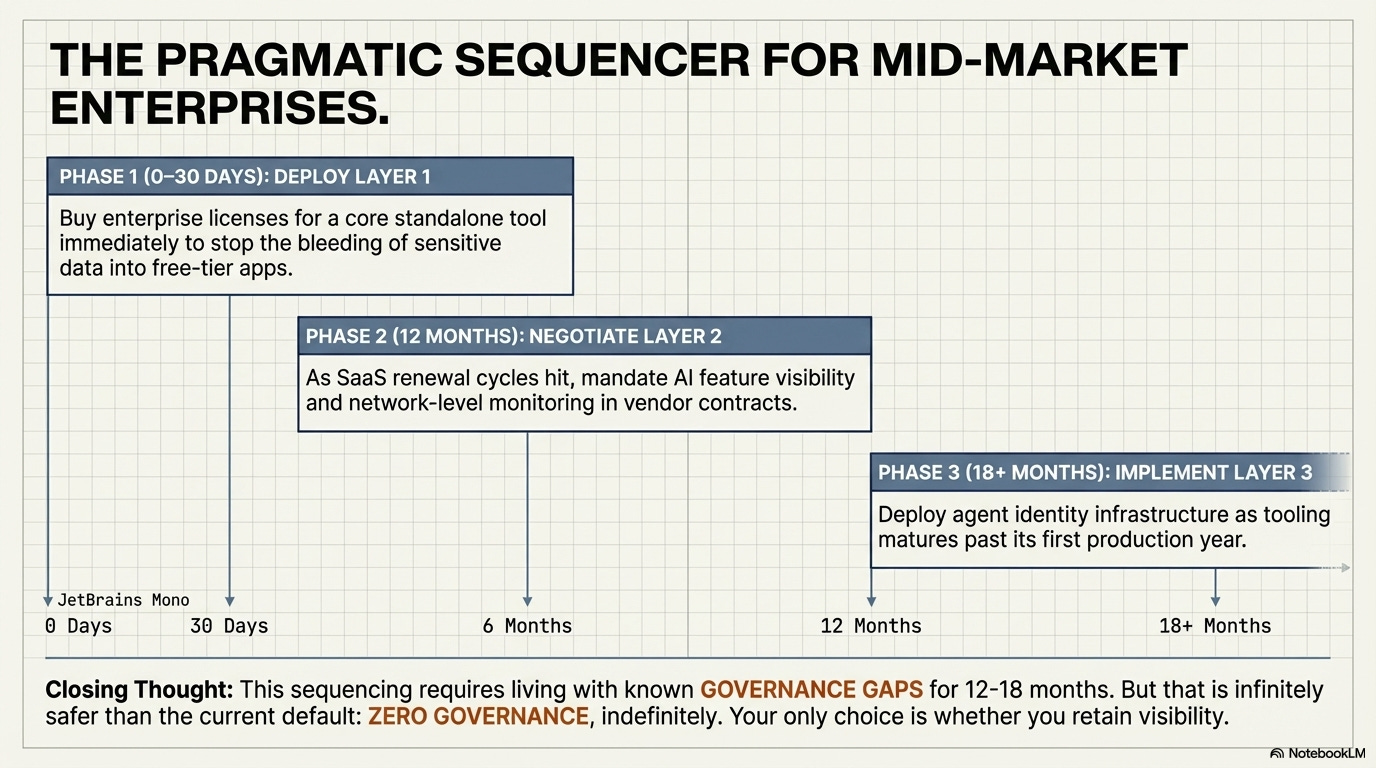

The honest sequencing is: Layer 1 first (get people a governed tool within 30 days), Layer 2 when the next SaaS renewal cycle hits (negotiate AI feature visibility into your contracts), and Layer 3 when the tooling matures past its first production year.

That means living with governance gaps for 12-18 months. Still better than the current default: zero governance, indefinitely.

Your employees already made the decision to use AI at work. The only question left is whether you’ll have any visibility into how.

References: