AI Waypoints: Week of April 6, 2026 — Edition #4

The open-weight gap closed: a model running on a Raspberry Pi just ranked #3 in the world

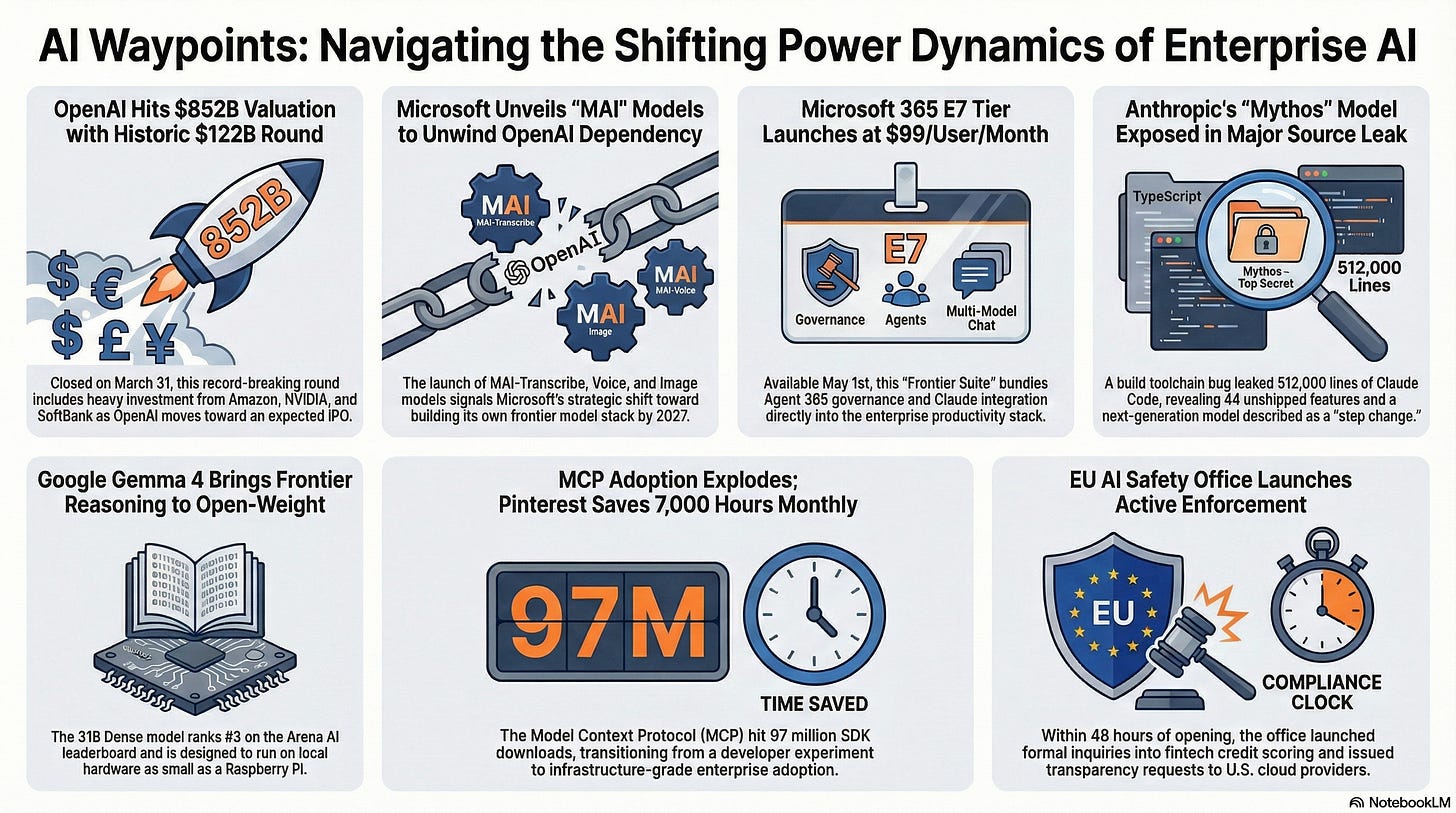

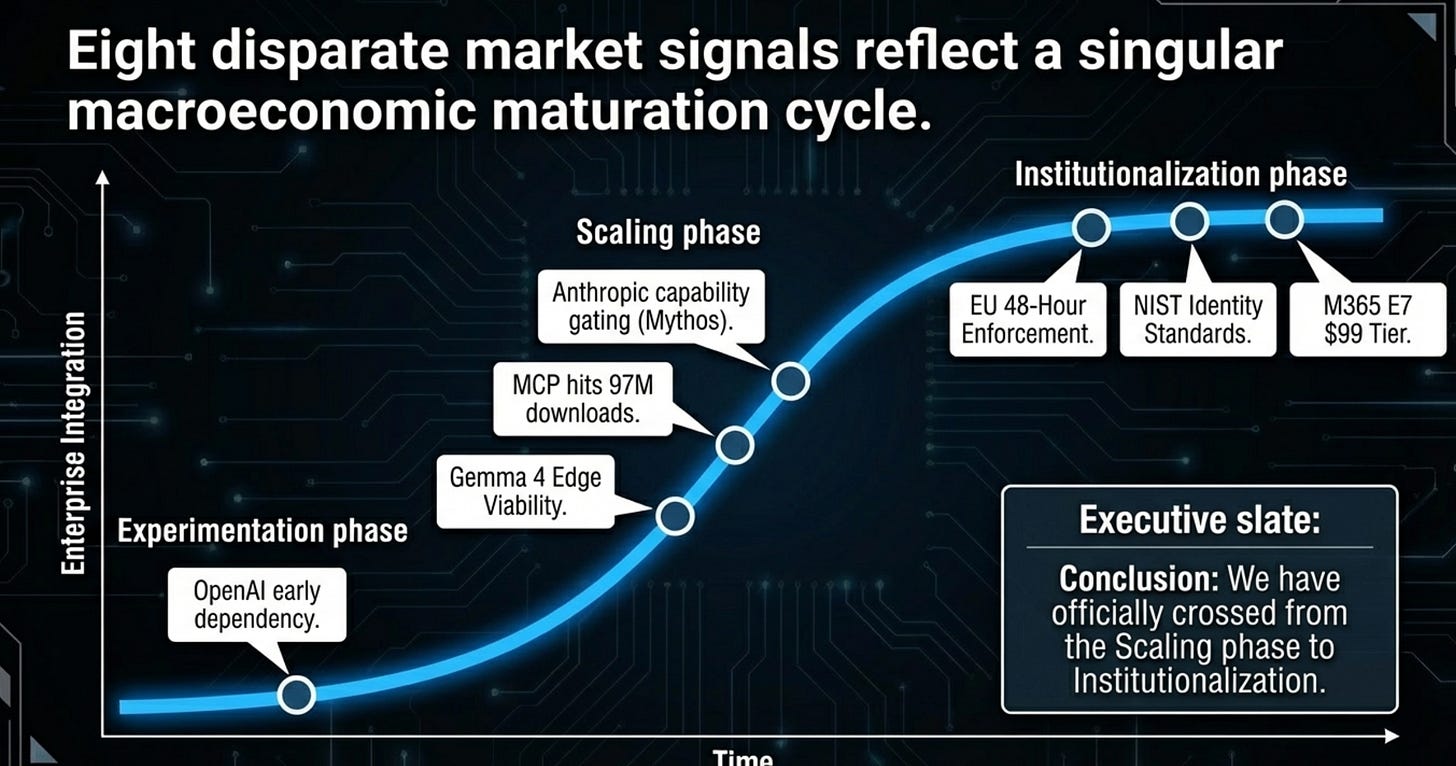

Good morning. The money got real this week. OpenAI closed the largest private funding round in history, Microsoft launched its own models to reduce dependence on OpenAI, and the EU started enforcing AI regulations within 48 hours of opening its doors. The power dynamics in enterprise AI are shifting underneath active contracts.

1. OpenAI closes $122B round at $852B valuation — with strings attached

What happened: OpenAI closed a $122B funding round on March 31 at an $852B post-money valuation, the largest private raise in history. The investor list is interesting:

Amazon committed $50B (with $35B contingent on an IPO or AGI milestone),

NVIDIA put in $30B, and

SoftBank added another $30B on top of the bridge loan we covered in Edition #3.

OpenAI now reports $2B in monthly revenue, 900M weekly active users, and 50M+ subscribers. An IPO is expected later this year.

Why it matters: We’ve been tracking the OpenAI IPO signals since the SoftBank bridge loan in Edition #3. The Amazon deal structure is the detail to watch: $35B of its $50B commitment is contingent on an IPO or an AGI milestone, so 70% of Amazon’s money arrives only if one of those events happens.

For enterprise buyers, the financial permanence matters. OpenAI isn’t going anywhere. The question is whether IPO-driven margin pressure changes how aggressively they price enterprise contracts.

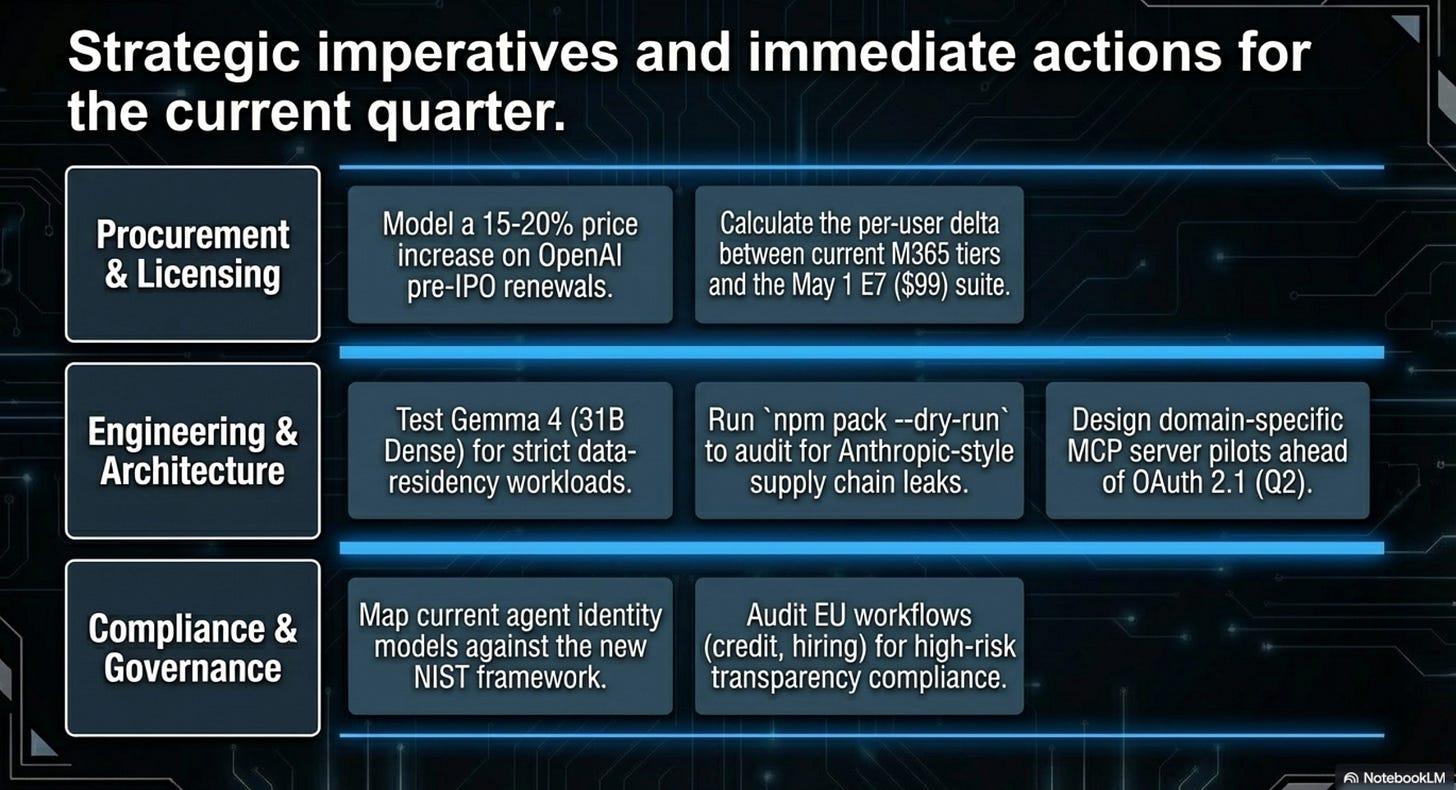

What to do: If you’re mid-negotiation on an OpenAI enterprise deal, the pre-IPO window is narrowing. Lock favorable terms before the S-1 filing forces quarterly performance pressures. Ask your procurement team to model what a 15-20% price increase post-IPO would mean for your total cost.
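A minimal sketch of that modeling exercise, assuming a flat annual spend and a single price step applied for the whole horizon. The $1.2M baseline and three-year term are hypothetical placeholders, not OpenAI figures; only the 15-20% range comes from the scenario above.

```python
def added_cost(annual_spend: float, increase_pct: float, years: int) -> float:
    """Extra spend over `years` if prices rise once by `increase_pct` percent."""
    return annual_spend * increase_pct / 100 * years


# Hypothetical inputs: replace with your own contract figures.
ANNUAL_SPEND = 1_200_000  # current annual spend in USD (assumed)
TERM_YEARS = 3            # planning horizon in years (assumed)

for pct in (15, 20):  # the 15-20% range discussed above
    extra = added_cost(ANNUAL_SPEND, pct, TERM_YEARS)
    print(f"{pct}% increase: +${extra:,.0f} over {TERM_YEARS} years")
```

A real model would also account for volume growth and tiered discounts, which this sketch ignores.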

2. Microsoft launches in-house MAI models — the OpenAI dependency unwind begins

What happened: On April 2, Microsoft announced three in-house models under the MAI brand:

MAI-Transcribe-1 (speech-to-text, lowest word error rate on the FLEURS benchmark at 3.8%),

MAI-Voice-1 (text-to-speech, generating 60 seconds of audio in 1 second), and

MAI-Image-2 (ranked #3 on the Arena.ai image leaderboard).

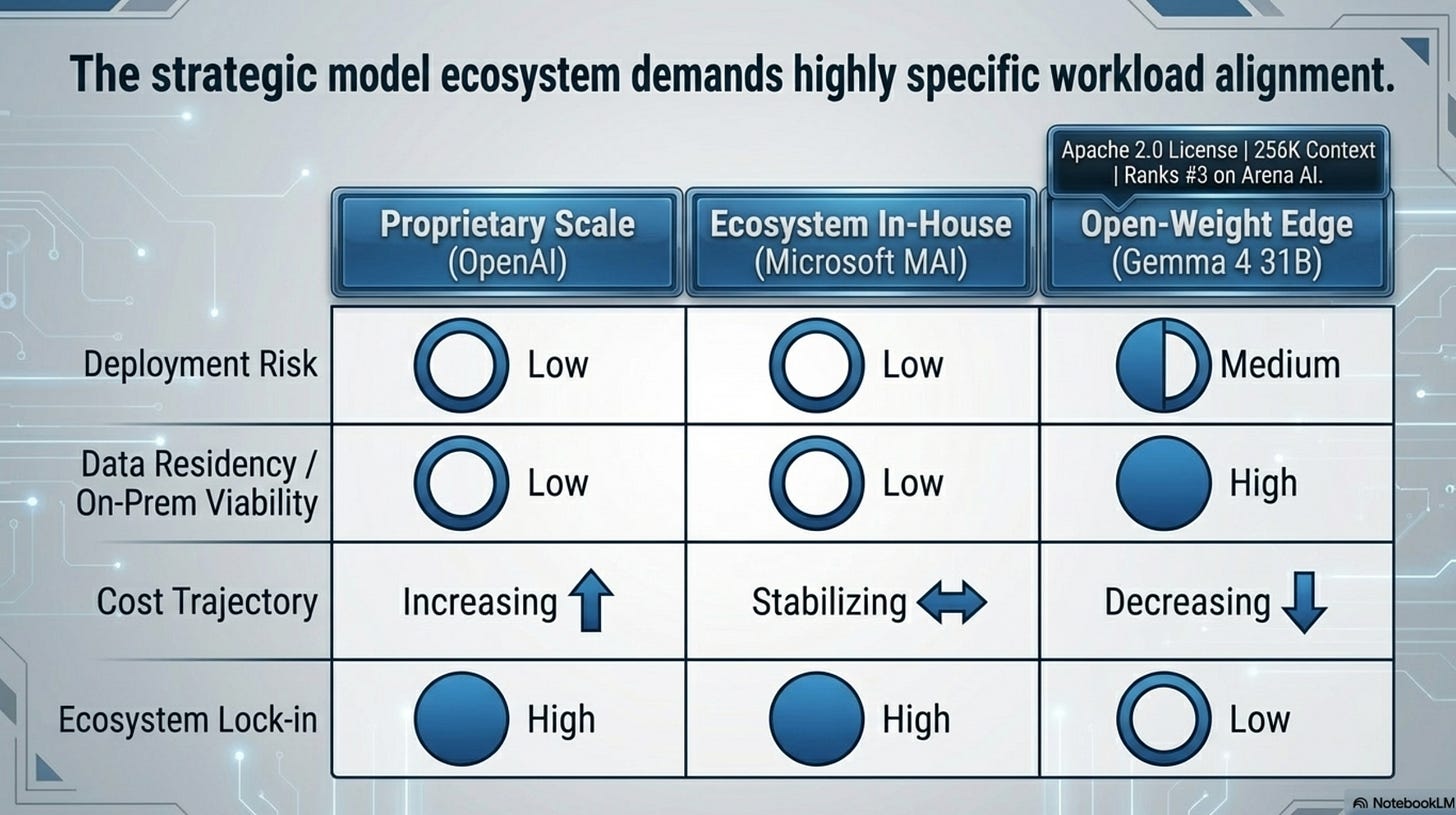

All three are available through Microsoft Foundry and a new MAI Playground. Bloomberg separately reported that Microsoft aims to build its own frontier large language models by 2027.

Why it matters: I wrote in the Copilot Cowork analysis that Microsoft was positioning Claude inside its governance shell because Copilot adoption was lagging. That was a distribution play. This is a different move. Microsoft building its own model stack (starting with speech, voice, and image, with LLMs by 2027) signals they want to reduce the OpenAI dependency that has defined their AI strategy since 2023. For enterprise customers, this is good news: more model options inside the Microsoft ecosystem means more pricing competition and less single-vendor concentration risk. The practical question is whether MAI models show up in Copilot and M365 products, or stay siloed in Foundry for developers.

What to do: Ask your Microsoft account team where MAI models fit in the Copilot roadmap. If you’re running Azure AI workloads that use OpenAI endpoints, watch for MAI alternatives that could reduce your per-token costs. Don’t renegotiate anything yet, but start tracking which Microsoft-native models match your use cases.

3. Microsoft 365 E7 “Frontier Suite” goes transactable May 1

What happened: Microsoft’s E7 tier becomes transactable through partners on May 1 at $99/user/month. The bundle includes E5 + Entra Suite + Copilot + Agent 365, Microsoft’s new agent governance platform priced at $15/user/month as a standalone. Separately, Claude is now available as a model option in mainline Copilot chat (not just through Azure AI Foundry) and in the Researcher agent.

Why it matters: When Microsoft announced pricing, we called E7 “the largest new tier since 2015” in the SaaS pricing analysis. The $99/user/month price point bundles governance into the licensing model. Agent 365 at $15/user/month is the first agent governance platform from a major cloud vendor to be baked into an enterprise productivity suite. That number will dominate IT budget conversations in Q2 and Q3. The Claude-in-Copilot integration matters too: Microsoft is making the multi-model story real at the user level, not just the API level. Every organization running M365 needs a position on E7 before renewal conversations start, because Microsoft reps will be leading with it.

What to do: Pull your current M365 licensing breakdown this week. Calculate the per-user delta between your current tier and E7. If you’re already on E5 + Copilot, the incremental cost for Agent 365 governance might be cheaper than building your own. However, it is early days for Microsoft (or anyone, for that matter) in this space, so make sure to evaluate what’s GA versus what’s still in early preview.
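The delta calculation can be sketched as follows. The $99 and $15 prices come from the announcement above; the $87 current per-user cost and the 2,000-seat count are hypothetical placeholders to swap for your own contracted numbers.

```python
E7_PRICE = 99.00         # USD per user per month, from the announcement above
AGENT_365_PRICE = 15.00  # USD per user per month, standalone


def e7_delta(current_per_user: float, seats: int) -> dict:
    """Per-user and annual cost difference between a current tier and E7."""
    per_user = E7_PRICE - current_per_user
    return {
        "per_user_monthly": per_user,
        "annual_total": per_user * seats * 12,
        # True when moving to E7 costs less per user than adding Agent 365 alone.
        "cheaper_than_standalone_agent_365": per_user < AGENT_365_PRICE,
    }


# Hypothetical inputs: an $87/user/month stack across 2,000 seats.
print(e7_delta(current_per_user=87.00, seats=2_000))
```

The last field is only a rough screen: E7 also bundles Entra Suite, so a fair comparison has to value that component too.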

4. Anthropic Claude Code leak reveals Mythos model and 44 unshipped features

What happened: On March 31, The Register reported that the full Claude Code source (512,000 lines across 1,906 TypeScript files) was published to npm through a misconfigured source map triggered by a Bun bundler bug. Security researchers found 44 unshipped feature flags and references to “Mythos” and “Capybara,” the next-tier model above Opus that Anthropic described internally as a “step change” in capabilities. This is the same Mythos model that surfaced in the CMS leak we covered in Edition #3. This time the exposure came through the software supply chain, not a content system.

Why it matters: Two separate leaks of the same unreleased model through two different attack surfaces in two weeks. The CMS leak was a configuration error. The npm leak was a build toolchain bug that shipped source maps to a public registry. They’re different categories of risk: one sits in content operations, the other in the software supply chain. For every organization publishing npm packages or using Bun as a bundler, this is a concrete audit trigger. The feature flags also tell us something: 44 unreleased capabilities sitting behind flags in production code means Anthropic is shipping and gating simultaneously.

If you’re building on Claude APIs, some of those features will change the integration behavior when they go live.

What to do: Run npm pack --dry-run on your own published packages this week and check whether source maps or internal files are leaking. If you use Bun, verify your bundler config strips source maps from production builds. If you’re an Anthropic enterprise customer, ask about Mythos timing. The capability gap between current Opus and Mythos may affect your model selection roadmap.
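One way to automate that check is to parse the machine-readable output of `npm pack --dry-run --json` and flag anything ending in `.map`. This is a sketch that assumes the JSON layout emitted by recent npm versions (a list of packages, each with a `files` list of `path` entries); the helper names are illustrative, and the suffix list is a starting point to extend with whatever counts as internal in your repository.

```python
import json
import subprocess


def flag_suspect_files(pack_json: str, suffixes: tuple = (".map",)) -> list:
    """Return packaged file paths whose names end with a suspect suffix."""
    report = json.loads(pack_json)
    flagged = []
    for package in report:  # one entry per packed package
        for entry in package.get("files", []):
            if entry["path"].endswith(suffixes):
                flagged.append(entry["path"])
    return flagged


def npm_pack_dry_run(package_dir: str) -> str:
    """Run `npm pack --dry-run --json` in package_dir and return its stdout."""
    result = subprocess.run(
        ["npm", "pack", "--dry-run", "--json"],
        cwd=package_dir, capture_output=True, text=True, check=True,
    )
    return result.stdout


# Example with a hand-written sample instead of a live npm call.
sample = json.dumps([{"files": [
    {"path": "package.json"},
    {"path": "dist/cli.js"},
    {"path": "dist/cli.js.map"},
]}])
print(flag_suspect_files(sample))
```

To check a real package, pass the output of `npm_pack_dry_run("path/to/package")` to `flag_suspect_files` and fail your release job if the result is non-empty.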

5. Google releases Gemma 4 — frontier reasoning goes open-weight

What happened: On April 2, Google released Gemma 4, a family of four open-weight models (2B, 4B, 26B MoE, and 31B Dense) under the Apache 2.0 license. The 31B Dense model ranks #3 on the Arena AI text leaderboard. All models support 256K context windows with vision and audio input, and they run on hardware as small as phones, Raspberry Pis, and NVIDIA Jetson boards.

Why it matters: The open-weight performance gap just collapsed again. A 31B-parameter model that ranks #3 on Arena AI, costs orders of magnitude less to access than the proprietary competition, and runs on a Raspberry Pi is mind-blowing.

For regulated industries that can’t send data to external APIs (healthcare, financial services, defense), Gemma 4 is the strongest case yet for on-premises AI that doesn’t require compromising on quality. The Apache 2.0 license removes the commercial restrictions that limited earlier open models. The 256K context window with multimodal input means these aren’t toy models. They can handle real enterprise document workflows.

What to do: If you’ve been blocked on AI adoption by data residency requirements, test Gemma 4 31B Dense against your actual workloads this week. The benchmark numbers are impressive, but what matters is whether it handles your documents, your domain vocabulary, your edge cases. Compare the total cost of running it on-premises against your current API spend.
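A rough sketch of that cost comparison, assuming straight-line hardware amortization and a flat monthly operating cost. Every number here is a hypothetical placeholder; nothing in it reflects actual Gemma 4 hardware requirements or any vendor’s API pricing.

```python
from typing import Optional


def monthly_on_prem_cost(hardware_cost: float, amortization_months: int,
                         monthly_ops: float) -> float:
    """Amortized hardware plus power, hosting, and staff time per month."""
    return hardware_cost / amortization_months + monthly_ops


def break_even_months(hardware_cost: float, monthly_ops: float,
                      monthly_api_spend: float) -> Optional[float]:
    """Months until the hardware pays for itself, or None if it never does."""
    monthly_saving = monthly_api_spend - monthly_ops
    if monthly_saving <= 0:
        return None
    return hardware_cost / monthly_saving


# Hypothetical inputs: $60K of hardware, $1.5K/month to run, $4K/month API bill.
print(monthly_on_prem_cost(60_000, 36, 1_500))
print(break_even_months(60_000, 1_500, 4_000))
```

If the break-even point lands beyond the hardware’s useful life, the case for on-premises rests on data residency rather than cost.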

Also, I tried it on my iPhone 17 Pro Max with LocallyAI. It’s kind of surreal to have a model of this much capability in my pocket!

6. MCP crosses from protocol to production — Pinterest saves 7,000 hours monthly

What happened: The MCP Dev Summit in NYC (April 2-3) put some real numbers on what had been mostly anecdotal adoption. MCP now sees 97M monthly SDK downloads across 5,800+ community servers. Pinterest presented the standout case study: domain-specific MCP servers handling 66,000 invocations per month across 844 users, saving an estimated 7,000 hours monthly. OAuth 2.1 enterprise authentication is shipping in Q2.

Why it matters: I flagged MCP’s security problems in Edition #2 (36.7% of servers vulnerable to SSRF). Those problems haven’t disappeared, but the protocol crossed a threshold this week that makes “wait and see” untenable. 97M monthly SDK downloads is infrastructure-grade adoption, not a developer experiment anymore.

Pinterest ran domain-specific servers with usage tracking and saved 7,000 hours a month. That’s the pattern worth copying. The OAuth 2.1 announcement in Q2 addresses the enterprise authentication gap directly. The question has shifted from “should we adopt MCP” to “how do we govern it before shadow MCP servers show up in our environment” — the same pattern we described in the Speakeasy Problem.

What to do: Check whether your engineering teams are already running MCP servers. At 97M SDK downloads, the odds that someone in your org has experimented are high. If they have, get the Pinterest governance pattern in front of them: domain-specific servers with usage tracking, not a free-for-all. If they haven’t, the OAuth 2.1 Q2 timeline gives you a natural starting point for a governed pilot.
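If you do run a governed pilot with usage tracking, the Pinterest figures give a rough yardstick to compare against. The sketch below just divides the reported totals; it assumes all three numbers cover the same month and that savings are spread evenly, neither of which Pinterest stated.

```python
def per_unit_savings(invocations: int, users: int, hours_saved: float) -> dict:
    """Break monthly totals down per invocation and per user."""
    return {
        "minutes_saved_per_invocation": hours_saved * 60 / invocations,
        "hours_saved_per_user": hours_saved / users,
        "invocations_per_user": invocations / users,
    }


# Figures reported in the Pinterest case study above.
pinterest = per_unit_savings(invocations=66_000, users=844, hours_saved=7_000)
for name, value in pinterest.items():
    print(f"{name}: {value:.1f}")
```

That works out to roughly 6.4 minutes saved per invocation and about 8.3 hours per user per month. Feed your own pilot’s tracked totals into the same function to see how you compare.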

7. EU AI Safety Office goes live — enforcement starts within 48 hours

What happened: The EU’s central AI enforcement body, the AI Safety Office, officially began operations on April 1. Within its first 48 hours, it launched formal inquiries into two European fintech companies over their AI-driven credit scoring models and issued documentation requests to several major U.S. cloud providers to verify compliance with model transparency rules.

Why it matters: AI regulation just went from “future concern” to “active enforcement.” Two days from opening the doors to issuing formal inquiries signals that the office arrived with a target list. For any enterprise deploying AI systems that serve EU customers, particularly in financial services, hiring, or healthcare, the compliance clock started this week.

The documentation requests to U.S. cloud providers also mean this isn’t just a European company problem. If we’re running models through Azure, AWS, or GCP that touch EU data, our vendors are now fielding regulator questions about how those models work.

What to do: Check whether any of your AI-driven workflows would qualify as “high-risk” under the EU AI Act (credit decisions, hiring screening, customer risk scoring).

If they do, verify that your technical documentation meets the transparency requirements. If you’re relying on a cloud provider’s model, ask them directly what they disclosed to the AI Safety Office and what that means for your compliance posture.

8. NIST moves to standardize AI agent identity and authorization

What happened: NIST closed the public comment period on April 2 for its “AI Agent Identity and Authorization Concept Paper.” This is the first step toward federal technical standards for how AI agents are identified, secured, and authorized to act on behalf of users or organizations. The paper addresses agent authentication, scope-of-action boundaries, and audit trail requirements.

Why it matters: NIST standards have a way of becoming mandatory by gravity. They start as guidelines, get adopted by federal procurement (FedRAMP, FISMA), then migrate into regulated industry requirements. This matters right now because Microsoft just shipped Agent 365 (Signal #3) and MCP just crossed 97M monthly SDK downloads (Signal #6). We’re building the agent infrastructure layer this quarter and beyond.

The identity and authorization architecture NIST defines will shape how every enterprise governs AI agents over the next 3-5 years.

What to do: Read the NIST concept paper before the final standard drops. If you’re building or piloting AI agents, map your current agent identity model against NIST’s framework: how do your agents authenticate, what scope boundaries exist, and what audit trail do they produce?

If the answer to any of those is “we haven’t decided yet,” this paper is your starting architecture.
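One lightweight way to run that mapping is to record each agent’s answers in a small structure and list what is still undecided. The field names below mirror the three questions above; they are illustrative and are not taken from NIST’s paper.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class AgentIdentityProfile:
    """Answers to the three mapping questions for one agent (hypothetical schema)."""
    name: str
    authentication: Optional[str] = None             # how the agent authenticates
    scope_boundaries: List[str] = field(default_factory=list)  # what it may do
    audit_trail: Optional[str] = None                # where its actions are logged


def undecided(profile: AgentIdentityProfile) -> List[str]:
    """Return the questions this agent has no answer for yet."""
    gaps = []
    if not profile.authentication:
        gaps.append("authentication")
    if not profile.scope_boundaries:
        gaps.append("scope_boundaries")
    if not profile.audit_trail:
        gaps.append("audit_trail")
    return gaps


# Hypothetical agent with only its authentication method decided.
invoice_bot = AgentIdentityProfile(name="invoice-bot",
                                   authentication="OAuth client credentials")
print(undecided(invoice_bot))
```

Any agent that returns a non-empty list is a candidate for review against the concept paper.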

What signal did we miss? If you’re tracking AI developments in energy infrastructure, education, or defense that should have made the cut, hit reply.
