The Rungs Are Gone: AI Isn't Replacing Workers — It's Locking Out the Next Generation

The unemployment rate looks fine. That’s the problem.

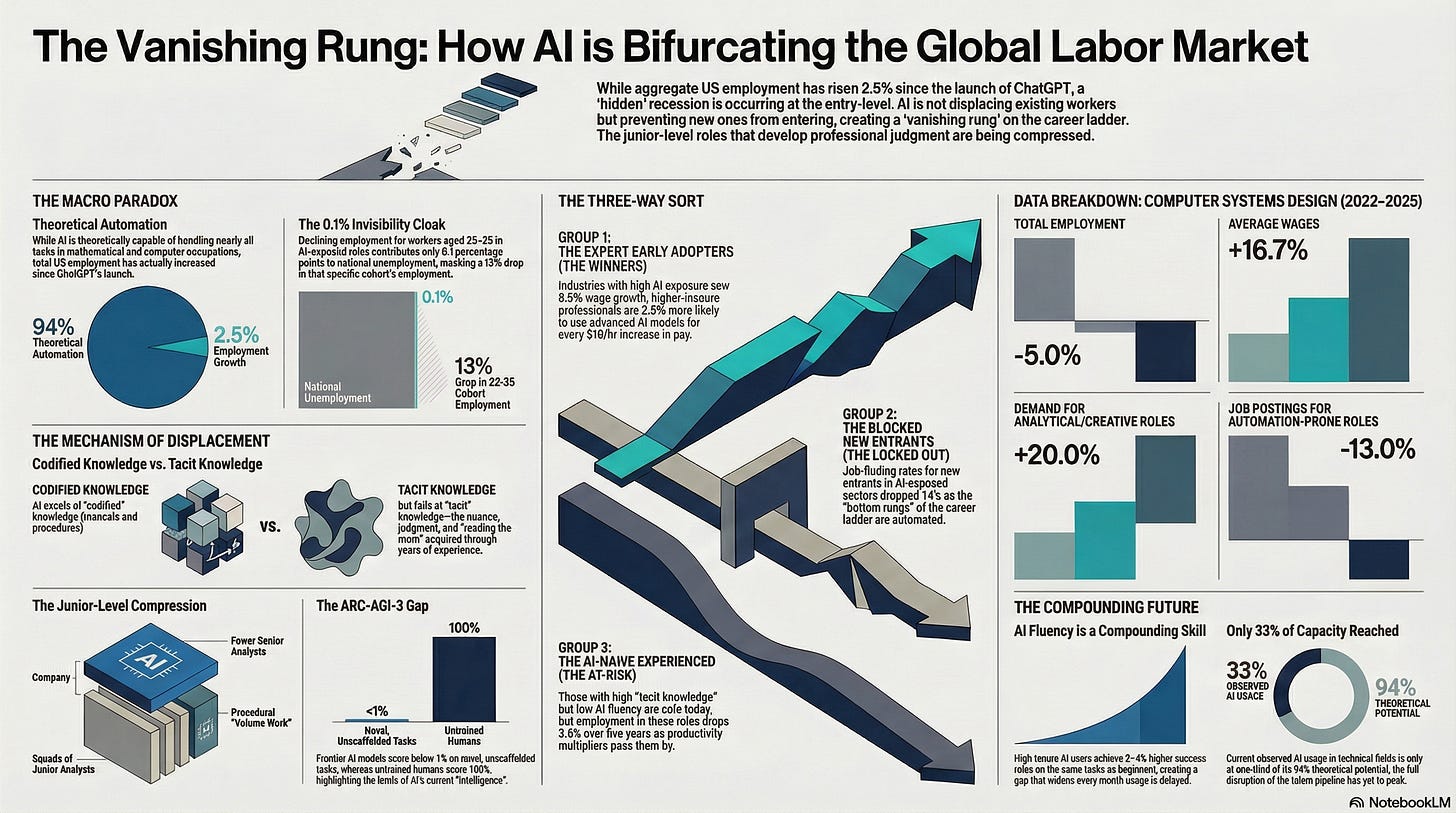

Earlier this month, a Fortune headline warned of “a Great Recession for white-collar workers” based on Anthropic’s own labor market research. The researchers found that AI is theoretically capable of handling 94% of tasks in computer and mathematical occupations. That sounds alarming. Then you look at the unemployment data — US total employment is up 2.5% since ChatGPT launched in November 2022 — and the alarm fades. Surely if AI were devastating the labor market, it would show up somewhere.

It does. Just not where anyone is looking.

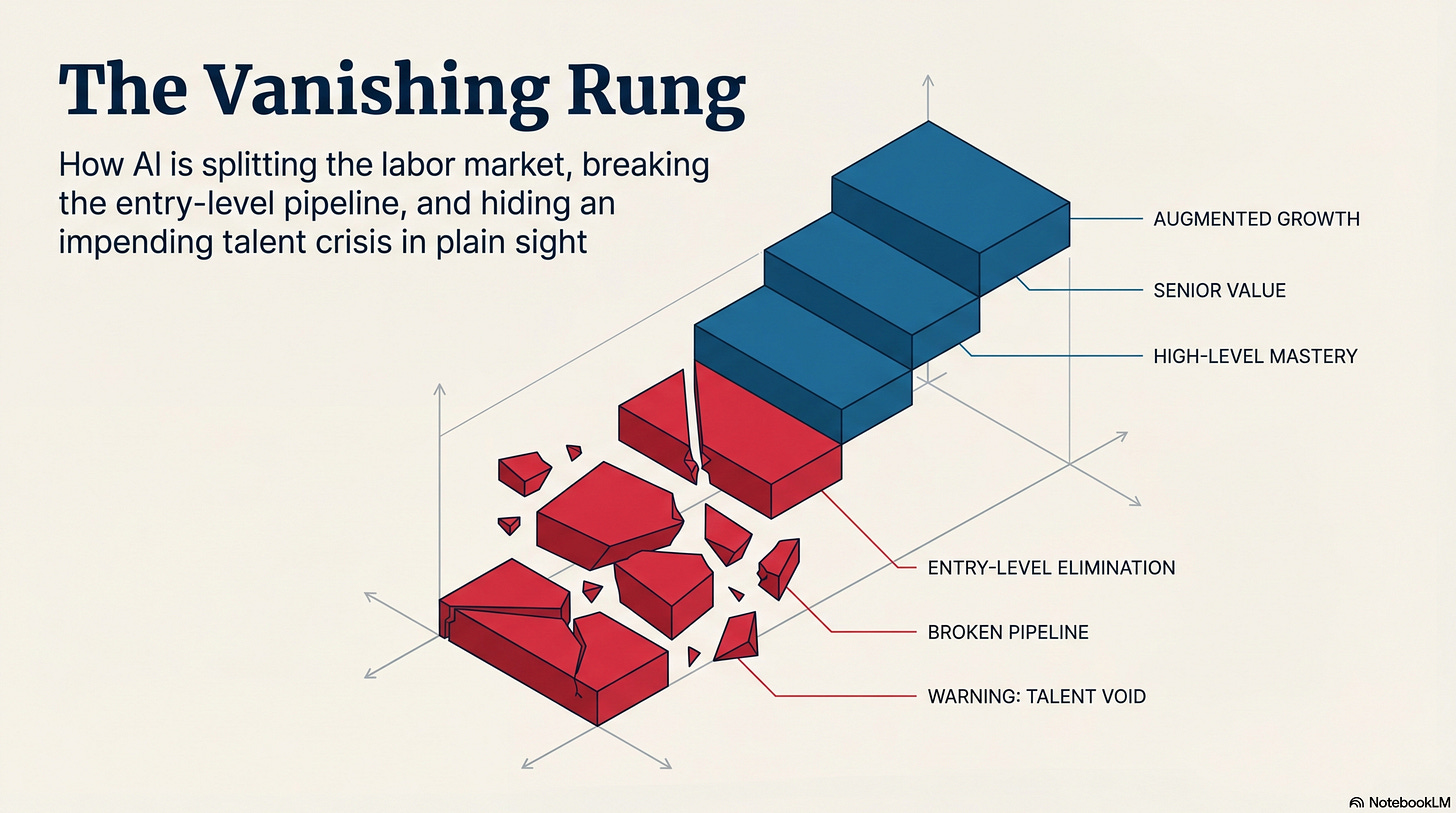

The AI labor market story isn’t about layoffs. It’s about new entries. Experienced workers are getting wage increases. Young workers are getting locked out before they start. And the aggregate employment statistics — which measure people who had a job and lost it, not people who never got one — are hiding the split entirely.

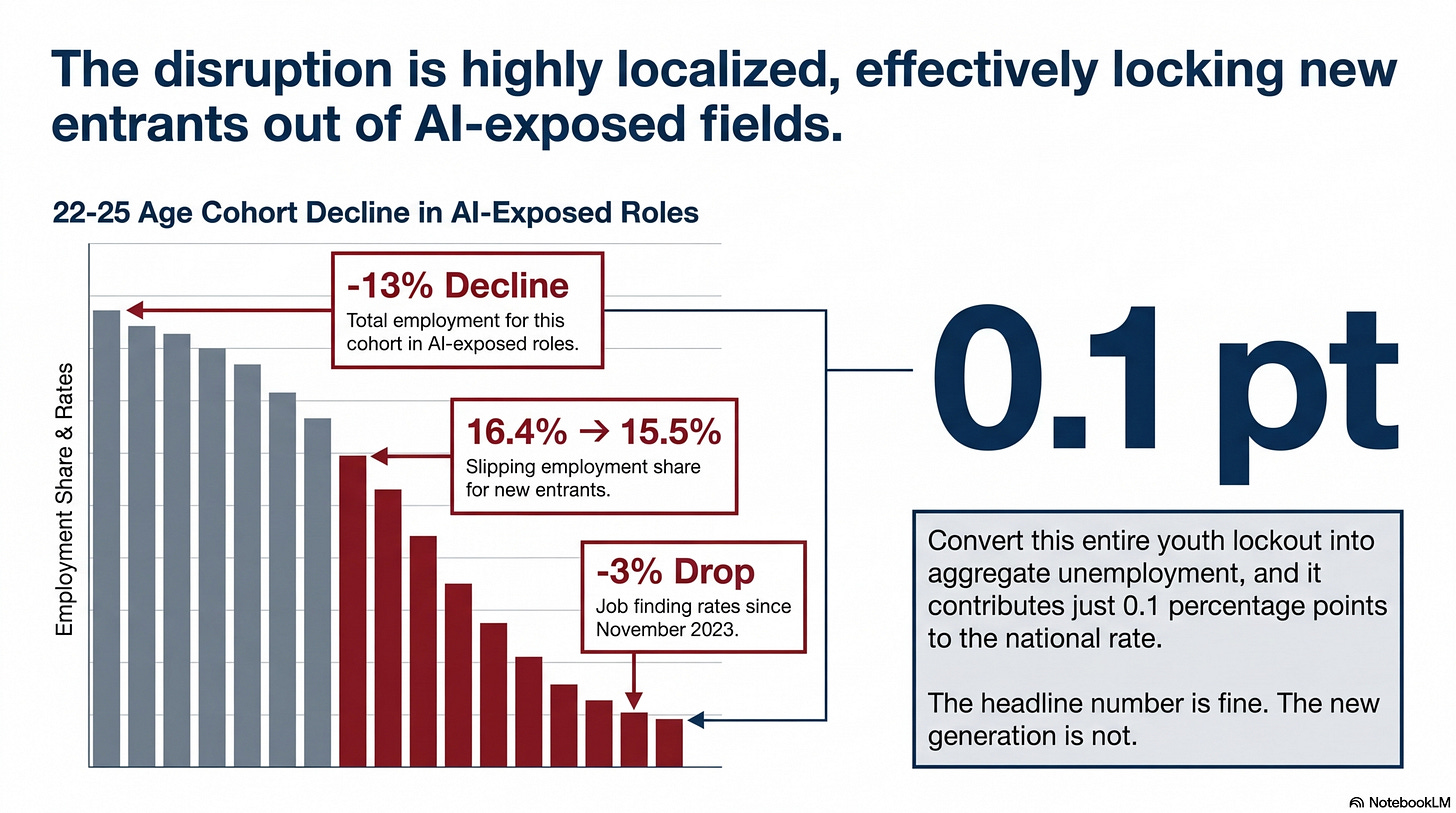

The number that explains everything: 0.1

The Dallas Fed (yes, Dallas has a Fed) published research in January 2026 on employment trends among workers aged 22-25 in AI-exposed occupations — jobs where AI can directly perform a significant portion of tasks. Employment in those roles has declined 13% since 2022. Employment share in the most AI-exposed occupations has slipped from 16.4% to 15.5% for this cohort. Job finding rates for new entrants in the most AI-exposed sector dropped more than 3% since November 2023.

Add all of that up and convert it into aggregate unemployment, and it contributes 0.1 percentage points to the national unemployment rate.

The headline number might seem fine. The generation of new grads trying to enter the workforce in AI-exposed fields is not.
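The figures above mix two units, percent and percentage points, and it helps to keep them apart. Here is a quick sketch in Python that uses only the employment-share numbers quoted from the Dallas Fed research. The function name is mine, purely for illustration.

```python
def share_change(before_pct, after_pct):
    """Return (percentage-point change, relative percent change) between two shares."""
    point_change = after_pct - before_pct
    relative_change = point_change / before_pct * 100
    return point_change, relative_change

# Employment share of 22-25 year olds in the most AI-exposed occupations,
# as quoted above: 16.4% before, 15.5% after.
points, relative = share_change(16.4, 15.5)
print(f"{points:+.1f} percentage points, {relative:+.1f}% relative")
```

A slip of under one percentage point is a drop of roughly 5.5% in the cohort's share of those occupations. The same logic runs in reverse for the headline number: a change that is large for one small cohort can round to almost nothing once it is spread across the whole labor force.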

A parallel piece of research from the Dallas Fed clarifies the mechanism. AI can automate what economists call codified knowledge — things we learned from a textbook, a training manual, or a procedure document. It has much more difficulty with tacit knowledge — the judgment you build from years of seeing edge cases, reading the room, knowing which rules actually matter in practice.

A new lawyer follows procedure. A senior partner knows when the client needs something other than the technically correct answer. AI has gotten very good at the procedure part. The senior partner is fine. The new associate who was supposed to learn by doing that procedural work? That’s where it breaks.

The ARC-AGI-3 benchmark, released March 25, puts a number on how wide this gap actually is. It tests whether AI can figure out the rules of a novel environment from scratch — no instructions, no stated goals, just exploration and adaptation.

Every frontier language model scored below 1%. Untrained humans scored 100%.

That’s not measuring raw intelligence. It’s measuring exactly what the Dallas Fed means by tacit knowledge: reading an unfamiliar situation and figuring out what matters.

This explains why the data looks so contradictory. Computer systems design employment is down 5% since ChatGPT launched. Computer systems design wages are up 16.7% over the same period. In the same sector, at the same time: fewer jobs, higher pay. What that tells us is that the junior-level volume work is being compressed while the experienced-level judgment work is getting more valuable, and the split between them is widening.
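A back-of-the-envelope check makes the "fewer jobs, higher pay" point concrete. Assume the wage figure is an average per worker over the same workforce the employment figure counts. That is my assumption, not something the quoted figures state. The implied change in the sector's total wage bill is then just the product of the two changes:

```python
def implied_wage_bill_change(employment_change, wage_change):
    """Combine fractional changes in headcount and average wage into a
    fractional change in total pay. Assumes both describe the same workforce."""
    return (1 + employment_change) * (1 + wage_change) - 1

# Figures quoted above for computer systems design since ChatGPT launched:
# employment down 5%, wages up 16.7%.
wage_bill_change = implied_wage_bill_change(-0.05, 0.167)
print(f"{wage_bill_change:+.1%}")
```

Under that assumption, the sector is paying roughly 11% more in total to about 5% fewer people. That is hard to square with a pure demand slump and easier to square with a shift in who is being paid. Whether it holds in real terms depends on details the quoted figures don't give.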

The three-way sort

Harvard Business School researchers analyzed 900+ occupations and 19,000+ job tasks between 2019 and March 2025. They found a 13% decline in job postings for automation-prone occupations since ChatGPT launched. In the same period: a 20% growth in demand for analytical, technical, and creative roles.

This isn’t a labor market that’s contracting. It’s one that’s splitting, and the gap between the halves is getting wider.

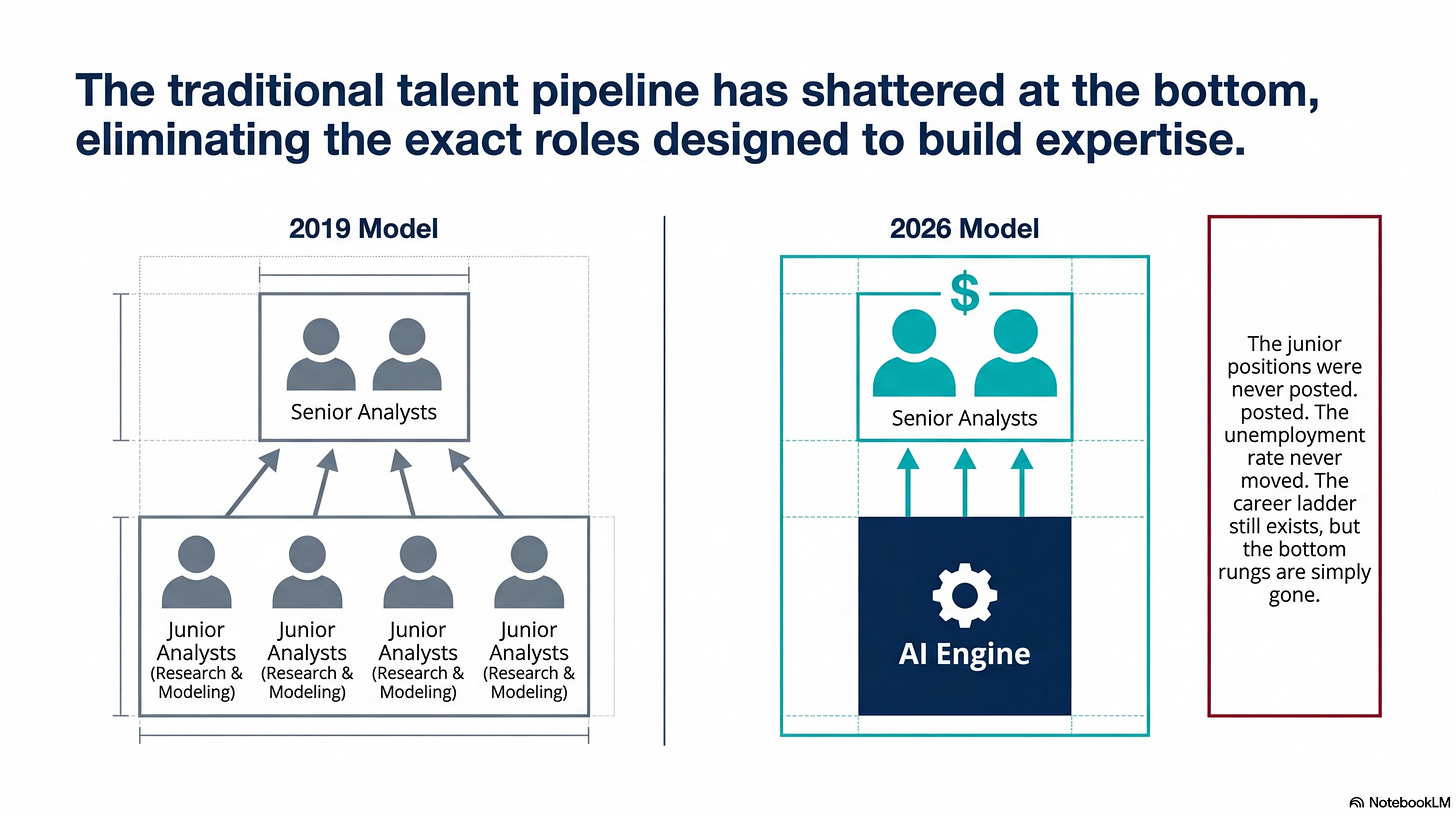

A financial services firm that used to hire four junior analysts to research companies and build initial models now hires two senior analysts who use AI to do the research and modeling work that the juniors would have done. The senior analysts’ salaries are higher. The junior analyst positions were never posted. The unemployment rate never moved.

The data points toward three distinct groups.

A caveat before we go further: I take Anthropic’s studies on its own users with a grain of salt. Some of the findings read like McKinsey publishing research showing that McKinsey-style engagements deliver above-market returns. We’ve all seen that slide. We all mentally discount it.

The first is the early adopters who already have domain expertise. They’re winning, and the numbers aren’t subtle. The top 10% of industries by AI exposure saw 8.5% wage growth. The higher the hourly wage of the occupation, the more likely workers are to be using Claude’s most capable model (Opus). Every $10 more per hour in wages is associated with 2.8 percentage points more Opus usage in professional contexts. They’re not just using AI; they’re using it better, on harder problems, and getting paid more for it. I do agree that model capabilities differ significantly between free and paid accounts.

The second group is new entrants trying to break into AI-exposed fields. The entry-level positions that would have given them tacit knowledge — the junior analyst work, the associate roles, the early-career coding jobs — are being compressed by AI before they can get there. Job-finding rates in their target occupations dropped 14%. The career ladder still exists. The bottom rungs are just gone.

The third is experienced workers who haven’t figured out AI yet. They’re not being displaced today — their tacit knowledge still commands a premium. But the IMF found that in regions with high AI skill demand, employment in AI-exposed occupations with low human-AI complementarity (where the work doesn’t benefit much from pairing with AI) is 3.6% lower after five years. If that experience is valuable because it represents the old way of doing things — not because it pairs well with AI — the protection has an expiration date. There is still a market for COBOL programmers.

What the latest Anthropic data adds

On March 24, Anthropic published the newest edition of its Economic Index, titled “Learning Curves.” The central finding: high-tenure Claude users achieve 3-4 percentage points higher success rates in their conversations — after controlling for task type, model choice, language, use case, and country.

With the earlier caveat about Anthropic studying its own users in mind, the compounding finding still tracks with what the independent research shows. AI fluency is a compounding skill.

That’s not because experienced users are doing harder things. It’s because they’ve developed habits and strategies that produce better outcomes on the same tasks.

Someone who started using Claude seriously a year ago isn’t just 12 months ahead. They’re qualitatively better at getting value from it, in ways that persist even when we control for everything else.

That’s the compounding effect. It doesn’t close on its own. Every month of serious usage widens the gap between someone who’s been at it and someone who hasn’t started.

The report also shows that average task value on Claude.ai is declining — from $49.3 to $47.9 in hourly wage terms — as mass-market users arrive with simpler tasks. The averages are dropping while the skill ceiling keeps rising. Both at the same time.

49% of jobs have now seen at least 25% of their tasks performed using Claude. We’re not early anymore. And yet, for computer and mathematical occupations — the most exposed cohort — observed AI usage is at 33% of what it’s theoretically capable of. The ceiling is 94%.

Let that percolate for a second. At one-third of theoretical capacity, we’re already seeing visible entry restriction and a widening skill premium. The full disruption hasn’t started.

ARC-AGI-3 defines where that ceiling stops. The compounding fluency advantage from Learning Curves accrues within known, structured domains — the kind of work AI has already been engineered to assist with.

On genuinely novel, unscaffolded tasks, the gap between AI and an untrained human is still near-total.

The 94% theoretical disruption ceiling assumes AI is operating within human-built scaffolding. Outside it, the floor drops fast.

So what do we do with this

Most organizations are watching the macro data and concluding things are fine. Total employment is up. Unemployment is stable. The “Great Recession” didn’t arrive on schedule.

The macro data is measuring the wrong thing.

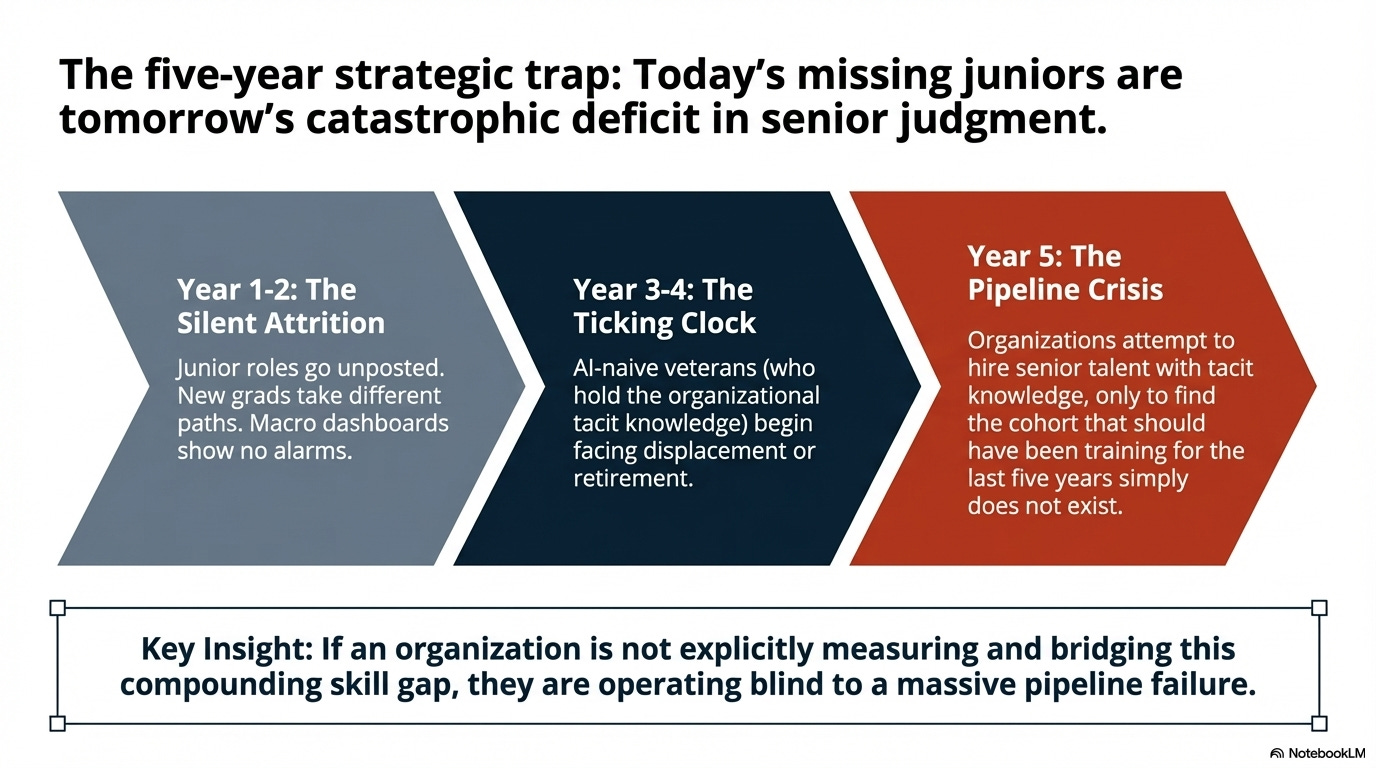

The disruption is likely in the talent pipeline. The junior-level talent that organizations rely on to grow senior-level capability isn’t forming at the rate it used to. In five years, the shortage of people who learned by doing the work that AI now does will be a real constraint, and it won’t show up in any dashboard until it’s already a problem.

That compounding skill gap is the most urgent message.

People who’ve been using AI seriously for a year are already meaningfully more productive than colleagues who started six months later. That gap won’t close on its own. If we’re not measuring it, we’re ignoring it.

Most hiring strategies still assume a steady flow of entry-level talent in AI-exposed roles. The pipeline is narrower than it was two years ago. The junior analyst, junior developer, and junior research roles that used to absorb new graduates are posting less frequently. The people who would have taken those paths are taking different ones.

And the experienced-but-AI-naive (not AI-native) workers? They’re the highest-risk cohort in years 3-5, not year 1. Their tacit knowledge is real and valuable today. But they haven’t paired it with AI fluency, which means we’re getting experienced judgment without the productivity multiplier.

When those workers eventually retire or leave, there may not be a junior pipeline ready to replace them.

To be fair

There’s a legitimate version of the skeptical case. The 22-25 cohort employment numbers in AI-exposed occupations declined between 2022 and 2025, but that window also covers the unwinding of one of the most unusual hiring environments in recent memory. Tech companies hired aggressively through 2021 and into 2022 on the assumption that zero-interest-rate conditions and pandemic-driven demand would persist. Those conditions didn’t last.

The correction that followed (tens of thousands of layoffs across the sector, hiring freezes at companies that had tripled their headcount in two years) would have depressed entry-level employment regardless of what OpenAI shipped in November 2022. The Dallas Fed data shows the mechanism is lower inflow, not mass layoffs, which is what you’d expect from both an AI-driven structural shift and a post-bubble hiring freeze.

Separating the two is genuinely difficult.

There’s a second skeptical argument worth taking seriously, and ARC-AGI-3 actually hands the skeptics some ammunition.

Researchers testing Opus 4.6 with a hand-crafted task harness on a familiar environment found 97% accuracy. The same model, same harness, on an unfamiliar environment: 0%. That collapse suggests the disruption may be bounded: AI performs well on codified tasks when human engineers have built the scaffolding around it. Without that scaffolding, in genuinely novel contexts, the capability evaporates.

Jobs that look codified on a job description but regularly involve novel situations may be more durable than the theoretical automation percentages suggest.

What makes the AI hypothesis more than just a convenient narrative is what’s happening to wages at the same time as the headcount contraction. If this were purely a post-bubble correction, you’d expect wages to track employment down — or at minimum to flatten. Instead, computer systems design wages are up 16.7% over the same period that employment declined 5%. The experienced workers who remained got paid more. The junior roles being eliminated weren’t just cyclical budget cuts; they were being replaced by something. The wage premium for survivors, sustained across multiple research sources with different methodologies, is the signal that survives the skeptic’s objection.

We’ve been stuck on a binary — replacement or augmentation. The data says it’s something more specific. A three-way sort, happening at the entry point rather than the exit. That’s the question worth sitting with: which side of the sort are the people around us landing on?

References:

Anthropic Economic Index: “Learning Curves” — March 24, 2026 (https://www.anthropic.com/research/economic-index-march-2026-report)

Anthropic Economic Index: “Labor Market Impacts” (https://www.anthropic.com/research/labor-market-impacts)

Harvard Business School: “Displacement or Complementarity?” via HBR — March 2026 (https://hbr.org/2026/03/research-how-ai-is-changing-the-labor-market)

Dallas Fed: “AI is simultaneously aiding and replacing workers” — February 2026 (https://www.dallasfed.org/research/economics/2026/0224)

Dallas Fed: “Young workers’ employment drops in high AI exposure occupations” — January 2026 (https://www.dallasfed.org/research/economics/2026/0106)

IMF: “New Skills and AI Are Reshaping the Future of Work” — January 2026 (https://www.imf.org/en/blogs/articles/2026/01/14/new-skills-and-ai-are-reshaping-the-future-of-work)

Goldman Sachs: “How Will AI Affect the US Labor Market?” — March 2026 (https://www.goldmansachs.com/insights/articles/how-will-ai-affect-the-us-labor-market)

ARC Prize: “ARC-AGI-3 Launch” — March 25, 2026 (https://arcprize.org/blog/arc-agi-3-launch)

ARC Prize: “ARC-AGI-3 Preview: 30-Day Learnings” (https://arcprize.org/blog/arc-agi-3-preview-30-day-learnings)