Microsoft just put numbers on the operating-model problem

Org factors (culture, manager support, talent practices) account for 2x the AI impact of individual mindset and behavior.

Microsoft’s 2026 Work Trend Index puts numbers on what every CIO already suspects: the operating model is the bottleneck, not the tools.

Microsoft published its 2026 Work Trend Index this week with a finding that should change how enterprises measure the return on AI. The company surveyed 20,000 knowledge workers across 10 countries and crunched through a year of usage data from Microsoft 365 agents.

The headline: how a company runs (its culture, its managers, how it treats people) drives 2x the AI payoff that individual mindset and behavior do.

Why this matters now

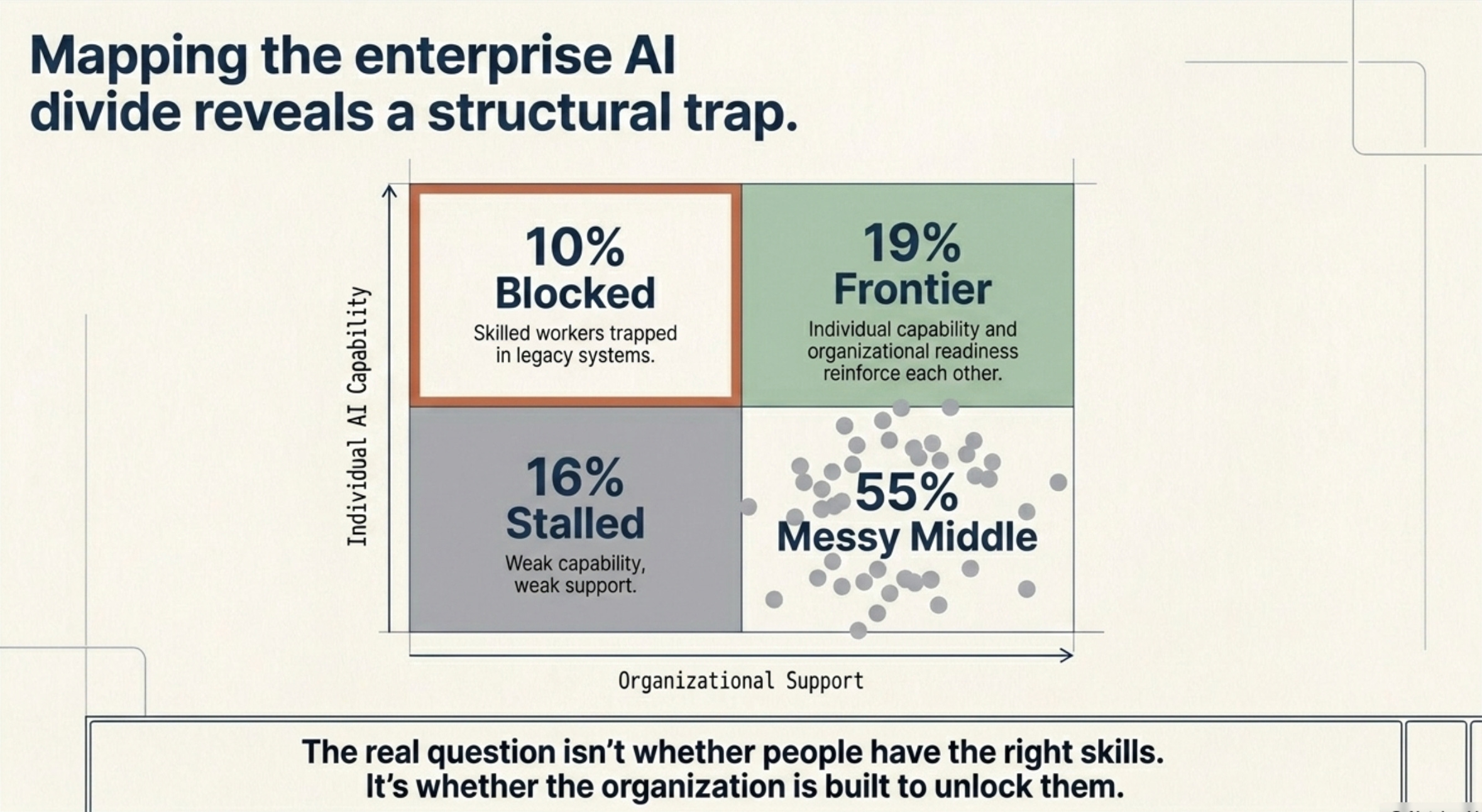

Only 19% of AI users sit in what Microsoft calls the Frontier zone — where the person is ready and the company is ready.

10% are in “blocked agency”: skilled workers stuck in companies that haven’t caught up.

16% are stalled, with low skill and weak support.

About half live in the messy middle.

Microsoft ran 29 different factors (job level, industry, market, company size, the works) through a random-forest analysis, and the company’s AI culture came out roughly 2.5x as important as the biggest individual factor.

The most AI-fluent employees are reinventing how they work faster than performance reviews, training programs, and HR practices can keep up. That’s how companies lose the payoff.

“The real question isn’t whether people have the right skills. It’s whether the organization is built to unlock them.”

Key shifts

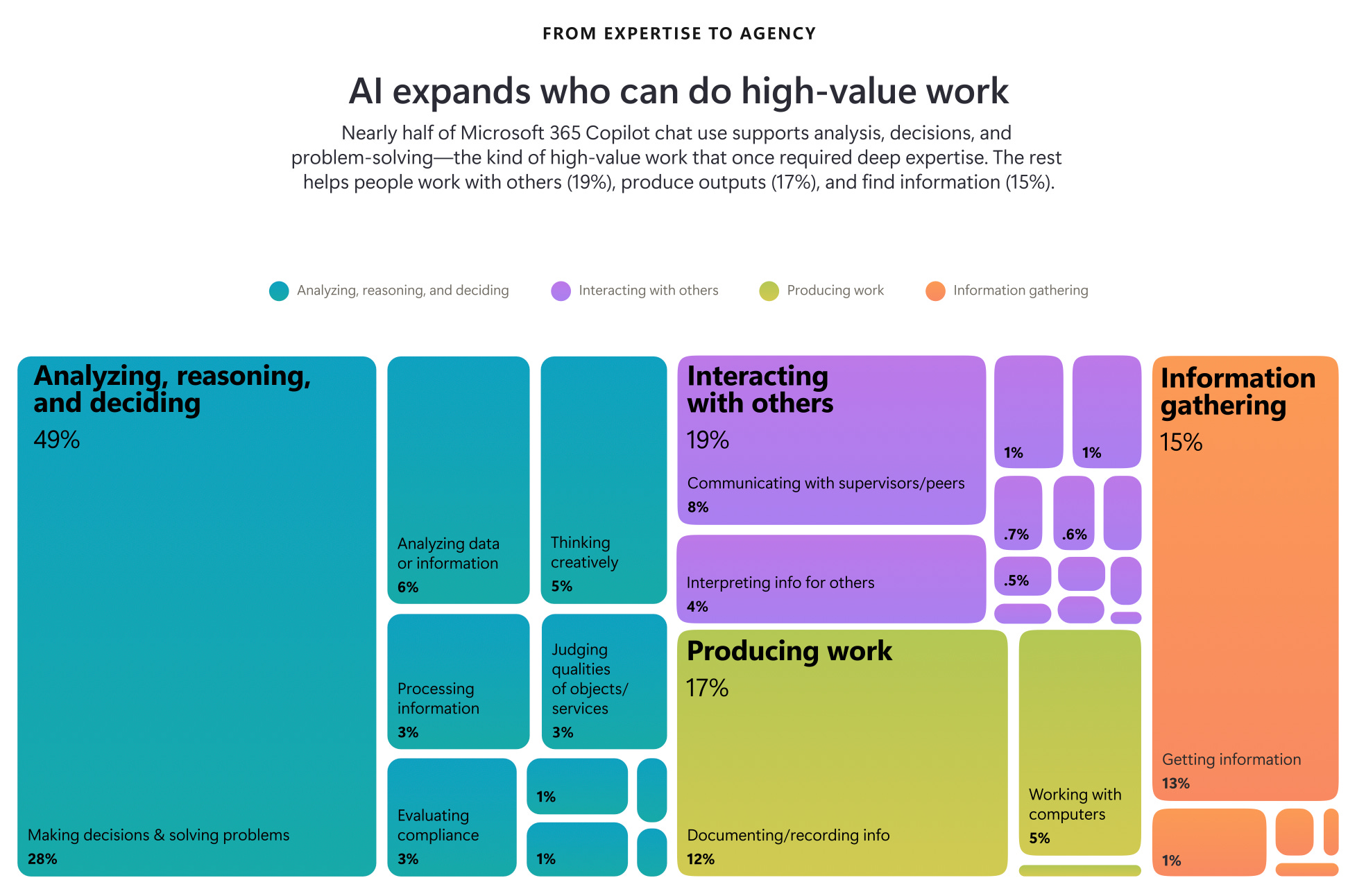

Workers are ready. 58% of AI users say they’re shipping work they couldn’t a year ago, climbing to 80% among Frontier Professionals (the top 16%). 86% treat AI output as a starting point, not a final answer. The “AI is doing the thinking for them” panic isn’t showing up in the data.

Leaders aren’t aligned. Only 26% of AI users say leadership is clearly and consistently on the same page about AI. Only 13% say they’re rewarded for trying new ways of working when the experiment doesn’t immediately ship results. 65% worry about falling behind if they don’t adapt fast. 45% say it feels safer to keep hitting current goals than to redesign how the work gets done. Most performance reviews still pay people to deliver the old way while asking them to invent the new one.

Manager modeling has real lift. A separate Microsoft People Science study (1,800 workers, July 2025) found that when managers use AI visibly in front of their teams, employees report a 17-point lift in seeing AI as valuable, a 22-point lift in thinking critically about how AI is being used, and a 30-point lift in trusting AI agents. That’s the manager effect with numbers attached.

Frontier Professionals build on themselves. They’re more than twice as likely to say they’re rewarded for reinventing how work gets done even when the experiment doesn’t ship right away (26% vs. 11%). They cluster in companies that write down how their agents work at the team level (26% vs. 19%), function level (29% vs. 17%), and company level (25% vs. 14%). The places they work actually capture what they learn. That’s the difference.
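To make the size of those gaps easier to compare, here is a minimal Python sketch that turns each pair of percentages quoted above into a ratio and a percentage-point gap. The dictionary labels and the function name are shorthand invented for this sketch, not terms from the report, and the second number in each pair is treated simply as the comparison figure the text gives.

```python
# Pairs quoted in the paragraph above:
# (Frontier Professionals %, comparison figure %).
# Labels are illustrative shorthand, not wording from the report.
gaps = {
    "rewarded for reinvention": (26, 11),
    "agents documented: team level": (26, 19),
    "agents documented: function level": (29, 17),
    "agents documented: company level": (25, 14),
}


def likelihood_ratio(frontier_pct: float, comparison_pct: float) -> float:
    """How many times more common the answer is among Frontier Professionals."""
    return frontier_pct / comparison_pct


ratios = {label: likelihood_ratio(f, c) for label, (f, c) in gaps.items()}
point_gaps = {label: f - c for label, (f, c) in gaps.items()}

for label in gaps:
    print(f"{label}: {ratios[label]:.2f}x, {point_gaps[label]} points")
```

On these figures the reward gap is the widest of the four in both ratio and point terms, and among the documentation levels the team-level gap is the narrowest.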

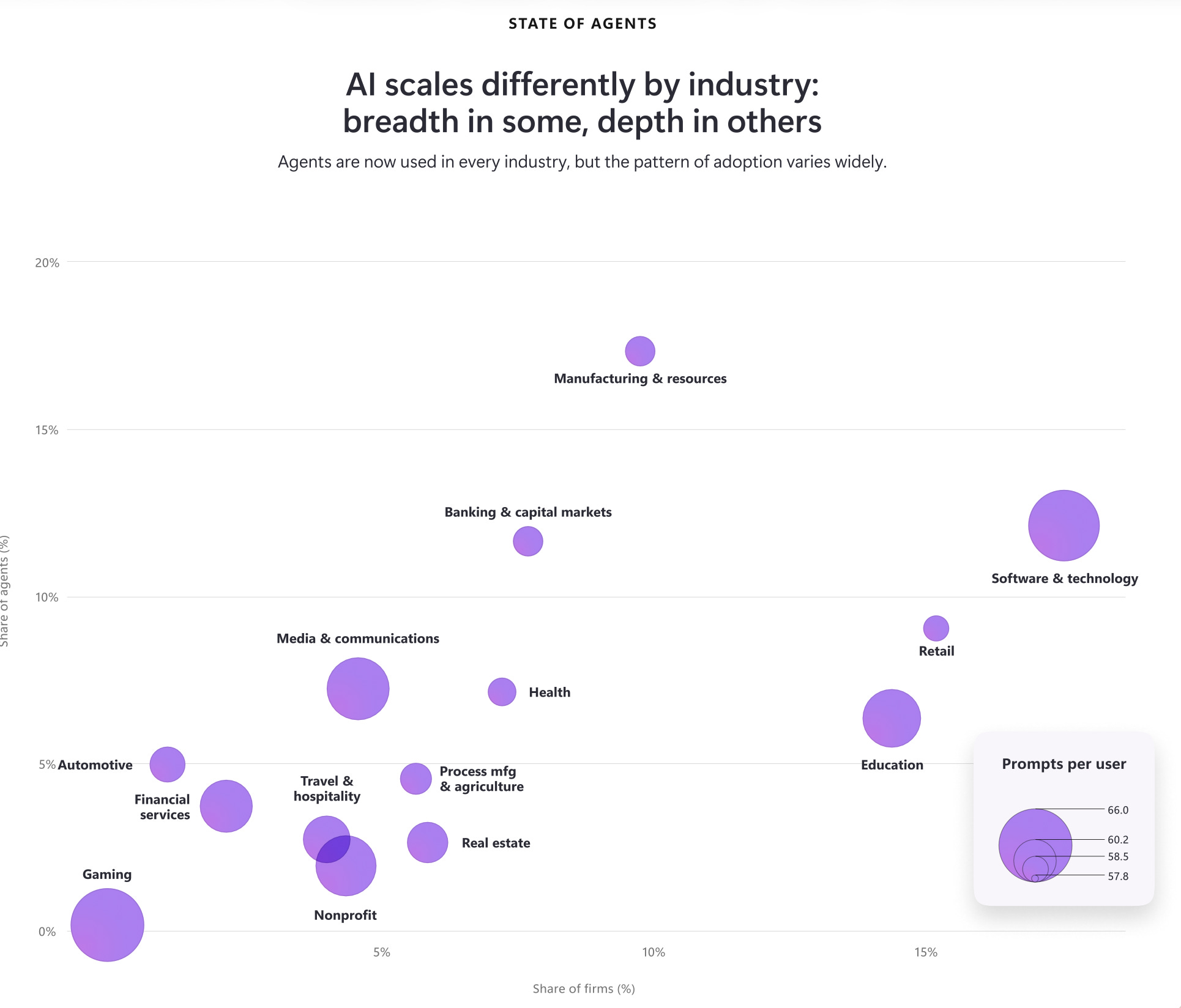

More agents doesn’t mean better use of agents. Active agents inside Microsoft 365 grew 15x year over year, 18x in large enterprises. That’s the growth number. The one that matters is whether companies have written down how their agents work, where humans step in, and what quality looks like. Most firms can’t answer that, even the ones with big agent counts.

Risk if leaders ignore this

Three downsides.

First, talent flight

Frontier Professionals cluster in companies that reward reinvention. When the reward system punishes experiments that don’t ship right away, your AI-fluent people leave for places that don’t penalize them. They take the institutional knowledge with them.

Second, lost compounding

The report calls it “Owned Intelligence”: the know-how that builds up over time because the company captures what its agents learn. Firms with no way to evaluate and capture that know-how watch their local agent wins stay local.

Third, a governance gap

15x agent growth without a central set of controls (who’s allowed to do what, which agents can act on which data, when an agent gets retired) is a security incident already booked for next year’s annual filing.

Opportunity if leaders act early

The most useful piece of the report is the four roles it lays out, and how they need to work together. Employees rethink work as briefing AI and checking what comes back. Leaders redesign processes so the goal is the outcome, and give agents room to act. IT runs the central controls for how agents operate day to day. Security builds monitoring and audit trails into the platform itself.

None of those roles is new. The coordination is. Companies that get the four working together turn agent activity into a system where every piece of work teaches the company something, and what the company learns reshapes how the next piece of work gets done. That’s why the early movers get hard to catch.

Every Frontier Firm needs to build Owned Intelligence - institutional know-how that compounds over time, is unique to the firm, and hard to replicate.

What leadership should do next

In the next 30-90 days, four moves.

Stop counting AI pilots. Start measuring AI absorption: the percentage of teams that have written down how their agents work, where humans take over, and what good output looks like.

Check whether your performance reviews quietly punish people for trying new things. When someone runs an experiment that doesn’t immediately ship results, does the review penalize them or back them up?

Have senior leaders use AI in the open, in front of their teams. The manager-modeling effect is one of the best-quantified payoffs in the data.

Set up a way to grade your agents, with three questions every Frontier Firm needs to answer: who reviews how agents are doing, who has authority to update how they work, and how does a local win get captured and scaled.
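The AI absorption measure described above is simple enough to track in a spreadsheet, but a minimal Python sketch makes the definition explicit. Everything here is an assumption for illustration: the `TeamRecord` fields, the team names, and the rule that a team counts only when all three items are written down. The report does not prescribe a formula.

```python
from dataclasses import dataclass


@dataclass
class TeamRecord:
    """One row per team; field names are hypothetical."""
    name: str
    agent_workflow_documented: bool   # how the team's agents work
    human_handoff_documented: bool    # where humans take over
    quality_bar_documented: bool      # what good output looks like

    def has_absorbed(self) -> bool:
        # Assumption: a team counts only if all three are written down.
        return (
            self.agent_workflow_documented
            and self.human_handoff_documented
            and self.quality_bar_documented
        )


def absorption_rate(teams: list[TeamRecord]) -> float:
    """Percentage of teams that have documented all three items."""
    if not teams:
        return 0.0
    absorbed = sum(1 for team in teams if team.has_absorbed())
    return 100.0 * absorbed / len(teams)


# Made-up example data, not figures from the report.
teams = [
    TeamRecord("finance-ops", True, True, True),
    TeamRecord("support", True, False, True),
    TeamRecord("legal", False, False, False),
    TeamRecord("sales-eng", True, True, True),
]

rate = absorption_rate(teams)
print(f"AI absorption: {rate:.0f}% of teams")
```

Requiring all three items is the strict reading of the definition. A looser variant could report each item separately to show which one teams skip most often.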

The four-role coordination doesn’t happen by accident. It happens when someone with budget authority decides that how the company runs is the work.

“The question worth asking next week: what percentage of our agent activity is producing institutional intelligence we can compound, versus local productivity gains we can’t?”

References

Microsoft WorkLab. 2026 Work Trend Index Annual Report: Agents, human agency, and the opportunity for every organization. May 2026. microsoft.com/worklab/work-trend-index

Survey: 20,000 full-time knowledge workers across 10 markets (Australia, Brazil, France, Germany, India, Italy, Japan, Netherlands, UK, US), fielded by Edelman Data x Intelligence, February 18 - April 20, 2026.

Telemetry: Microsoft 365 Copilot and SharePoint agent activity, March 2025 - March 2026.

Microsoft People Science Agentic Teaming & Trust Survey, July 2025 (1,800 workers; 819 leaders, 520 managers, 461 individual contributors).

Foreword by Dr. Karim Lakhani (Harvard Business School).