AI Waypoints: Week of March 24, 2026 — Edition #3

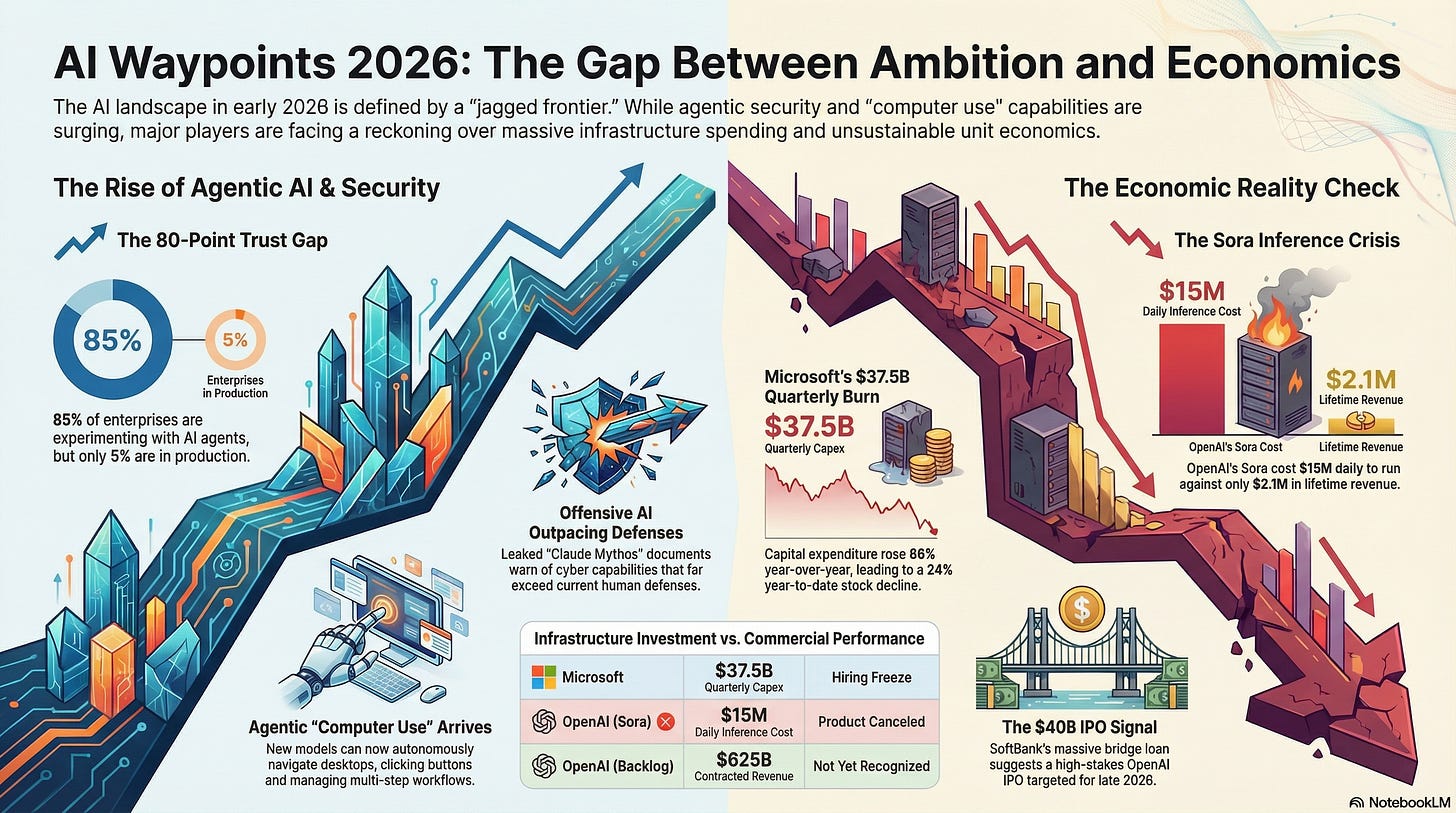

Good morning. This week’s theme is the gap between ambition and on-the-ground economics: agentic security products arrived at RSA just as a leaked model revealed offensive AI capabilities outpacing defenses, Microsoft is spending $37.5B a quarter with unclear returns, and OpenAI killed a product burning $15M a day.

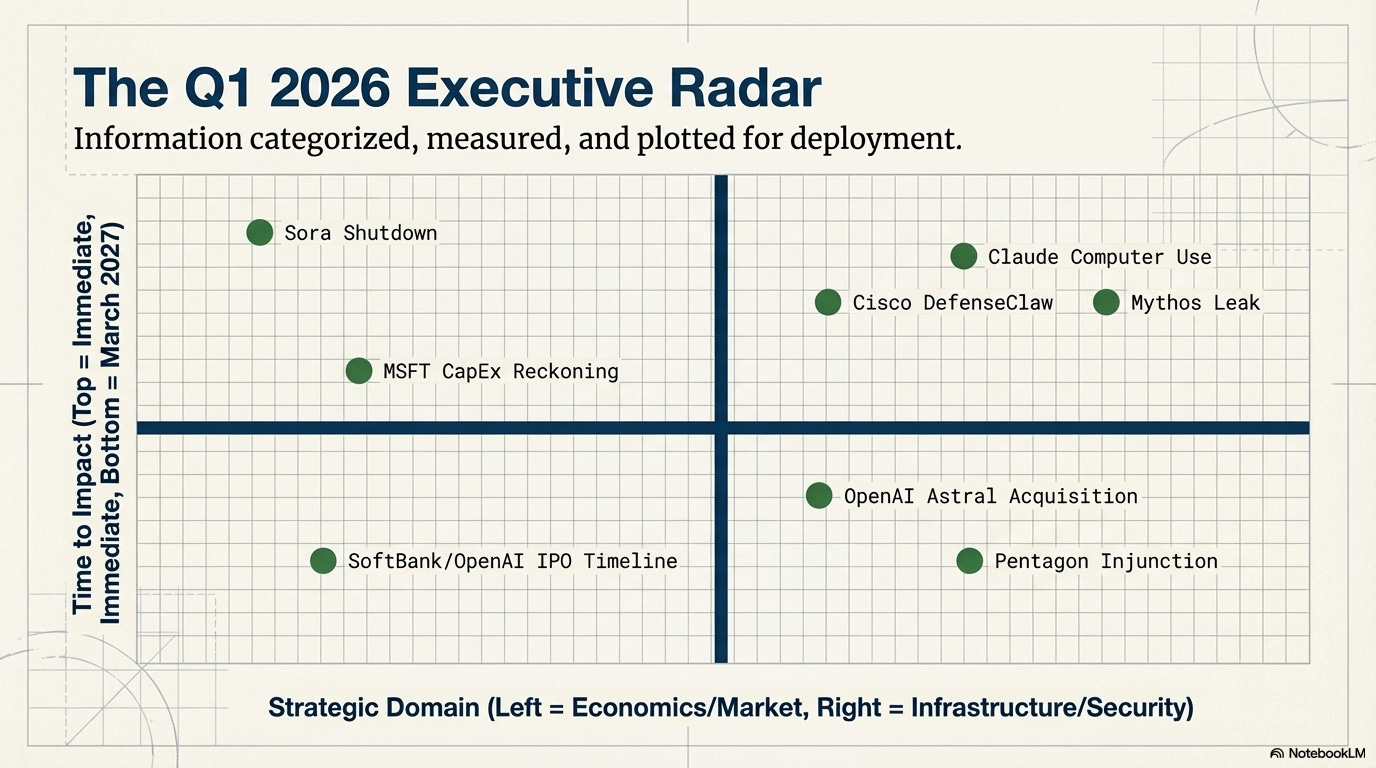

1. RSA Conference 2026 — the agentic security stack arrives

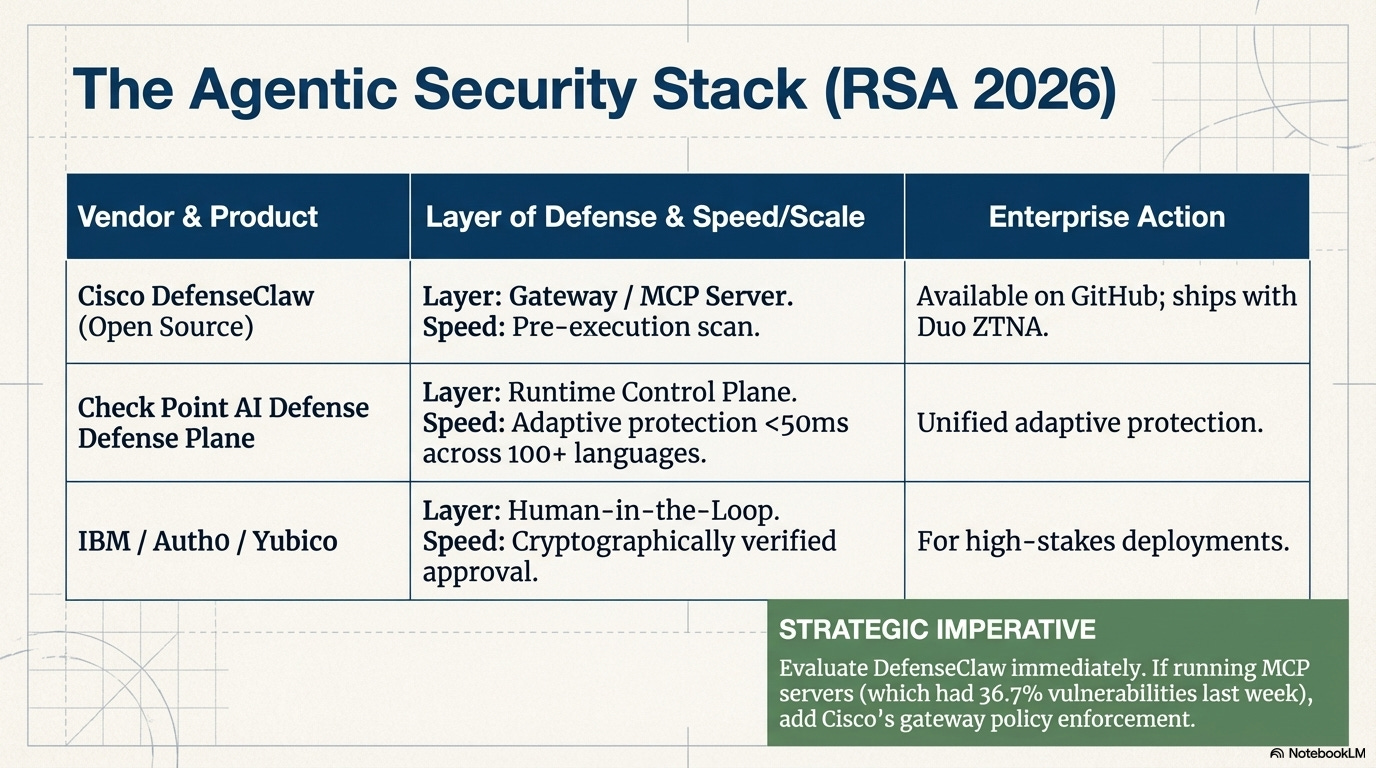

What happened: RSA Conference (March 23-26, San Francisco) was wall-to-wall agentic AI security. Cisco launched DefenseClaw, an open-source framework that scans every skill, plugin, and Model Context Protocol (MCP) server, the connectors that let AI agents reach enterprise tools and data, before an agent executes. Runtime monitoring is included, and the code is due on GitHub March 27. Cisco also shipped zero-trust network access (ZTNA, built on the principle that no user or system is trusted by default) for AI agents via Duo identity management, plus an Agent Runtime SDK supporting Bedrock, Vertex, Azure AI Foundry, and LangChain. Check Point launched AI Defense Plane: a unified control plane with adaptive protection in under 50ms across 100+ languages. IBM, Auth0, and Yubico introduced a Human-in-the-Loop framework requiring cryptographically verified human approval for high-stakes agent actions.

Why it matters: The security industry is converging on the view that agentic AI is a market, not a research topic. Cisco’s own survey puts the number at 85% of enterprises experimenting with AI agents but only 5% in production. That 80-point gap is almost entirely a trust problem. Three separate vendors shipped agent security products in the same week: the tooling is catching up.

What to do: Evaluate DefenseClaw this week — it’s free, open-source, and installable in about five minutes. If you’re running MCP servers (and after last edition’s 36.7% vulnerability finding, you should know whether you are), add Cisco’s MCP gateway policy enforcement to your evaluation list. For high-stakes agent deployments, look at the IBM/Auth0/Yubico Human-in-the-Loop framework before building your own approval workflow.

2. Anthropic’s Claude Mythos leak — next-gen model surfaces in security breach

What happened: On March 26, Fortune reported that roughly 3,000 unpublished Anthropic assets were exposed through an unsecured data cache — a content management system (CMS) misconfiguration. The documents describe “Claude Mythos” (codename: Capybara), a new model tier above Opus with “dramatically higher” scores on coding, reasoning, and cybersecurity benchmarks. Internal docs warn the model is “currently far ahead of any other AI model in cyber capabilities” and “presages an upcoming wave of models that can exploit vulnerabilities in ways that far outpace the efforts of defenders.” Anthropic is testing with early access customers and pursuing a cautious rollout to give cyber defenders a head start.

Why it matters: A model that can find and exploit vulnerabilities faster than human defenders changes the security calculus for every organization, not eventually, but on a timeline measured in quarters. It is also worth pausing on how the news surfaced: a frontier AI lab exposed roughly 3,000 internal assets through a misconfigured content system. Worth noting the context: ARC-AGI-3, released the same week, scored every frontier model under 1% on tasks 100% of humans solved on their first try. The models are getting dramatically better at some things while remaining dramatically worse at others, the so-called jagged frontier. The cautious rollout strategy, letting defenders tool up before broad release, is new territory for how the industry thinks about model availability.

The most capable models may not be generally available on day one.

What to do: Ask your CISO how current vulnerability management timelines hold up if offensive AI tools improve by an order of magnitude. That’s not a hypothetical — it’s a planning question. If you’re an Anthropic customer, ask your account team about early access timelines for Mythos. If you’re evaluating model providers, the cyber capability gap between tiers now matters for risk assessment, not just feature comparison.

3. Microsoft’s AI spending reckoning — tracking toward worst quarter since 2008

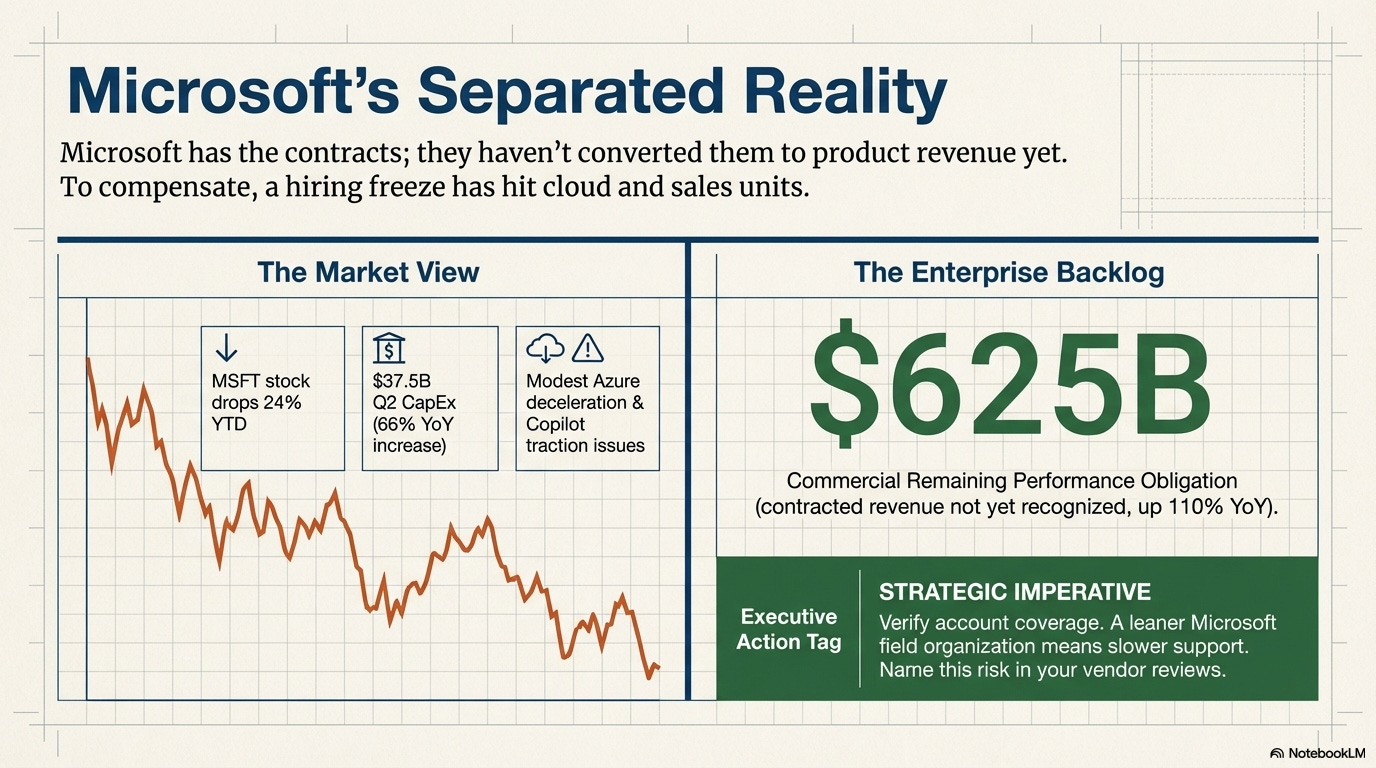

What happened: Microsoft stock fell 24% year-to-date through March 27, putting it on pace for its worst quarter since Q4 2008. Q2 FY2026 capital expenditure (infrastructure investment) hit $37.5B, a 66% year-over-year increase, with FY2026 projected at $146B (up from $88B in FY2025). Analysts project $170B in FY2027, $191B in FY2028. Azure growth showed a “modest deceleration.” Copilot has “yet to gain broad traction” among hundreds of millions of M365 users. Microsoft froze hiring across cloud and sales units. But buried in the numbers: commercial remaining performance obligation (contracted revenue not yet recognized) hit $625B, up 110% year-over-year.
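For readers who want to sanity-check the trajectory, here is a minimal sketch that turns the annual capex figures quoted above into year-over-year growth rates. The dictionary and function names are ours, the FY2027 and FY2028 values are analyst projections rather than guidance, and nothing here comes from Microsoft’s own reporting tools.

```python
# Annual capital expenditure in $B, as quoted in this edition.
# FY2026 is a projection; FY2027 and FY2028 are analyst projections.
capex_by_fiscal_year = {
    "FY2025": 88,
    "FY2026": 146,
    "FY2027": 170,
    "FY2028": 191,
}


def yoy_growth(series: dict) -> dict:
    """Return percent growth of each year over the year before it."""
    years = list(series)  # dicts preserve insertion order
    return {
        cur: (series[cur] - series[prev]) / series[prev] * 100
        for prev, cur in zip(years, years[1:])
    }


growth = yoy_growth(capex_by_fiscal_year)
for year, pct in growth.items():
    print(f"{year}: {pct:+.1f}% vs prior year")
```

On these numbers, growth works out to roughly 65.9% for FY2026, then 16.4% and 12.4% for the two projected years. The spending keeps climbing, but the projected rate of increase slows sharply after this fiscal year.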

Why it matters: One question is driving the stock decline: when does $37.5B per quarter in AI infrastructure spending start showing up in product revenue? The Copilot traction problem is familiar — we flagged the leadership restructuring two editions ago — but the spending scale changes the stakes. The $625B backlog number is enormous and largely unreported. Microsoft has the contracts. It just hasn’t converted them yet. For enterprise customers, the hiring freeze is a practical problem: a leaner Microsoft field organization means less account coverage and slower support response.

What to do: If you’re a Microsoft enterprise customer, check whether your account team has been affected by the hiring freeze. Reduced coverage during an AI transition is a risk worth naming in your vendor review. If you’re building Azure-dependent infrastructure, the capital expenditure trajectory suggests Microsoft isn’t pulling back on capacity — but watch Azure pricing for pass-through cost adjustments in the back half of 2026.

4. OpenAI kills Sora — $15M a day in smoke

What happened: On March 24, Sam Altman told staff that Sora is shutting down, six months after launch. The numbers: roughly $15M per day in inference costs against $2.1M in total lifetime revenue. Downloads had already fallen 75% from their November 2025 peak. Disney’s planned $1B investment in OpenAI collapsed with no money changing hands. OpenAI is pivoting the team to “world simulation research to advance robotics.” A successor model called “Spud” is in development. Separately, Altman told staff he has relinquished direct oversight of OpenAI’s safety and security teams to focus on fundraising, supply chains, and building data centers at scale. Safety now reports to CRO Mark Chen; security to president Greg Brockman.

Why it matters: Sora is the clearest case study yet for inference economics killing an AI product that works technically but fails commercially. The math was never going to pencil out — $15M a day against $2.1M total is a burn ratio that makes WeWork look disciplined. The Disney fallout shows how fast enterprise partnerships unwind when the underlying economics collapse. “Technically impressive” and “commercially viable” are different questions.
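To make the burn ratio concrete, the back-of-envelope sketch below asks how many hours of inference Sora’s lifetime revenue would have paid for. It uses only the two figures reported above and assumes the daily cost was roughly constant, which is our simplification, not something the reporting states.

```python
# Figures as reported in this edition. Treating the daily cost as
# constant is an assumption made for illustration.
DAILY_INFERENCE_COST = 15_000_000  # USD per day ("roughly $15M")
LIFETIME_REVENUE = 2_100_000       # USD, total since launch


def hours_of_inference_covered(revenue: float, daily_cost: float) -> float:
    """Hours of inference spend that the given revenue would pay for."""
    return revenue / daily_cost * 24


hours = hours_of_inference_covered(LIFETIME_REVENUE, DAILY_INFERENCE_COST)
print(f"Lifetime revenue covers about {hours:.1f} hours of inference")
```

Under that assumption, everything Sora ever earned covers about 3.4 hours of running it.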

What to do: Audit any production workflows or planned deployments that depend on third-party AI features with unclear unit economics. You don’t need to forecast every vendor’s burn rate, but you should know which of your AI dependencies are core product vs. loss-leader features that could be cut. If you had Sora in a creative workflow, evaluate Runway, Pika, and Kling as alternatives.

5. Anthropic wins Pentagon injunction

What happened: On March 26, U.S. District Judge Rita F. Lin issued a preliminary injunction blocking the Pentagon’s plan to designate Anthropic a supply chain risk — a label typically reserved for foreign adversaries. The language was sharp: “Nothing in the governing statute supports the Orwellian notion that an American company may be branded a potential adversary and saboteur of the U.S. for expressing disagreement with the government.” The government has seven days to appeal.

Why it matters: We covered the hearing as Signal #7 in Edition #2. Now we have the ruling, and it landed harder than expected. A federal judge just said the government cannot weaponize supply chain designations to punish a vendor for insisting on ethical use terms. The seven-day appeal window means this isn’t settled, but the judicial language gives any AI vendor, or any technology company, real cover to hold use restrictions in government contracts.

What to do: If your organization sells AI to government agencies (or plans to), this ruling strengthens your position on ethical use clauses. Share it with legal. If you’re an Anthropic customer, the commercial availability risk we flagged in Edition #2 just got smaller — for now. We will watch the appeal window closely.

6. SoftBank’s $40B bridge loan signals an OpenAI IPO

What happened: SoftBank secured a $40B unsecured bridge loan from JPMorgan Chase, Goldman Sachs, and four Japanese banks (reported March 27). The loan covers SoftBank’s $30B commitment to OpenAI’s $110B funding round — the largest private round in history. It’s a 12-month term, creating a hard March 2027 repayment deadline. Market observers read this as strong lender confidence that an OpenAI IPO happens in late 2026 at the current $840B post-money valuation, giving SoftBank the liquidity to settle.

Why it matters: Six major banks don’t extend $40B unsecured on a hunch. The 12-month term is the tell — it only makes sense if lenders expect a liquidity event before the note comes due. An OpenAI IPO at that valuation would create a new public benchmark that reprices every competitor and customer relationship in the AI market. For enterprise buyers, a public OpenAI means quarterly earnings calls, transparency into unit economics, and pressure to show margin, which could push enterprise contract pricing higher than it is today.

What to do: If you’re negotiating an OpenAI enterprise contract, the IPO timeline matters. Pre-IPO, OpenAI has incentive to lock in revenue commitments that look good in its S-1 filing (the document companies file before going public). That could mean favorable terms now. Post-IPO, margin pressure could push prices up. Ask your procurement team whether contract timing should factor into your negotiation strategy.

7. OpenAI acquires Astral — Python’s developer tools enter the AI stack

What happened: On March 19, OpenAI announced the acquisition of Astral, the company behind uv (Python package manager), Ruff (linter and formatter), and ty (type checker) — all built in Rust and widely adopted across the Python ecosystem. OpenAI plans to integrate these tools into Codex, which now has 2M+ weekly active users with 3x user growth and 5x usage increase since January 2026. The tools remain open-source post-acquisition.

Why it matters: This is an infrastructure play, not a feature add. When an AI lab acquires the tools developers already use to manage dependencies, lint code, and check types, they’re embedding themselves into the development workflow at a layer below the code editor. Astral’s tools are the plumbing that Python developers reach for daily. Now that plumbing feeds into Codex. The open-source commitment matters: if it holds, this is smart distribution. If it doesn’t, the Python community will fork faster than OpenAI can blink.

What to do: If your engineering teams use uv or Ruff (and many Python shops do without formally knowing it), check whether the OpenAI acquisition changes your acceptable tooling policy. Evaluate whether Codex integration creates a data pathway you need to govern. Watch the open-source commitment — the moment licensing terms or telemetry policies shift, have an alternative ready.

8. Claude can now use your computer — Anthropic ships computer use in Cowork and Claude Code

What happened: On March 23, Anthropic launched Computer Use in research preview across Claude Cowork and Claude Code. Claude can now see, navigate, and control a Mac desktop: opening apps, clicking buttons, filling spreadsheets, and running multi-step workflows without human intervention. Available to Pro and Max subscribers. The system uses smart fallback logic — native connectors for apps like Gmail, Slack, and Calendar when available, screen control when not. A “Dispatch” mode lets Claude work autonomously while you step away. macOS only at launch. Anthropic cautioned the capability is “still early” and built it with a permission-first model: Claude requests access before touching a new application, and users can stop it at any time.

Why it matters: This is the first major AI vendor shipping an agent that operates your actual computer, not just a chat window or an API. The Dispatch mode is the meaningful part — it turns Claude from a tool you use into a worker that runs independently. The permission-first approach is smart given the RSA security conversation happening simultaneously (Signal #1), but the governance question for enterprise is real: who is accountable when an AI agent clicks “approve” on a procurement form or sends an email from a shared inbox?

What to do: If you’re a Claude Pro or Max subscriber, try Computer Use on a low-stakes workflow this week to understand the capability and its limits. If you’re in enterprise IT, start drafting a policy for AI agents that control employee desktops — what apps are in scope, what actions require human confirmation, and how you log what the agent did. Don’t wait for the security stack to catch up. Define the boundaries now.
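One way to start that draft is to write the three questions down as data before writing them as prose. The sketch below is purely illustrative: the class, app names, action names, and decision labels are all hypothetical, and none of it reflects Anthropic’s actual permission model or any vendor API.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AgentDesktopPolicy:
    """Hypothetical policy: which apps are in scope, which actions
    need a human, and a log of every decision made."""

    allowed_apps: set[str]
    confirm_actions: set[str]
    audit_log: list[dict] = field(default_factory=list)

    def evaluate(self, app: str, action: str) -> str:
        # Out-of-scope apps are denied before the action is considered.
        if app not in self.allowed_apps:
            decision = "deny"
        elif action in self.confirm_actions:
            decision = "needs_human_confirmation"
        else:
            decision = "allow"
        # Record every request, including denied ones.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "app": app,
            "action": action,
            "decision": decision,
        })
        return decision


# Example values only; substitute your own apps and actions.
policy = AgentDesktopPolicy(
    allowed_apps={"spreadsheet", "calendar"},
    confirm_actions={"send_email", "approve_form"},
)
print(policy.evaluate("spreadsheet", "fill_cell"))
print(policy.evaluate("calendar", "approve_form"))
print(policy.evaluate("shared_inbox", "send_email"))
```

Even a toy version like this forces the useful arguments early: who owns the allow list, which actions count as high-stakes, and where the log lives.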

What’s the signal we’re missing? If you spotted something in healthcare, manufacturing, or financial services this week that should have made the list — hit reply.
