AI Waypoints: Week of March 17, 2026 — Edition #2

Ten enterprise AI signals to guide your week.

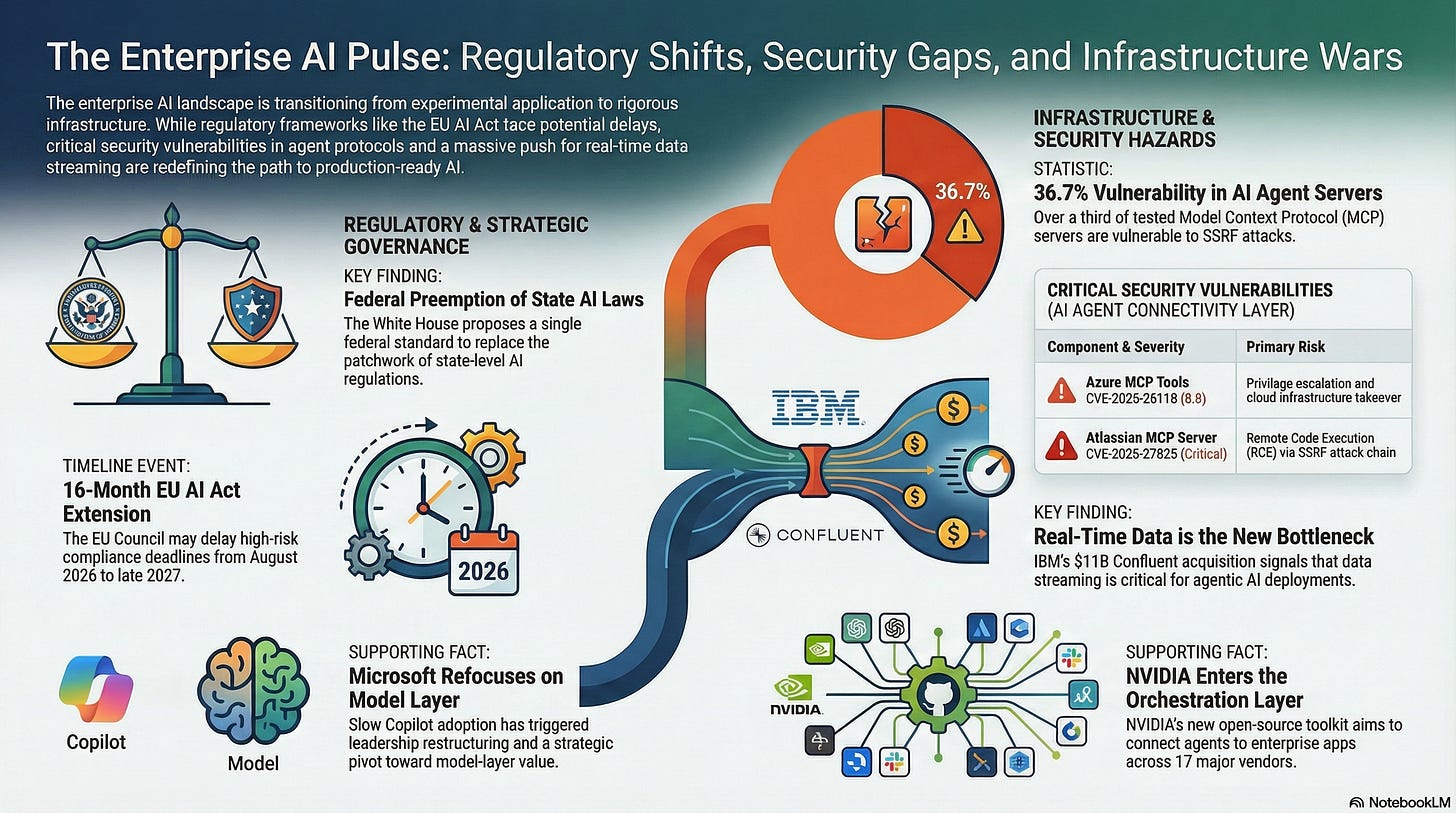

Good morning. This week’s signals hit the scaffolding around enterprise AI: regulatory frameworks are being redrawn, major vendors are restructuring, and 36.7% of the MCP servers that connect AI agents to enterprise tools were found vulnerable in a recent scan.

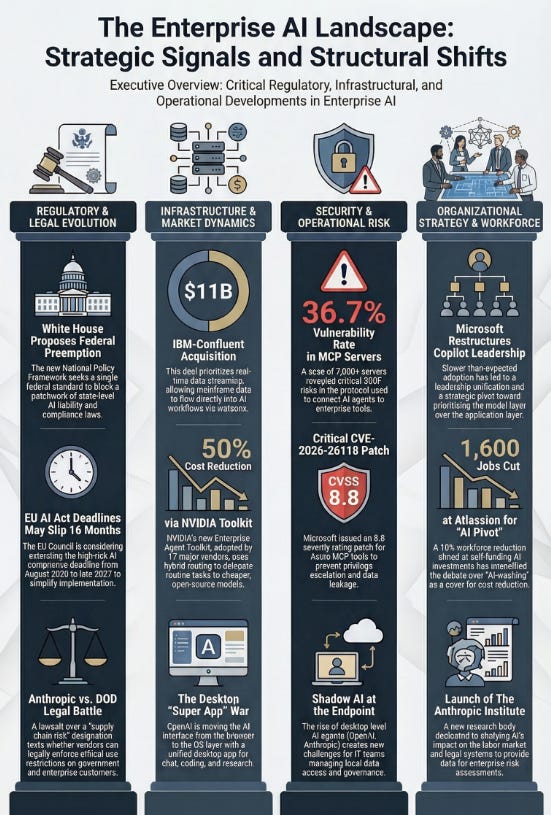

1. White House Drops National AI Framework — Calls for Federal Preemption of All State AI Laws

What happened: On March 20, the Trump administration released a four-page National Policy Framework for AI, directing Congress to establish a single federal standard that would preempt all state-level AI laws. The framework explicitly calls for blocking states from imposing liability on AI developers for third-party misuse. It also proposes regulatory sandboxes, faster data center permitting, and no new federal AI regulatory body. It is a declaration of intent, not legislation; Congress still has to act.

Why it matters: If enacted, this eliminates the patchwork compliance problem — no more tracking Colorado, California, Texas, and Illinois AI rules in parallel. But it also removes the strongest enforcement mechanisms we currently have. The more likely near-term outcome is a split: federal preemption stalls in Congress while state laws continue accumulating. Enterprise compliance architecture built for a single federal standard will need to flex both directions.

What to do: Brief your legal and compliance teams on the framework’s seven pillars this week. Map which state AI laws affect your operations — Colorado AI Act, California, Illinois Biometric Information Privacy Act (BIPA), and Texas DAIA are the most active right now. Don’t pause compliance work on the assumption that preemption will pass quickly. Congress rarely moves fast.

2. IBM Closes $11B Confluent Acquisition — Real-Time Data Is Now the Enterprise AI Infrastructure Play

What happened: IBM completed its acquisition of Confluent on March 17, creating an integrated stack from mainframe (IBM Z) to data streaming (Confluent/Kafka) to AI application layer (watsonx). Confluent serves 6,500+ enterprises, including 40% of the Fortune 500. The deal enables mainframe transactional data to stream directly into AI workflows via Confluent connectors, a connection that used to require a lot of custom plumbing.

Why it matters: This is a data infrastructure land grab dressed as an AI acquisition. We’re watching the argument play out in real dollars: real-time data streaming, not model capability, is what’s blocking production AI and agent deployments. Financial services and insurance firms on mainframes should pay close attention: IBM just made the path from legacy to AI much easier, and much more locked-in. Kafka alternatives like Redpanda and AWS MSK are worth keeping in your back pocket.

What to do: If you run Confluent or Kafka today, check your contract terms and renewal timeline. IBM acquisitions typically bring pricing changes within 12-18 months of close, and historically prices trend one way: up. If you’re planning agentic AI deployments, assess whether your data infrastructure can deliver real-time feeds.

Mainframe shops: request an IBM briefing on the Z-to-watsonx pipeline now, before the sales motion changes.

3. Microsoft Restructures Copilot Leadership After Slow Adoption

What happened: Microsoft announced on March 17 that it’s unifying commercial and consumer Copilot teams under a new leader, Jacob Andreou (ex-Snap). Mustafa Suleyman was pulled back from overseeing all Microsoft AI and refocused specifically on model development. CNBC reported the restructuring was driven by “slower-than-expected adoption.” Suleyman’s statement, “most of the future value is going to accrue to the model layer,” is a notable reversal from Microsoft’s previous position that the application layer matters most.

Side note: Suleyman’s book, The Coming Wave, is a great read!

Why it matters: When a vendor restructures leadership because of slow adoption, that’s a product-market fit signal, not just org design. The consumer-commercial unification also suggests Microsoft may blur the line between personal and enterprise Copilot — which could create headaches for IT teams managing what data flows where. Suleyman’s comment about model-layer value deserves a bookmark: if Microsoft pivots strategy under new leadership, the Copilot roadmap customers committed to could look different in 12 months.

What to do: If you’re a Copilot customer, request a roadmap briefing from your Microsoft account team. Leadership changes tend to either accelerate or kill specific features. If you’re still evaluating, extend your pilot period rather than committing now. Watch for pricing adjustments in the next 90 days. In particular, the introduction of E7 licensing, which includes Agent 365 licensing, may open up negotiating opportunities.

4. MCP Servers Have a 36.7% Vulnerability Rate — Patch Your Azure Tools Now

What happened: BlueRock Security scanned 7,000+ Model Context Protocol (MCP) servers, the standard for connecting AI agents to enterprise tools and data, and found 36.7% vulnerable to server-side request forgery (SSRF). Their research showed that Microsoft’s MarkItDown MCP server allowed arbitrary calls, enabling privilege escalation, data leakage, and full cloud infrastructure takeover. Microsoft patched CVE-2026-26118 (CVSS 8.8, rated High on the 0-10 severity scale) in Azure MCP Server Tools on March 10. A separate critical SSRF-to-remote-code-execution (RCE) attack chain was also found in the most widely deployed Atlassian MCP server (CVE-2026-27825).

Why it matters: If teams are deploying MCP servers without a security review, there’s a real chance of SSRF exposure right now. This is the early cloud misconfiguration pattern all over again: fast adoption, no established security baseline, vulnerabilities that look minor until they’re catastrophic.

What to do: Inventory all MCP server deployments in your environment immediately. Apply Microsoft’s March patches for Azure MCP Server Tools. Set up a security review gate before any new MCP server goes live. BlueRock’s MCP Trust Registry is one option for vetting third-party servers. Don’t wait on this one.
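
For teams building that review gate, here is a minimal sketch of the kind of check reviewers can look for in any MCP tool that fetches a caller-supplied URL. It is an illustration only, not BlueRock’s method or Microsoft’s patch; the function names and the allowed-scheme list are assumptions made for this example, and it uses only the Python standard library.

```python
import ipaddress
import socket
from urllib.parse import urlparse

# Assumption for this sketch: the tool only ever needs plain web URLs.
ALLOWED_SCHEMES = {"http", "https"}


def is_blocked_address(ip_text: str) -> bool:
    """Return True for addresses a URL-fetching tool should not reach."""
    ip = ipaddress.ip_address(ip_text)
    return (
        ip.is_private
        or ip.is_loopback
        or ip.is_link_local  # covers 169.254.169.254, the cloud metadata address
        or ip.is_reserved
        or ip.is_multicast
        or ip.is_unspecified
    )


def is_url_allowed(url: str) -> bool:
    """Hypothetical pre-fetch check: reject URLs that resolve to internal hosts."""
    try:
        parsed = urlparse(url)
        port = parsed.port or 443
    except ValueError:
        return False  # malformed URL or port
    if parsed.scheme not in ALLOWED_SCHEMES or not parsed.hostname:
        return False
    try:
        resolved = socket.getaddrinfo(parsed.hostname, port)
    except socket.gaierror:
        return False  # unresolvable hosts are rejected rather than retried
    # Every resolved address must be public; one internal address fails the URL.
    return all(not is_blocked_address(info[4][0]) for info in resolved)
```

A check like this is a starting point rather than a complete defense. It does not cover redirects or DNS answers that change between the check and the actual fetch, so egress filtering at the network layer still matters.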

5. NVIDIA Launches Enterprise Agent Toolkit — 17 Major Software Vendors Already Adopting

What happened: At GTC 2026 on March 16, NVIDIA launched its Agent Toolkit: an open-source stack including Nemotron (agentic reasoning models), AgentIQ (enterprise knowledge integration), and OpenShell (policy-based security runtime for autonomous agents). Seventeen enterprise software vendors announced adoption, including Adobe, Atlassian, Box, Cisco, CrowdStrike, Salesforce, SAP, and ServiceNow. The architecture routes complex tasks to frontier models while delegating routine work to Nemotron, with NVIDIA claiming 50%+ cost reduction.

Why it matters: NVIDIA is positioning itself as the orchestration layer between enterprise software and AI models, not just the GPU vendor. If 17 major platforms adopt this toolkit, NVIDIA could answer the “how do I connect agents to enterprise apps” question before Microsoft, Google, or Anthropic do. The hybrid routing model (frontier plus open-source) is also the first vendor-backed implementation of the cost-arbitrage strategy many enterprise teams want but haven’t been able to build themselves.

What to do: If you use any of the 17 adopting platforms, ask your vendor reps when AgentIQ and OpenShell integration reaches general availability (GA). Evaluate whether NVIDIA’s orchestration layer competes with or complements your existing Microsoft or Anthropic investments. Benchmark NVIDIA’s 50% cost claim against your actual agentic AI spend before buying into the architecture.
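
To make that benchmark concrete, here is a rough back-of-the-envelope sketch of the blended-cost arithmetic behind hybrid routing. The prices and model names are placeholders invented for illustration, not NVIDIA’s or any vendor’s figures; swap in your own per-token costs and your measured share of routine work.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPrice:
    name: str
    usd_per_million_tokens: float


def savings_pct(routine_share: float, frontier: ModelPrice, routine: ModelPrice) -> float:
    """Percent saved versus sending every token to the frontier model."""
    if not 0.0 <= routine_share <= 1.0:
        raise ValueError("routine_share must be between 0 and 1")
    blended = (
        routine_share * routine.usd_per_million_tokens
        + (1.0 - routine_share) * frontier.usd_per_million_tokens
    )
    return 100.0 * (1.0 - blended / frontier.usd_per_million_tokens)


def share_needed(target_savings_pct: float, frontier: ModelPrice, routine: ModelPrice) -> float:
    """Share of tokens that must go to the cheaper model to hit a savings target."""
    price_ratio = routine.usd_per_million_tokens / frontier.usd_per_million_tokens
    return (target_savings_pct / 100.0) / (1.0 - price_ratio)


# Placeholder prices, assumed for this example only.
FRONTIER = ModelPrice("frontier-model", 10.0)
ROUTINE = ModelPrice("routine-model", 1.0)

if __name__ == "__main__":
    print(f"Savings at 60% routine share: {savings_pct(0.6, FRONTIER, ROUTINE):.1f}%")
    print(f"Share needed for 50% savings: {share_needed(50.0, FRONTIER, ROUTINE):.1%}")
```

With these placeholder prices, a little over half of all tokens would have to be routed to the cheaper model to reach a 50% reduction. The sketch ignores quality differences, retries, and orchestration overhead, all of which would reduce the real savings.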

6. Atlassian Cuts 1,600 Jobs Citing AI — “AI-Washing” Debate Intensifies

What happened: Atlassian announced 1,600 layoffs (10% of its workforce) on March 11 to “self-fund” AI and enterprise sales investments. CTO Rajeev Rajan stepped down the same day. CEO Mike Cannon-Brookes stated it would be “disingenuous to pretend AI doesn’t change the mix of skills needed.” The announcement follows Block’s 4,000-person cut (40% of workforce) in late February. Both companies saw stock prices rise, and both earned the “AI-washing” label from analysts who view the AI rationale as cover for traditional cost reduction. Expected charges: $225-236M.

Why it matters: Whether the AI justification is genuine or not, the market is rewarding AI-labeled headcount cuts. That pressures every enterprise to have an AI workforce strategy on paper, regardless of what’s actually driving decisions. For Atlassian customers: a 10% workforce cut plus CTO departure during an announced AI pivot is a product risk signal. Rovo’s AI agent roadmap has to survive this transition.

What to do: Watch your vendors’ earnings calls for AI-justified restructuring — it often signals product roadmap instability. Internally, get ahead of the AI-washing label by tying any AI-related workforce changes to measurable productivity data before announcing them. If you’re an Atlassian customer, ask your account team explicitly whether the Rovo agent timeline is still on track post-restructuring.

7. Anthropic Sues the Pentagon — Hearing Today Over “Supply Chain Risk” Designation

What happened: Anthropic sued the DOD after being designated a “supply chain risk” following a breakdown in contract negotiations. The dispute centers on use restrictions — Anthropic sought limits on surveillance and autonomous weapons applications, while the DOD deemed those conditions incompatible with operational requirements. The DOD subsequently signed a deal with OpenAI. Court filings from March 20 revealed the Pentagon told Anthropic the two sides were “nearly aligned” just one week before the designation was issued. A preliminary injunction hearing is scheduled for today, March 24, in San Francisco.

Why it matters: This is no longer a government procurement story. It tests whether an AI vendor can legally enforce ethical use restrictions on a customer. If Anthropic loses, every AI vendor’s acceptable-use policy gets weaker at the negotiating table. If Anthropic wins, vendors gain real leverage to restrict how their models are deployed. Either outcome touches the AI vendor contracts we’re negotiating right now. The OpenAI-as-government-alternative dynamic also raises the possibility of a preferred federal vendor emerging.

What to do: Review the acceptable use and ethical use clauses in your AI vendor contracts. Understand whether your vendors have the legal standing to restrict how you deploy their models and whether those clauses apply to your specific use cases. If you’re running multi-vendor, assess concentration risk if government actions expand to affect commercial availability.

8. EU Agrees to Simplify the AI Act — High-Risk Deadline Could Slip 16 Months

What happened: On March 13, the EU Council adopted its negotiating position on “Omnibus VII,” a package to simplify the AI Act. Key changes: the high-risk AI compliance deadline (currently August 2026) may be extended by up to 16 months; exemptions for small and medium enterprises (SMEs) expand to small mid-cap companies; more sensitive personal data processing is permitted for bias detection; and the AI Office receives stronger enforcement powers. The European Parliament supported the postponement on March 16. Trialogue negotiations between the Council and Parliament are next.

Why it matters: If you’ve been racing to hit the August 2026 high-risk compliance deadline, there may be breathing room ahead. But the rules aren’t going away. The SME exemption expansion also means smaller vendors and supply chain partners face less regulatory pressure, which could affect how we assess third-party AI governance. The bias detection carve-out for sensitive data processing matters most for HR tech, hiring tools, and any AI touching protected characteristics.

What to do: Don’t stop EU AI Act compliance work — the delay isn’t final until trialogue concludes. Update your compliance timeline with two scenarios: August 2026 (no change) and December 2027 (16-month extension). If you deploy AI in hiring or HR, review the bias detection data processing changes with your Data Protection Officer (DPO) before assuming the carve-out applies.
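
If your compliance timeline lives in a script or a planning tool, the two scenarios can be derived rather than hard-coded. This is a small sketch at month-level precision; the helper name is made up for this example, and the day of the month is a placeholder because only the month is given above.

```python
from datetime import date


def add_months(start: date, months: int) -> date:
    """Shift a first-of-month date forward by a whole number of months."""
    total = start.year * 12 + (start.month - 1) + months
    return date(total // 12, total % 12 + 1, 1)


# Month-level precision only: the day is a placeholder.
CURRENT_DEADLINE = date(2026, 8, 1)

SCENARIOS = {
    "no change": CURRENT_DEADLINE,
    "16-month extension": add_months(CURRENT_DEADLINE, 16),
}

if __name__ == "__main__":
    for label, deadline in SCENARIOS.items():
        print(f"{label}: {deadline:%B %Y}")
```

If trilogue settles on a shorter extension, changing the single month count updates the second scenario.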

9. OpenAI Is Building a Desktop “Super App” — ChatGPT, Codex, and a Browser in One

What happened: OpenAI is developing a unified desktop application that merges ChatGPT, Codex (coding), and Atlas (AI browser) into a single environment under CEO of Applications Fidji Simo and President Greg Brockman. The goal is to keep users in one app for chat, coding, research, and multi-step agentic tasks. Bloomberg reported on March 20 this is a direct response to Anthropic’s Claude Code and Claude Desktop gaining ground with developers. No launch date has been announced.

Why it matters: The AI interface war is moving from the browser tab to the desktop OS layer, just like Slack, Teams, and Zoom before it. For enterprise IT, an always-on AI desktop agent raises obvious questions: what local data can it access, will it be part of ChatGPT Enterprise or a separate license, and how fast will developers install it whether IT approves or not. Shadow AI at the endpoint is harder to catch than browser-based usage.

What to do: Add AI desktop agents to your next endpoint governance review. Draft an acceptable use policy for AI desktop applications before they ship. If you’re already governing Claude Desktop or Microsoft Copilot at the desktop level, extend the same framework proactively to cover OpenAI’s upcoming product.

10. Anthropic Launches a Research Institute for AI’s Economic and Societal Impact

What happened: Anthropic launched The Anthropic Institute on March 11, merging its Frontier Red Team, Societal Impacts, and Economic Research teams under co-founder Jack Clark. New hires include Matt Botvinick (ex-Google DeepMind, Yale Law) and Anton Korinek (AI economics). Focus areas are AI labor market displacement, AI and the legal system, and forecasting AI progress. The institute will “engage directly with workers facing job loss,” an unusually concrete commitment for a frontier lab.

Why it matters: Anthropic is building the “responsible AI vendor” brand, and the research outputs give enterprise customers something to cite in their own AI impact assessments. The labor market research lands squarely on anyone building an AI workforce strategy — vendor-backed economic data you can reference (or push back on) is useful. The Frontier Red Team integration also means Anthropic’s safety testing could become more visible and auditable, which shifts how enterprise due diligence conversations play out.

What to do: Keep an eye on the Anthropic Institute’s publications — their labor market and legal system research will likely produce data points you can use in AI business cases and risk assessments. If you’re an Anthropic customer, ask whether Institute red-team findings will show up in Claude’s enterprise safety documentation and whether you’ll get access for vendor reviews.

What did I miss? If you’re seeing signals from your vertical that didn’t make this edition — especially in financial services, healthcare, or manufacturing — hit reply.
