AI Waypoints: Week of April 27, 2026 — Edition #7

Oracle wires its database into Gemini Enterprise at GCP Next. Anthropic adds 5 GW with Amazon (8.5 GW total) and signs Japan's largest AI workforce deal with NEC.

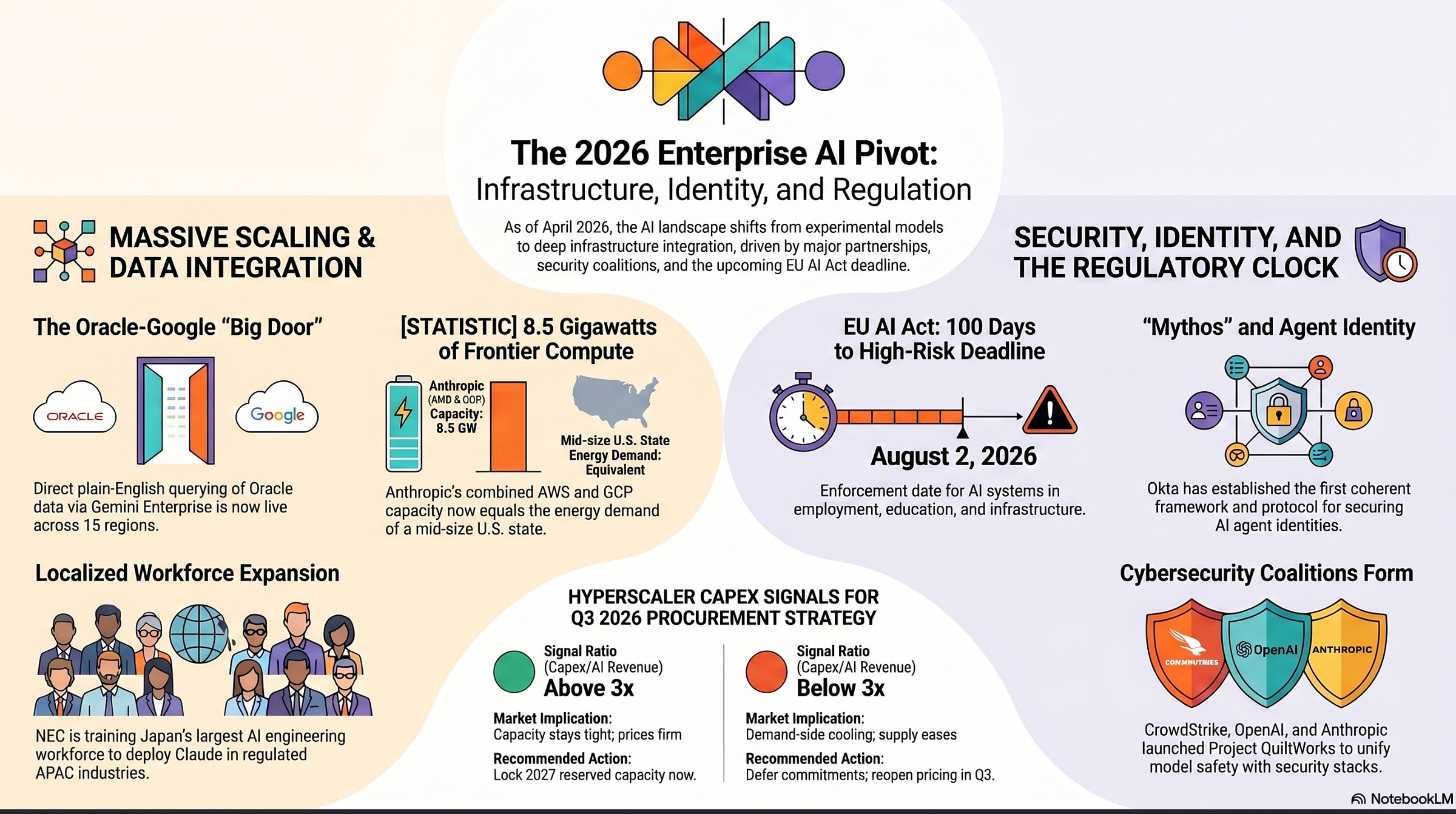

Good morning. This week Oracle wired its database into Google’s Gemini Enterprise, Anthropic locked in another five gigawatts of compute with Amazon and signed Japan’s largest AI workforce deal with NEC, and two security vendors used RSAC pre-week to claim the agent-identity slot. The EU AI Act enforcement clock crossed 100 days to high-risk applicability. The fun story is how fast the bills are coming due on what was bought in 2024 and 2025.

1. Oracle wires its database into Gemini Enterprise at Google Cloud Next

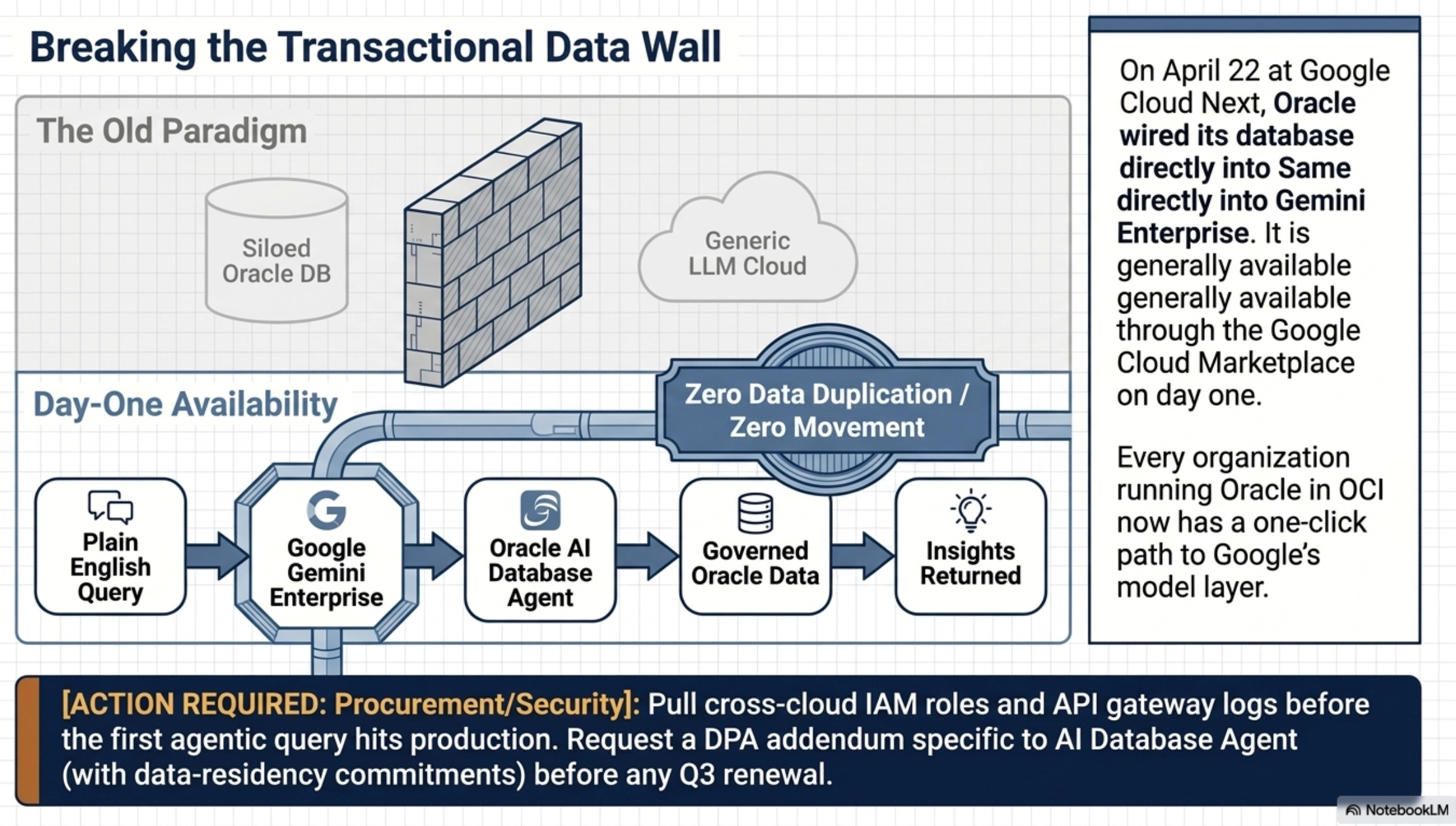

What happened: Oracle announced on April 22 at Google Cloud Next 2026 that the Oracle AI Database Agent for Gemini Enterprise is now generally available through the Google Cloud Marketplace. Authorized users can query Oracle AI Database@Google Cloud in plain English from Gemini Enterprise — the agent interprets each request, runs against governed Oracle data, and returns insights without moving or duplicating the data. The service spans 15 regions.

Why it matters: I have spent ten years watching enterprises wall off transactional Oracle data from anything resembling an LLM. That wall just got a nice big door, and Oracle and Google walked through it together, at a keynote, with marketplace availability on day one. Every organization running Oracle in OCI now has a one-click path to Google’s model layer, which means cross-cloud IAM, query lineage, and data residency become an audit problem this quarter.

What to do: Pull your cross-cloud IAM roles and API gateway logs before the first agentic query hits production workloads. Request a DPA addendum specific to AI Database Agent (including data-residency commitments) before any Q3 renewal goes back to procurement.

2. Anthropic and Amazon expand to five gigawatts of new compute

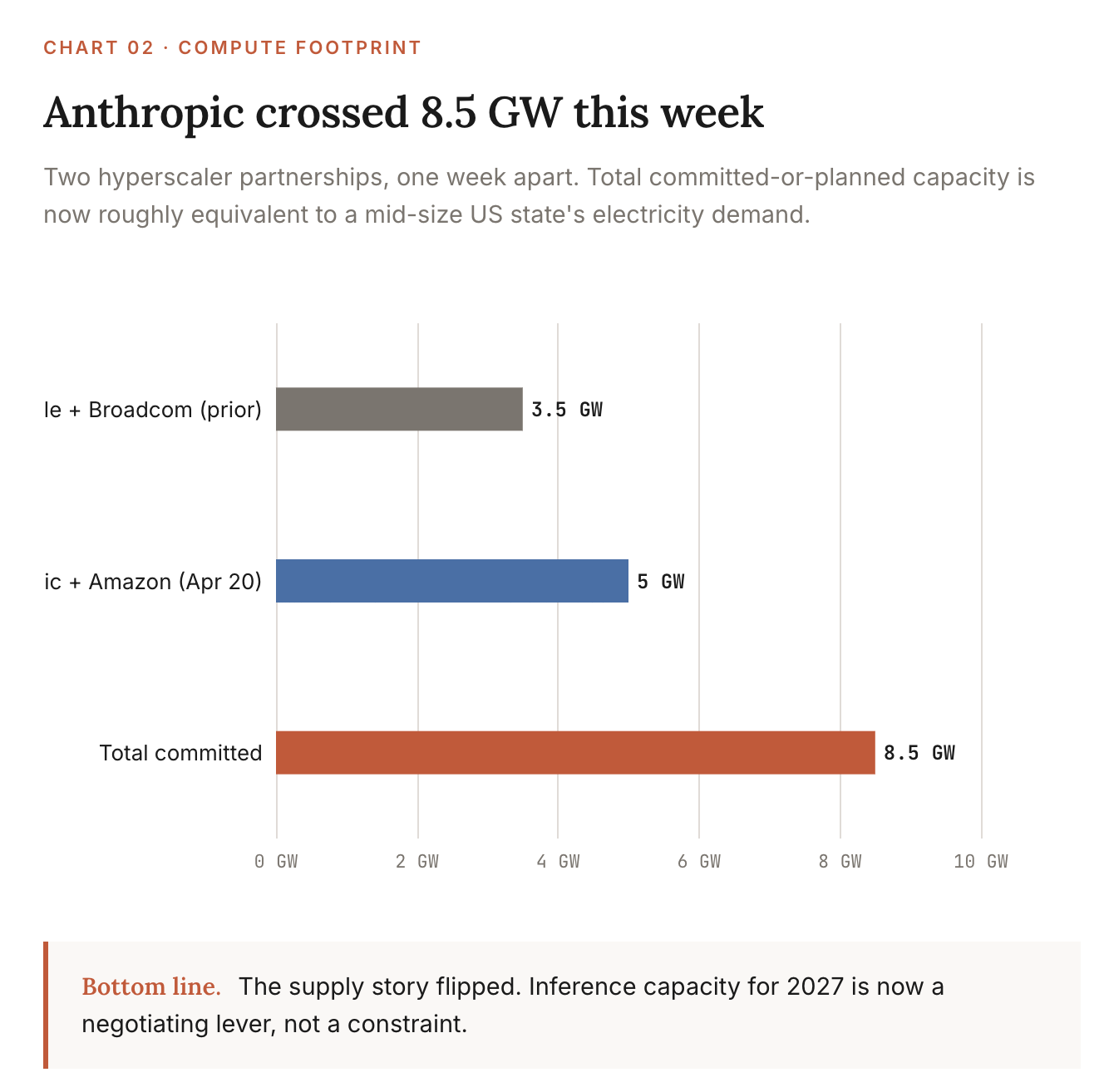

What happened: Anthropic and Amazon announced on April 20 an expanded compute partnership for up to 5 gigawatts of new capacity, building on the Trainium-anchored relationship from 2024. This lands one week after the Anthropic-Google-Broadcom 3.5 GW announcement covered in Edition 6, bringing Anthropic’s combined committed-or-planned compute footprint to about 8.5 gigawatts across two hyperscaler stacks.

One gigawatt powers about 750,000 homes. 8.5 GW is the peak electricity demand of a small US state — reserved by one AI lab. Anthropic has it locked across two of the three big clouds (AWS and Google), so if one runs short, the other covers. AI capacity is now a power-and-chips problem, not a software one.
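For anyone who wants the arithmetic behind that comparison, here is a quick sketch. It uses only the two figures quoted above; the 750,000-homes-per-gigawatt number is a rule of thumb, not a measured value, and the variable names are mine.

```python
# Figures quoted in this edition; HOMES_PER_GW is a rule of thumb.
HOMES_PER_GW = 750_000
AMAZON_GW = 5.0            # the "up to 5 GW" Amazon expansion
GOOGLE_BROADCOM_GW = 3.5   # the announcement covered in Edition 6

total_gw = AMAZON_GW + GOOGLE_BROADCOM_GW
homes_equivalent = total_gw * HOMES_PER_GW

print(f"{total_gw} GW is roughly {homes_equivalent:,.0f} homes")
# prints: 8.5 GW is roughly 6,375,000 homes
```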

Why it matters: I have stopped treating frontier model capacity as a vendor problem and started treating it as a power-grid and procurement problem. Eight and a half gigawatts spread across both AWS and GCP gives Anthropic optionality that no other lab has. For anyone running Claude through Bedrock, this is the supply-side answer to “will I get the inference capacity I am paying for in 2027?”: yes, and probably faster than the Azure-OpenAI lane.

What to do: If your Claude usage runs through AWS Bedrock, ask your AE which Trainium-class capacity gets prioritized to enterprise contracts versus the consumer-facing API. Push for committed-throughput language in the next renewal. The supply story is now strong enough to negotiate against.

3. Anthropic and NEC build Japan’s largest AI engineering workforce

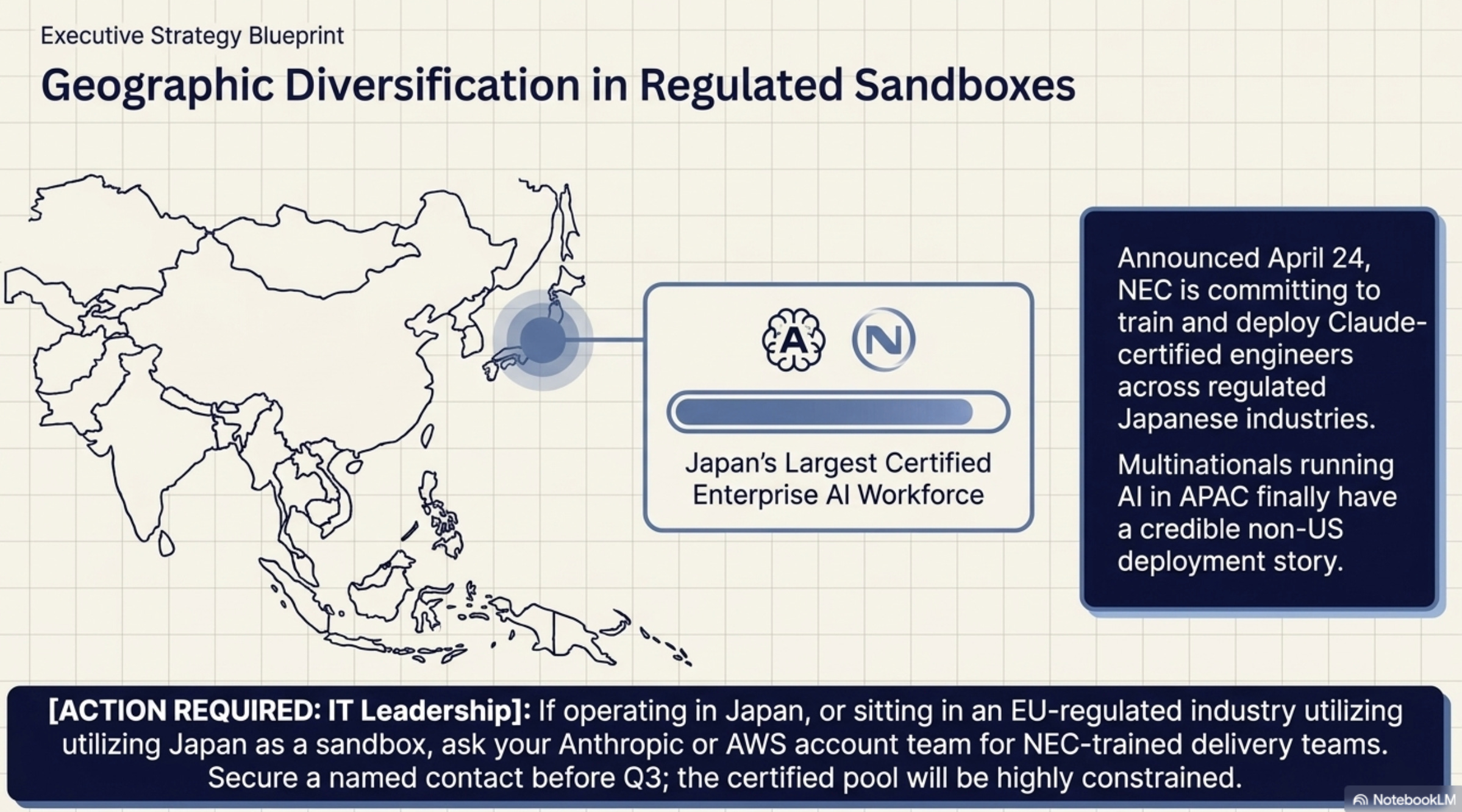

What happened: Anthropic and NEC announced on April 24 a partnership to build Japan’s largest enterprise AI engineering workforce, with NEC committing to train and deploy Claude-certified engineers across regulated Japanese industries. The deal positions Anthropic as the model layer for Japan’s “innovation-first” AI policy stance and gives NEC’s enterprise customers a non-OpenAI default.

Why it matters: Geographic diversification of frontier AI is real now, not theoretical. Every multinational running AI in APAC has been waiting for a credible non-US deployment story for regulated workloads, and the Japan story is the cleanest one yet: a predictable regulator, a deep enterprise integration culture, and now a named workforce pipeline. The procurement question shifts from “which model?” to “which model in which jurisdiction?”

What to do: If you have Japan operations, or sit in an EU regulated industry watching Japan as a sandbox jurisdiction, ask your Anthropic or AWS account team about NEC-trained delivery teams. Get a named contact before Q3. The certified pool will be small for the first six months.

4. CrowdStrike launches Project QuiltWorks with OpenAI and Anthropic

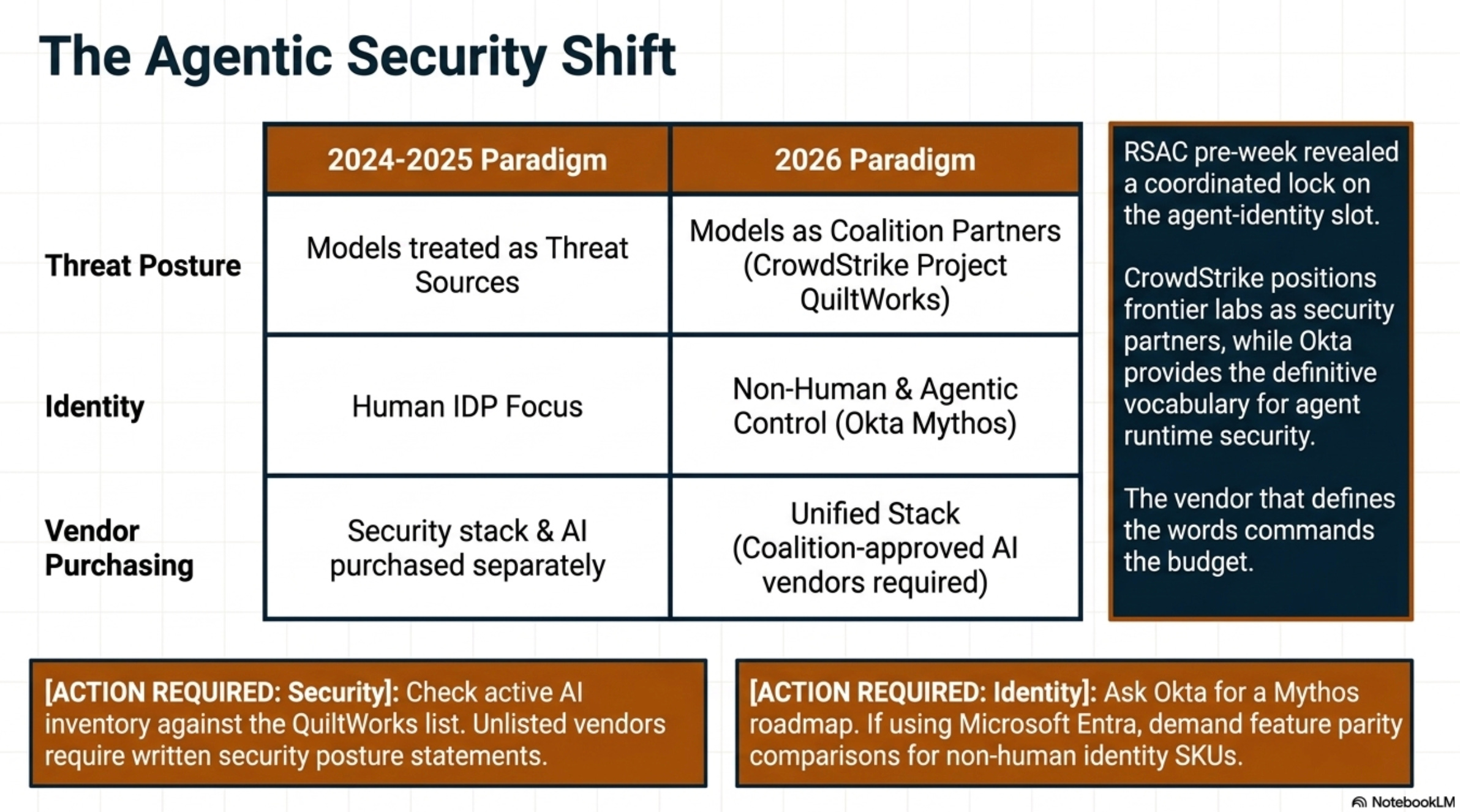

What happened: CrowdStrike announced Project QuiltWorks on April 23, described as a cybersecurity industry coalition with OpenAI and Anthropic to “close the AI vulnerability gap” as frontier models accelerate risk. The labeling positions frontier model providers as security partners rather than threat sources. That is a big departure from the 2024-2025 narrative.

Why it matters: This is the first time the model labs and the security industry have formed a public, named, jointly-branded program. The coalition label matters because it tells procurement teams that “the AI vendor’s security posture” and “your security stack’s coverage” are no longer separate purchase decisions. Whoever doesn’t have a coalition-approved AI vendor on their roster in 2026 will be answering questions about it on a board call in 2027.

What to do: Ask CrowdStrike directly which AI vendors are inside the coalition versus outside. Run that list against your active AI vendor inventory before next quarter’s risk review. If you have a vendor that didn’t make the list, you need a written security posture statement from them on file.

5. Okta and Anthropic ship “Mythos” — agent identity gets a name

What happened: Okta published a piece on April 23 explaining what Anthropic’s Mythos means for identity security, one day after its April 22 guidance on what CISOs need to know about AI agent runtime security and weeks after its March 16 blueprint for the secure agentic enterprise. Together with the Cross App Access Protocol launch, Okta is staking the most-cited claim on agent identity heading into RSA Conference.

Why it matters: Okta is now the first IDP to ship a coherent vocabulary for the unsolved enterprise control question of “which agent, which scope, which data”: Mythos as the brand, Cross App Access Protocol as the mechanism, agent runtime security as the operational frame. The vendor that defines the vocabulary usually has dibs on the budget.

What to do: If your identity provider is Okta, ask for a Mythos roadmap commitment with delivery dates before Q3 closes. If it is Microsoft Entra, ask the equivalent question about non-human identity SKUs and demand a feature parity comparison. The IDP that wins this gets renewed for the next decade.

6. Hyperscaler Q1 capex prints — the ratio is the signal

What happened: Microsoft, Alphabet, and Meta reported their Q1 2026 earnings on April 23 and 24. Besides the headline revenue, the number that matters is how much they spent on AI infrastructure (data centers, GPUs, networking) versus how much AI revenue they pulled in. Also scan the earnings call transcripts for any mention of chip wait times and how much reserved AI capacity is still available for new customers. Watch the Microsoft IR site, Alphabet IR, and Meta IR for transcripts.

Why it matters: When these three companies are spending more than 3× their AI revenue on infrastructure, it tells you GPUs are still scarce and your per-call AI pricing will not get cheaper in 2026. If that ratio drops, even a little, it is the first sign that demand for AI compute is cooling somewhere, which means your account team’s leverage is fading and you can push back on inference pricing in Q3. Either direction, this number drives every “lock in capacity now or wait?” call you make this quarter.

What to do: Review the three transcripts this weekend. If spend-to-AI-revenue is still above 3×, lock in 2027 reserved capacity now while your account exec still has discount budget to deploy. If it is softening, hold off on commitments and reopen the inference pricing conversation in Q3.
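If you want to turn the 3× rule into something you can rerun each quarter, here is a minimal sketch. The `QuarterPrint` class, the `capacity_call` function, and every dollar figure below are hypothetical placeholders, not reported numbers; you would fill them in from the transcripts. It also simplifies "softening" to "at or below the threshold", which ignores quarter-over-quarter trend.

```python
from dataclasses import dataclass

THRESHOLD = 3.0  # the 3x spend-to-AI-revenue line used in this edition


@dataclass
class QuarterPrint:
    company: str
    ai_capex_usd_bn: float    # AI infrastructure spend, your own estimate
    ai_revenue_usd_bn: float  # AI revenue, your own estimate


def capacity_call(prints, threshold=THRESHOLD):
    """Return (combined ratio, recommended action) for a set of prints."""
    total_capex = sum(p.ai_capex_usd_bn for p in prints)
    total_revenue = sum(p.ai_revenue_usd_bn for p in prints)
    if total_revenue <= 0:
        raise ValueError("AI revenue must be positive to compute a ratio")
    ratio = total_capex / total_revenue
    if ratio > threshold:
        return ratio, "lock in 2027 reserved capacity now"
    return ratio, "hold commitments and reopen inference pricing in Q3"


# Placeholder inputs only; replace with figures from the earnings calls.
example = [
    QuarterPrint("Company A", ai_capex_usd_bn=40.0, ai_revenue_usd_bn=10.0),
    QuarterPrint("Company B", ai_capex_usd_bn=30.0, ai_revenue_usd_bn=10.0),
]
ratio, action = capacity_call(example)
print(f"{ratio:.2f}x: {action}")
```

The combined ratio pools spend and revenue across companies rather than averaging per-company ratios, so one small outlier does not swing the call.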

7. EU AI Act — 100 days to high-risk system applicability

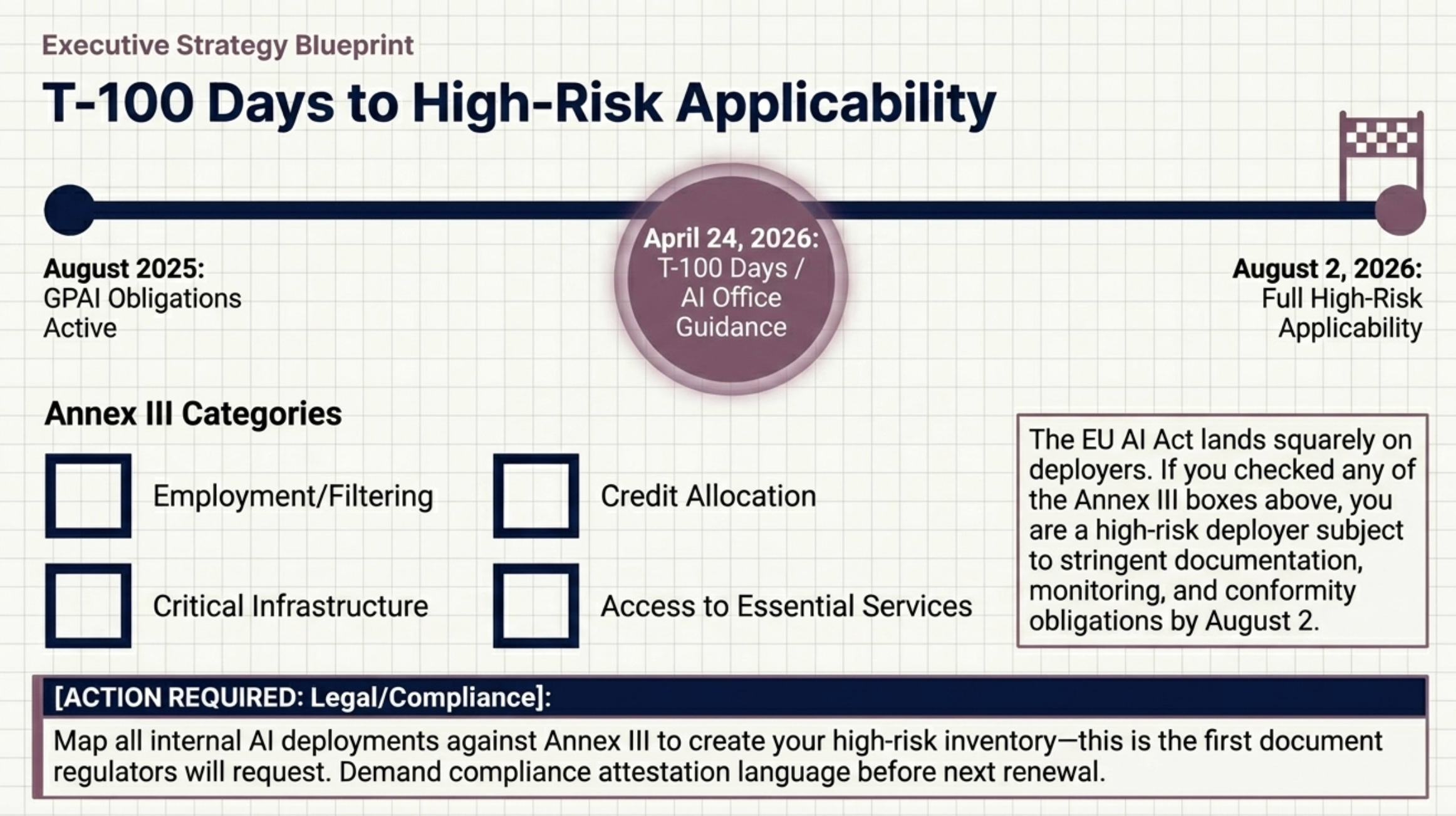

What happened: August 2, 2026 is the full applicability date for high-risk AI systems under the EU AI Act — Annex III categories including employment, education, critical infrastructure, and access to essential services. GPAI obligations have been in force since August 2025; this next milestone is the high-risk operational deadline. April 24 marked T-100, and the AI Office is publishing implementation guidance through April and May.
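The T-100 count is easy to check and to keep current. A small sketch using Python's standard `datetime` module; the function name is my own.

```python
from datetime import date

# High-risk applicability date stated in this edition.
HIGH_RISK_APPLICABILITY = date(2026, 8, 2)


def days_remaining(today: date) -> int:
    """Days from `today` until the high-risk applicability date."""
    return (HIGH_RISK_APPLICABILITY - today).days


print(days_remaining(date(2026, 4, 24)))  # prints 100
```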

Why it matters: I suspect many CIOs and CPOs are still treating the AI Act as a vendor problem. If your organization runs an AI system that filters job applications, allocates credit, manages critical infrastructure, or scores access to public services, you are the deployer of a high-risk system as of August 2, with documentation, monitoring, and conformity obligations attached.

What to do: Have legal pull every active AI vendor contract this week. Map every internal AI deployment against the Annex III categories. That map is your high-risk inventory, and it is what regulators will ask for first. Ask for AI Act compliance attestation language from vendors before next renewal.
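The mapping exercise can start as a very small script. This is a sketch under loud assumptions: the system names, vendors, and category tags below are invented for illustration, the category set contains only the four Annex III areas named in this edition (the Act lists more), and the tags are self-assigned, so legal still has to confirm each one.

```python
from collections import defaultdict

# Only the Annex III areas named in this edition; not the full list.
ANNEX_III_NAMED = {
    "employment",
    "education",
    "critical infrastructure",
    "essential services",
}

# Hypothetical deployments: (system name, vendor, self-assigned category).
deployments = [
    ("resume-screener", "VendorA", "employment"),
    ("grid-load-forecaster", "VendorB", "critical infrastructure"),
    ("marketing-copy-assistant", "VendorC", "marketing"),
]


def high_risk_inventory(items):
    """Group deployments whose category matches a named Annex III area."""
    inventory = defaultdict(list)
    for name, vendor, category in items:
        if category in ANNEX_III_NAMED:
            inventory[category].append((name, vendor))
    return dict(inventory)


inventory = high_risk_inventory(deployments)
for category, systems in inventory.items():
    print(category, systems)
```

Anything that lands in the inventory is a candidate for the vendor attestation request; anything that does not still deserves a second look from legal before you call it out of scope.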

What caught your eye for your organization, and why?

References:

Oracle Newsroom — AI Database Agent for Gemini Enterprise: https://www.oracle.com/news/announcement/oracle-expands-powerful-ai-capabilities-in-oracle-ai-database-at-google-cloud-to-supercharge-enterprise-data-innovation-2026-04-22/

Anthropic + Amazon compute: https://www.anthropic.com/news/anthropic-amazon-compute

Anthropic + NEC Japan workforce: https://www.anthropic.com/news/anthropic-nec

CrowdStrike Press Releases: https://www.crowdstrike.com/press-releases/

Okta Newsroom: https://www.okta.com/press-room/

Microsoft Investor Relations: https://www.microsoft.com/en-us/Investor

Alphabet Investor Relations: https://abc.xyz/investor

Meta Investor Relations: https://investor.atmeta.com

European Commission — AI Act: https://digital-strategy.ec.europa.eu/en/policies/regulatory-framework-ai