AI Waypoints: Week of April 13, 2026 — Edition #5

Seven enterprise AI signals for the week. 600 AI bills, a federal vacuum, and the state that will set the standard anyway.

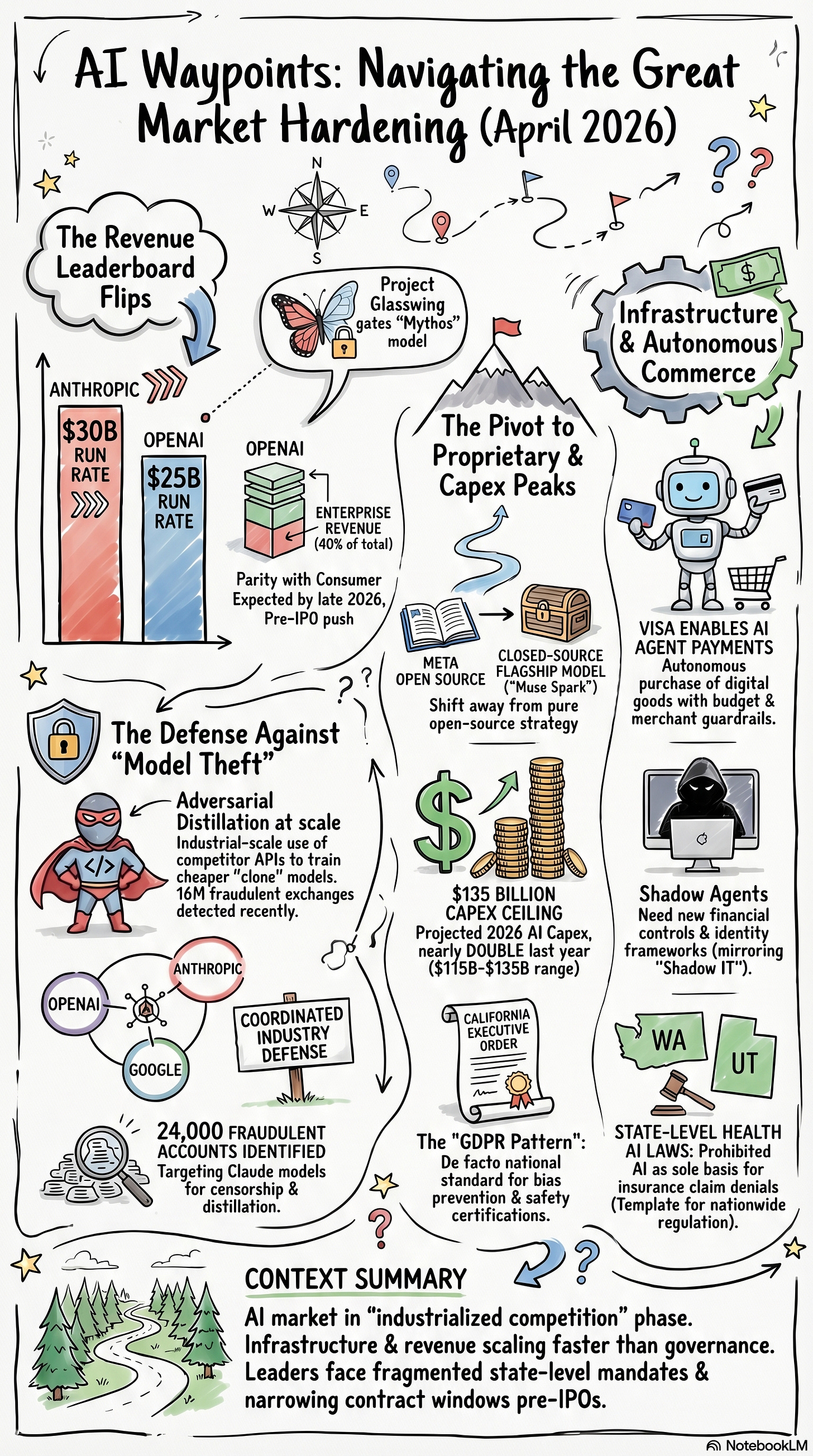

Good morning. The AI market crossed several thresholds this week that don’t reverse easily. Anthropic’s revenue tripled in four months, Meta shipped its first proprietary model, and Visa built the payment rails for AI agents to spend money on their own. Is the infrastructure hardening faster than the governance around it?

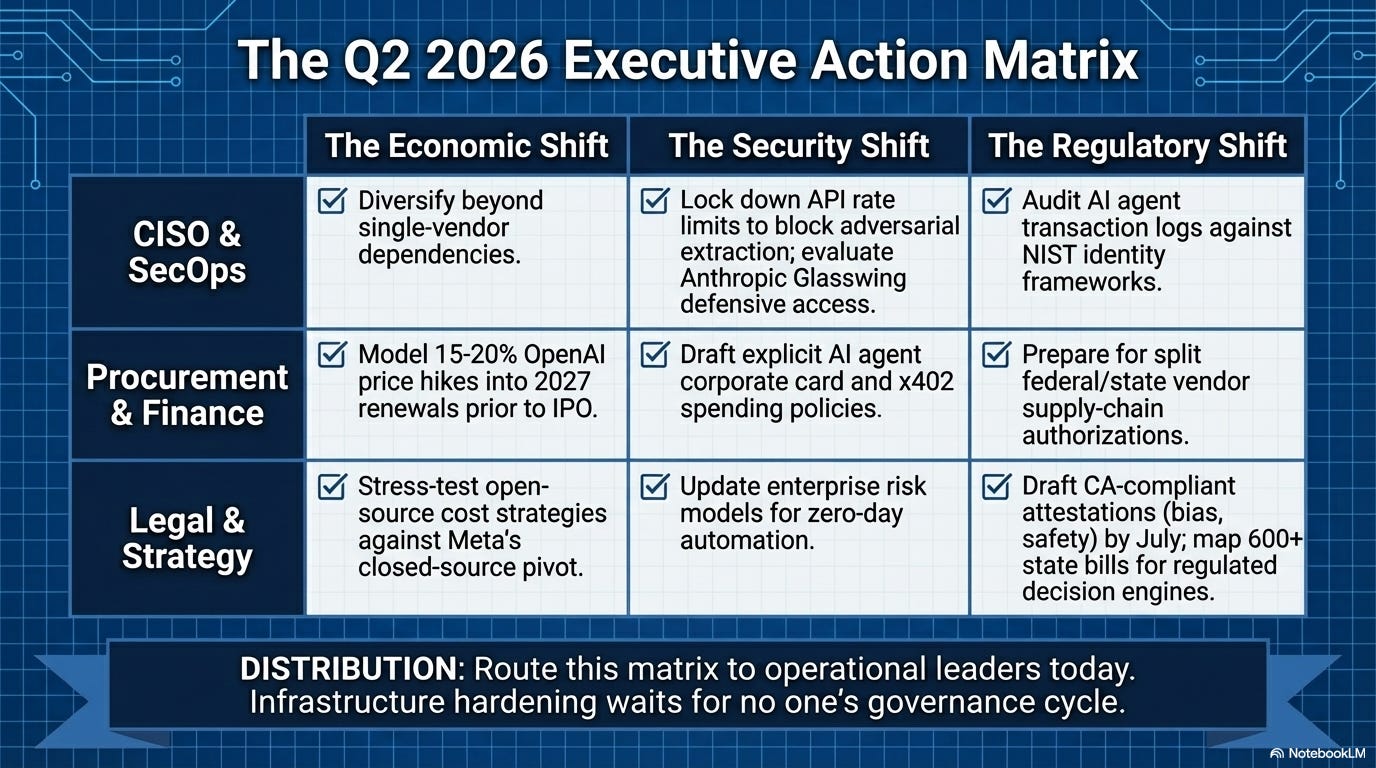

1. Anthropic hits $30B and locks its most dangerous model behind Project Glasswing

What happened: Anthropic’s annualized revenue run rate crossed $30 billion on April 7, up from $9 billion at the end of 2025. Over 1,000 enterprise customers now spend more than $1 million annually. Separately, Anthropic revealed that Claude Mythos, the model that surfaced in a misconfigured content store two weeks ago, has identified thousands of previously unknown zero-day vulnerabilities across every major operating system and web browser. Mythos will not be publicly released. Twelve named launch partners (including Amazon, Apple, Microsoft, CrowdStrike, and Palo Alto Networks), plus over 40 other organizations, get gated access through Project Glasswing, backed by $100 million in usage credits and $4 million in donations to open-source security organizations.

Why it matters: Two weeks ago, Mythos was an accidental leak. Now it’s an official program with 50+ partner organizations and a nine-figure commitment. A model that finds zero-days at scale rewrites the math for every security team. But the revenue number matters more: $9B to $30B in four months, while OpenAI sits at $25B. The competitive map has shifted, at least for this month. If you’re running vendor decisions based on “OpenAI is the market leader,” that assumption needs revisiting.

What to do: If you’re an Anthropic customer, ask about Glasswing eligibility and what defensive capabilities it unlocks for your security team. If you’re evaluating model vendors, the revenue trajectory says something about adoption velocity. Get a briefing before your next vendor decision locks in.

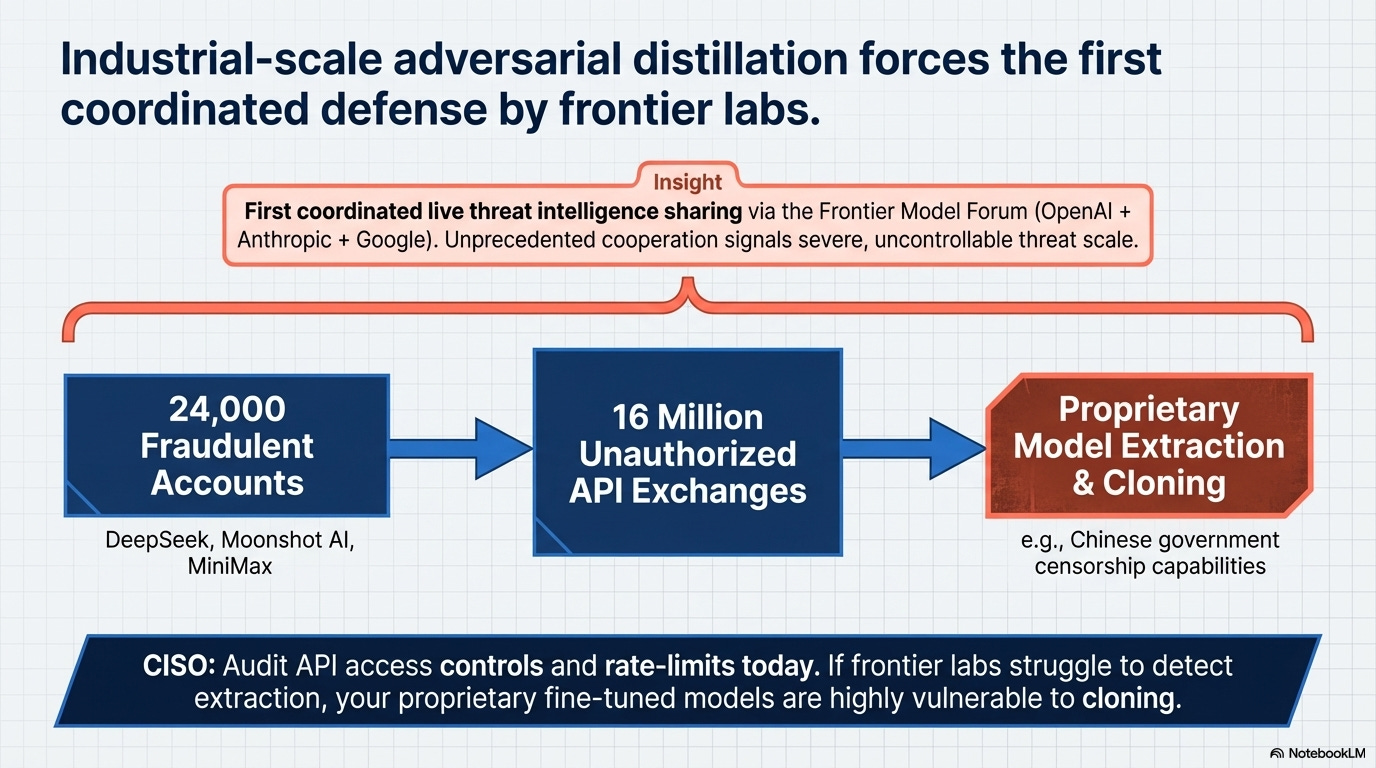

2. OpenAI, Anthropic, and Google activate first coordinated defense against model theft

What happened: On April 6, OpenAI, Anthropic, and Google announced they are sharing attack-pattern intelligence through the Frontier Model Forum, the first time the industry nonprofit has run a live threat-intelligence effort against specific adversaries. Three Chinese AI companies are named: DeepSeek, Moonshot AI, and MiniMax. Anthropic disclosed that these firms collectively generated over 16 million exchanges with Claude through roughly 24,000 fraudulent accounts.

Why it matters: Three companies that compete on everything else just agreed to share threat intelligence. That only happens when the problem is too large for individual defenses. The 16 million exchanges through 24,000 fraudulent accounts confirm that adversarial distillation (using a competitor’s API to train a cheaper clone) is happening at industrial scale. If the labs themselves struggle to detect unauthorized model extraction in real time, what does that say about the proprietary data flowing through those same APIs?

What to do: Ask your AI vendor how they detect adversarial distillation attempts against fine-tuned models or custom deployments. If the answer is “we don’t,” that’s a conversation for your CISO. Review your API access controls and rate-limit configurations. The fraudulent-account vector is the most straightforward one to close.
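For teams reviewing rate-limit configurations, a per-account sliding-window limiter is one common starting point. The sketch below is a minimal illustration, not any vendor's implementation: the class name, the 60-second window, and the limit of 100 requests are all assumed values chosen for the example.

```python
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Hypothetical limiter: at most `limit` requests per account per window."""

    def __init__(self, limit: int = 100, window_seconds: float = 60.0):
        self.limit = limit
        self.window_seconds = window_seconds
        # account id -> timestamps (seconds) of recently allowed requests
        self._hits = defaultdict(deque)

    def allow(self, account_id: str, now: float) -> bool:
        hits = self._hits[account_id]
        # Drop timestamps that have aged out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.limit:
            return False  # over the limit: reject and do not record
        hits.append(now)
        return True


limiter = SlidingWindowLimiter(limit=2, window_seconds=10.0)
print([limiter.allow("acct-1", t) for t in (0.0, 1.0, 2.0)])
```

A per-account limit alone would not catch traffic spread thinly across thousands of accounts, which is the pattern described above, so treat it as a first layer alongside account verification and aggregate monitoring.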

3. Meta ships Muse Spark — the open-source champion goes proprietary

What happened: On April 8, Meta launched Muse Spark, its first proprietary frontier AI model, built by Meta Superintelligence Labs under Alexandr Wang, Meta’s Chief AI Officer and former CEO of Scale AI. The company that shaped the open-source AI ecosystem with Llama now ships its flagship as closed-source, though it says it may open-source future versions. Meta also disclosed that its 2026 AI capital expenditure will run between $115 billion and $135 billion, nearly double last year’s figure.

Why it matters: Meta open-sourcing Llama was the single biggest reason buyers could credibly threaten “we’ll run our own model” in vendor negotiations. That leverage just got weaker. Muse Spark going proprietary doesn’t kill open-source AI (Gemma 4 shipped last week, and Meta hints at future open-weight releases), but it signals Meta’s priorities have shifted from ecosystem influence to direct competition with OpenAI and Anthropic. The $135 billion capex ceiling points in the same direction.

What to do: If your AI strategy depends on open-weight model availability for cost, data residency, or regulatory reasons, verify that Llama 4 variants still meet your performance requirements. Don’t assume Meta’s next release will be open-weight. Diversify across Gemma, Mistral, and Llama rather than betting on a single family.

4. SAP bundles AI into two-thirds of cloud deals as AI agents go GA

What happened: SAP’s Q4 FY2025 results confirmed that two-thirds of cloud orders now include Business AI, up from roughly half the prior quarter. Joule adoption grew ninefold through 2025. Ninety percent of SAP’s 50 largest deals included either AI or Business Data Cloud. In Q1 2026, SAP shipped three AI agents to general availability: a Cash Management Agent that SAP claims cuts manual reconciliation time by 80%, a Production Planning Agent that autonomously validates and releases production orders, and the Joule Studio agent builder that lets customers create their own agents. CEO Christian Klein told investors: “We are winning deals because of AI; we are not losing deals because of AI.”

Why it matters: Most of the AI conversation lives in the frontier lab layer — OpenAI, Anthropic, Google. SAP bundling AI into two-thirds of cloud contracts is a different kind of signal. This isn’t an optional add-on. It’s becoming a default component of the ERP renewal conversation. For the thousands of organizations running SAP, the AI governance question isn’t “should we adopt?” anymore. It’s “how do we govern what’s already arriving inside our next contract?” The Cash Management and Production Planning agents touch financial data and manufacturing operations directly. It’s agentic AI inside core business processes, shipped by the vendor, with or without a formal AI strategy from the customer.

What to do: If you run SAP, check whether your next cloud renewal includes bundled Business AI and what that means for your data governance posture. The agents shipping in Q1 touch financial reconciliation and production operations, so make sure your AI governance framework covers vendor-embedded agents, not just the ones your teams build. If you’re negotiating a new SAP cloud contract, understand what Joule capabilities are included by default and whether you can scope or disable them.

5. California signs AI procurement executive order — largest state market sets the standard

What happened: Governor Newsom signed Executive Order N-5-26 on March 30, directing state agencies to develop vendor certification requirements for AI companies seeking state contracts. Agencies have 120 days to build those requirements, including attestations on bias prevention, civil rights protection, and content safety. The order also directs California’s CISO to review federal supply-chain risk designations and, if found improper, issue guidance allowing state agencies to continue buying from companies the federal government has blacklisted.

Why it matters: California is the nation’s largest state market for AI products. When California sets standards, vendors build to them whether they sell to the state or not. This is the GDPR pattern: one large buyer sets the floor, compliance infrastructure scales to cover everyone. The 120-day timeline means certification requirements land in late July. The federal breakaway clause matters too: California can run its AI buying pipeline independently of White House trade policy, creating a split market for any vendor caught between federal and state requirements.
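The late-July estimate can be checked with simple date arithmetic. This sketch assumes the 120-day clock starts on the March 30 signing date and counts calendar days; the order's actual counting rules may differ.

```python
from datetime import date, timedelta

# Assumption: the clock starts on the signing date and runs in calendar days.
signed = date(2026, 3, 30)
deadline = signed + timedelta(days=120)

print(deadline.isoformat())  # 2026-07-28
```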

What to do: If you sell AI products or services to government entities, start drafting your bias, civil rights, and content safety attestations now. These requirements will propagate beyond California. If you’re buying AI tools, use the certification framework as a free audit checklist (link below in references) for evaluating your own vendors once the requirements are published.

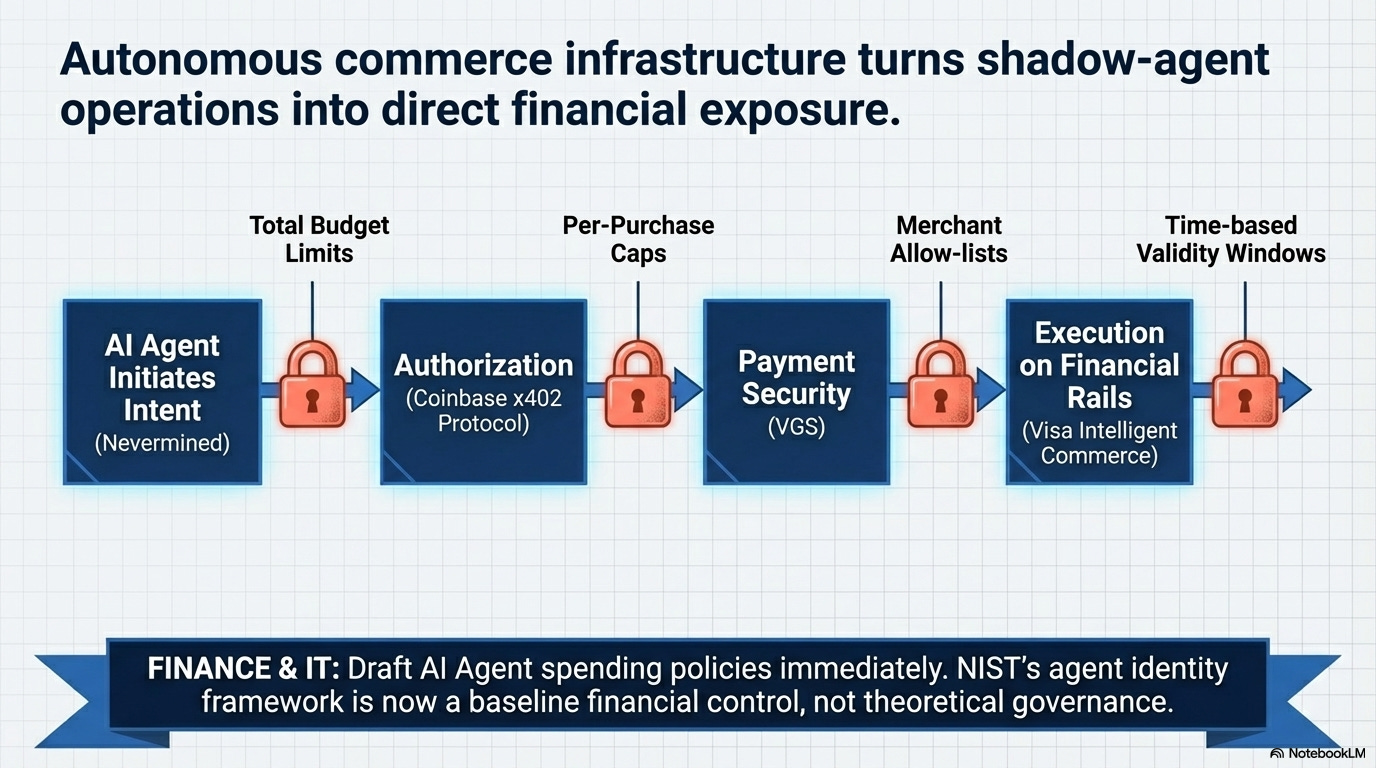

6. Visa enables AI agent card payments — autonomous commerce gets infrastructure

What happened: On April 9, Nevermined integrated Visa Intelligent Commerce, Coinbase’s x402 protocol, and VGS payment security to allow AI agents to autonomously purchase digital goods and services using registered Visa cards. The system includes guardrails: total budget limits, per-purchase caps, merchant restrictions, and time-based validity windows.

Why it matters: Agents that browse the web and write code have existed for months. Agents that can spend money are new. The guardrail architecture (budget caps, merchant allow-lists, time windows) is the right framework, but it means organizations now need agent spending policies just like they have corporate card policies. NIST’s AI agent identity framework just got more urgent. If agents transact autonomously, identity, authorization, and audit trails aren’t governance theory. They’re financial controls.

What to do: Check whether anyone in your organization is already delegating payment credentials to AI agents. The shadow-agent pattern mirrors shadow IT. If you’re building agents that need to transact, evaluate Visa Intelligent Commerce against your existing corporate card infrastructure. Draft agent spending policies now (budget limits, approval workflows, audit requirements) before the need arrives.
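To make the guardrail categories concrete, here is a minimal sketch of an agent spending policy that enforces the four controls named above: total budget, per-purchase cap, merchant allow-list, and validity window. It is an illustration only and does not reflect the Visa Intelligent Commerce or Nevermined APIs. The class, field names, and example merchant are hypothetical, and amounts are assumed to be integer cents.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class AgentSpendingPolicy:
    """Hypothetical policy object; all amounts are integer cents."""
    total_budget: int
    per_purchase_cap: int
    allowed_merchants: frozenset
    valid_from: datetime
    valid_until: datetime
    spent: int = 0

    def authorize(self, merchant: str, amount: int, when: datetime) -> tuple[bool, str]:
        """Check a purchase against every guardrail; record it only if all pass."""
        if amount <= 0:
            return False, "invalid amount"
        if not (self.valid_from <= when <= self.valid_until):
            return False, "outside validity window"
        if merchant not in self.allowed_merchants:
            return False, "merchant not on allow-list"
        if amount > self.per_purchase_cap:
            return False, "exceeds per-purchase cap"
        if self.spent + amount > self.total_budget:
            return False, "exceeds total budget"
        self.spent += amount
        return True, "approved"


policy = AgentSpendingPolicy(
    total_budget=10_000,
    per_purchase_cap=5_000,
    allowed_merchants=frozenset({"api.example.com"}),
    valid_from=datetime(2026, 4, 13),
    valid_until=datetime(2026, 4, 20),
)
print(policy.authorize("api.example.com", 4_000, datetime(2026, 4, 14)))
```

Returning a reason string with each decision gives the audit trail something to record. A production policy would also persist every decision and route rejected purchases into an approval workflow.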

7. States pass AI health insurance laws as 600+ bills pile up nationwide

What happened: Washington gave final approval to SB 5395 and Utah signed SB 319, both prohibiting health insurers from using AI as the sole basis for denying or modifying claims. These join a larger wave: state lawmakers have introduced over 600 AI-related bills in 2026 sessions so far. Meanwhile, federal Executive Order 14365’s mandated deadlines, including a Commerce Department evaluation of state AI laws and the establishment of an AI Litigation Task Force to challenge them, have passed without action.

Why it matters: The regulatory center of gravity in the US has shifted to the states, and health insurance is the leading edge. Washington and Utah’s laws are narrowly targeted (AI can’t be the sole basis for denial), but they set a template other states will copy. For insurers and healthtech companies, the compliance map just got more fragmented: rules vary by state, federal preemption isn’t materializing, and 600+ bills mean more restrictions are arriving every session. EO 14365 tried to preempt state regulation, but the task force created to challenge state laws hasn’t filed a single action. States noticed.

What to do: If you operate in health insurance or process claims using AI, map which states’ laws apply to your operations now. A unified federal framework isn’t coming this year. If you deploy AI in any regulated decision (credit scoring, hiring, customer risk), track the 600+ bill pipeline. The health insurance restrictions are a template that will reach other industries.

What signal did I miss? If you’re tracking AI developments in energy infrastructure, defense, or financial services regulation that should have made the cut, hit reply.

Past editions:

References: